Preface

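Bored at home over the weekend, I was scrolling through WeChat Moments, saw the latest progress on Hudi on Flink, and on a whim decided to try it on my own laptop. This post covers the preparation: building the latest Hadoop and Flink from source and deploying them.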

Building Hadoop 3.2.2 from source

Source download:

https://github.com/apache/hadoop/archive/rel/release-3.2.2.tar.gz

My operating system:

root@felixzh:~# cat /proc/version

Linux version 4.4.0-31-generic (buildd@lgw01-43) (gcc version 4.8.4 (Ubuntu 4.8.4-2ubuntu1~14.04.3) ) #50~14.04.1-Ubuntu SMP Wed Jul 13 01:07:32 UTC 2016

If you are interested, see the official documentation:

https://github.com/apache/hadoop/blob/trunk/BUILDING.txt

The part I followed is:

Installing required packages for clean install of Ubuntu 14.04 LTS Desktop:

* Oracle JDK 1.8 (preferred)

$ sudo apt-get purge openjdk*

$ sudo apt-get install software-properties-common

$ sudo add-apt-repository ppa:webupd8team/java

$ sudo apt-get update

$ sudo apt-get install oracle-java8-installer

* Maven

$ sudo apt-get -y install maven

* Native libraries

$ sudo apt-get -y install build-essential autoconf automake libtool cmake zlib1g-dev pkg-config libssl-dev libsasl2-dev

* ProtocolBuffer 2.5.0 (required)

$ sudo apt-get -y install protobuf-compiler

The build command I used:

mvn clean package -Pdist,native -DskipTests -Dtar
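Before starting a build of roughly half an hour, it can help to confirm that the tools from the package list are actually on the PATH. The following is a minimal sketch; the helper name `check_tools` is hypothetical and not part of Hadoop, and the tool list is my assumption based on the packages above.

```shell
#!/bin/sh
# Pre-build sanity check (a sketch, not part of the Hadoop build itself).
# Prints one line per tool and returns non-zero if any tool is missing.
check_tools() {
    missing=0
    for tool in "$@"; do
        if command -v "$tool" >/dev/null 2>&1; then
            printf 'ok       %s\n' "$tool"
        else
            printf 'MISSING  %s\n' "$tool"
            missing=1
        fi
    done
    return "$missing"
}

# Tools implied by the package list above; adjust to your setup.
check_tools java mvn cmake protoc || echo "install the missing tools before running mvn"

# BUILDING.txt asks for ProtocolBuffer 2.5.0, so show the version when protoc exists.
if command -v protoc >/dev/null 2>&1; then
    protoc --version
fi
```

If protoc reports a version other than 2.5.0, the native build is likely to fail early, so it is worth checking before running mvn.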

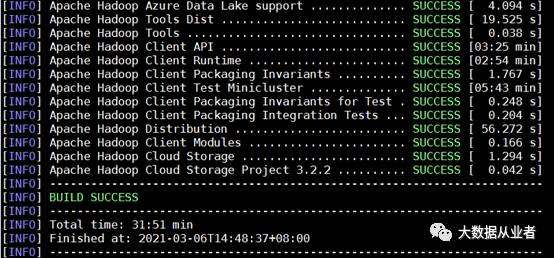

Build result (about half an hour if all goes well):

Building Flink 1.12.2 from source

Source download:

https://github.com/apache/flink/archive/release-1.12.2.tar.gz

If you are interested, see the official documentation:

https://ci.apache.org/projects/flink/flink-docs-release-1.12/flinkDev/building.html

The build command I used:

mvn clean install -DskipTests -Dfast -Dhadoop.version=3.2.2

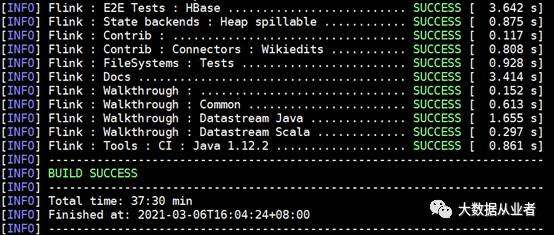

Build result (about 40 minutes if all goes well):

Deploying Hadoop 3.2.2

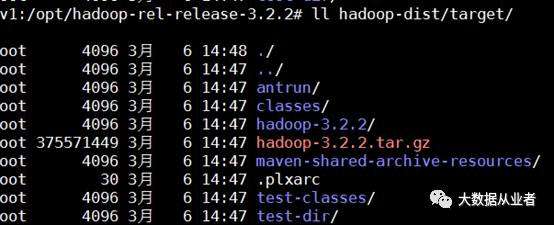

Copy the build output hadoop-3.2.2 to /opt/bigdata

cd /opt/bigdata/hadoop-3.2.2/etc/hadoop

Edit four configuration files:

core-site.xml

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://felixzh:9000</value>
  </property>
</configuration>

mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.application.classpath</name>
    <value>$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>felixzh:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>felixzh:19888</value>
  </property>
</configuration>

yarn-site.xml

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.env-whitelist</name>
    <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
  </property>
  <property>
    <name>yarn.nodemanager.remote-app-log-dir</name>
    <value>/tmp/logs</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>4096</value>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>2048</value>
  </property>
  <property>
    <name>yarn.scheduler.maximum-allocation-mb</name>
    <value>4096</value>
  </property>
  <property>
    <name>yarn.nodemanager.vmem-pmem-ratio</name>
    <value>5</value>
  </property>
</configuration>

hdfs-site.xml

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
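Hadoop site files are plain XML name/value pairs, and a misspelled property name or a broken tag is silently ignored at startup. A quick way to confirm that a value landed where intended is to read it back. The sketch below uses a hypothetical helper, `get_prop`, that I made up for illustration; it is a line-oriented awk scan rather than a real XML parser, and it assumes each `<name>` and `<value>` element sits on a single line.

```shell
#!/bin/sh
# get_prop FILE NAME: print the <value> that follows <name>NAME</name>.
# A sketch: assumes every <name> and <value> element fits on one line.
get_prop() {
    awk -v want="$2" '
        /<name>/ {
            name = $0
            sub(/.*<name>/, "", name); sub(/<\/name>.*/, "", name)
        }
        /<value>/ && name == want {
            val = $0
            sub(/.*<value>/, "", val); sub(/<\/value>.*/, "", val)
            print val
            exit
        }
    ' "$1"
}

# Demo on a throwaway copy of a core-site.xml fragment.
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://felixzh:9000</value>
  </property>
</configuration>
EOF
get_prop "$tmp" fs.defaultFS
rm -f "$tmp"
```

Once Hadoop itself is installed, `hdfs getconf -confKey fs.defaultFS` gives the value as Hadoop resolves it, which is the more authoritative check.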

Edit four scripts in /opt/bigdata/hadoop-3.2.2/sbin

Add the following to start-dfs.sh and stop-dfs.sh:

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

Add the following to start-yarn.sh and stop-yarn.sh:

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

Create a symbolic link (/opt/pkg/jdk/ is my JAVA_HOME)

ln -s /opt/pkg/jdk/bin/java /bin/java

Format HDFS

/opt/bigdata/hadoop-3.2.2/bin/hdfs namenode -format

Start HDFS and YARN

/opt/bigdata/hadoop-3.2.2/sbin/start-dfs.sh

/opt/bigdata/hadoop-3.2.2/sbin/start-yarn.sh
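After the two start scripts return, it is worth confirming that every daemon actually came up, since a failed daemon only shows in its log file. The sketch below compares `jps` output against the daemons a single-node HDFS plus YARN setup normally starts. The helper name `missing_daemons` is hypothetical, and the expected list is my assumption for this single-node layout.

```shell
#!/bin/sh
# Reads `jps` output ("<pid> <name>" per line) on stdin and prints
# each expected daemon name that does not appear in it.
missing_daemons() {
    running=$(awk '{print $2}')
    for d in "$@"; do
        # -x matches the whole line, so NameNode does not match SecondaryNameNode.
        printf '%s\n' "$running" | grep -qx "$d" || printf '%s\n' "$d"
    done
}

# Daemons assumed for a single-node HDFS + YARN deployment.
expected="NameNode DataNode SecondaryNameNode ResourceManager NodeManager"

if command -v jps >/dev/null 2>&1; then
    # $expected is deliberately unquoted so it splits into separate names.
    jps | missing_daemons $expected
fi
```

Empty output means all five daemons are running. With default settings in Hadoop 3, the NameNode web UI listens on port 9870 and the ResourceManager web UI on port 8088, unless the configuration overrides them.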

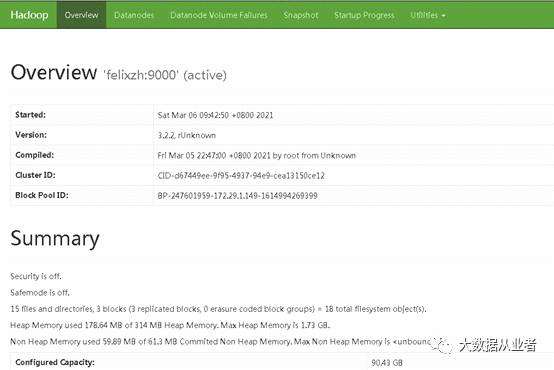

View the HDFS web UI

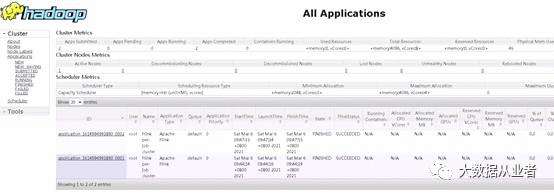

View the YARN web UI

Deploying Flink 1.12.2 on YARN

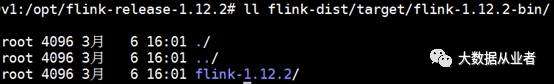

Copy the build output flink-1.12.2 to /opt/bigdata

Add the following to /opt/bigdata/flink-1.12.2/conf/flink-conf.yaml:

classloader.check-leaked-classloader: false

Set the environment variable

export HADOOP_CLASSPATH=/opt/bigdata/hadoop-3.2.2/share/hadoop/client/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/common/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/common/lib/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/hdfs/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/hdfs/lib/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/mapreduce/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/mapreduce/lib/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/yarn/*:/opt/bigdata/hadoop-3.2.2/share/hadoop/yarn/lib/*
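The long export above follows a regular pattern: the client directory, then each of common, hdfs, mapreduce and yarn together with its lib subdirectory. A minimal sketch that builds the same string from the install path, so that it is easier to adapt to another location, could look like this; the function name `build_hadoop_classpath` is hypothetical.

```shell
#!/bin/sh
# Build the HADOOP_CLASSPATH string from a Hadoop install directory.
# The "*" entries stay literal because they are always inside double quotes.
build_hadoop_classpath() {
    home=$1
    classpath="$home/share/hadoop/client/*"
    for d in common hdfs mapreduce yarn; do
        classpath="$classpath:$home/share/hadoop/$d/*:$home/share/hadoop/$d/lib/*"
    done
    printf '%s\n' "$classpath"
}

HADOOP_CLASSPATH=$(build_hadoop_classpath /opt/bigdata/hadoop-3.2.2)
export HADOOP_CLASSPATH
printf '%s\n' "$HADOOP_CLASSPATH"
```

If the `hadoop` command is on the PATH, the Flink documentation suggests the shorter form `export HADOOP_CLASSPATH=$(hadoop classpath)`, which asks Hadoop for its own classpath instead of spelling it out.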

Start a Flink cluster in YARN session mode

/opt/bigdata/flink-1.12.2/bin/yarn-session.sh