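I spent about ten minutes writing this little crawler, mainly to make job hunting a bit easier. I'm in my final year of university with no job and no internship, so I'm pretty anxious!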

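The crawler isn't built to be extensible; I just threw it together. Change `keyword` and `region` in the code and it will crawl all the matching job postings on Lagou (www.lagou.com). I first tested it at one request every 5 seconds, but still got banned, so I changed it to one request every 20 seconds. There's no multithreading and no proxy pool. I was lazy, and it's good enough. The results are saved to a CSV file that you can open in Excel.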
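The paging and throttling described above can be sketched on their own, separately from the HTTP details. This is a minimal single-threaded sketch, not the crawler itself: `crawl_all_pages` and the `fetch_page` callable are hypothetical names, the 15-results-per-page figure is the same assumption the crawler's `total_count -= 15` step makes, and `sleep` is injectable only so the logic can be tried offline with a fake fetcher.

```python
import math
import time
from typing import Callable, Dict, List


def crawl_all_pages(fetch_page: Callable[[int], Dict], per_page: int = 15,
                    delay: float = 20.0,
                    sleep: Callable[[float], None] = time.sleep) -> List:
    """Fetch every page one after another, pausing `delay` seconds in between.

    fetch_page(pn) is a hypothetical callable that returns
    {'totalCount': int, 'result': [...]} for 1-based page number pn.
    """
    first = fetch_page(1)
    total = int(first['totalCount'])
    pages = math.ceil(total / per_page)  # e.g. 31 postings -> 3 pages
    jobs = list(first['result'])
    for pn in range(2, pages + 1):
        sleep(delay)  # one thread, fixed pause, no proxy pool
        jobs.extend(fetch_page(pn)['result'])
    return jobs


# Offline usage with a fake fetcher standing in for the real request
def fake_fetch(pn: int) -> Dict:
    data = list(range(31))
    return {'totalCount': 31, 'result': data[(pn - 1) * 15: pn * 15]}


pauses: List[float] = []
jobs = crawl_all_pages(fake_fetch, sleep=pauses.append)
print(len(jobs), pauses)  # 31 [20.0, 20.0]
```

Computing the page count up front with `math.ceil` visits the same pages as the crawler's decrement-by-15 loop; unlike that loop, this version does not sleep after the last page.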

Here's the code:

import requests

import urllib.parse

import json

import time

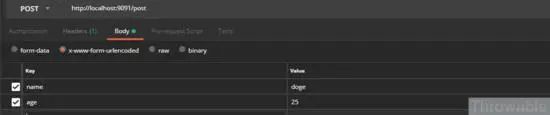

import csvdef main():keyword &#61; &#39;逆向&#39;region &#61; &#39;全国&#39;headers &#61; {&#39;Accept&#39;: &#39;application/json, text/Javascript, */*; q&#61;0.01&#39;,&#39;Accept-Encoding&#39;: &#39;gzip, deflate, br&#39;,&#39;Accept-Language&#39;: &#39;zh-CN,zh;q&#61;0.9&#39;,&#39;Cache-Control&#39;: &#39;no-cache&#39;,&#39;Connection&#39;: &#39;keep-alive&#39;,&#39;Content-Length&#39;: &#39;37&#39;,&#39;Content-Type&#39;: &#39;application/x-www-form-urlencoded; charset&#61;UTF-8&#39;,&#39;Host&#39;: &#39;www.lagou.com&#39;,&#39;Origin&#39;: &#39;https://www.lagou.com&#39;,&#39;Pragma&#39;: &#39;no-cache&#39;,&#39;Referer&#39;: &#39;https://www.lagou.com/jobs/list_%s?city&#61;%s&#39; % (urllib.parse.quote(keyword), urllib.parse.quote(region)),&#39;User-Agent&#39;: &#39;Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.81 Safari/537.36&#39;,&#39;X-Anit-Forge-Code&#39;: &#39;0&#39;,&#39;X-Anit-Forge-Token&#39;: &#39;None&#39;,&#39;X-Requested-With&#39;: &#39;XMLHttpRequest&#39;,}data &#61; {&#39;pn&#39;: 1,&#39;kd&#39;: keyword,}total_count &#61; 1pn &#61; 1jobjson &#61; []while 1:if total_count <&#61; 0:breakdata[&#39;pn&#39;] &#61; pnlagou_reverse_search &#61; requests.post("https://www.lagou.com/jobs/positionAjax.json?needAddtionalResult&#61;false", headers&#61;headers, data&#61;data)datajson &#61; json.loads(lagou_reverse_search.text)print(&#39;page %d get finish&#39; % pn)if pn &#61;&#61; 1:total_count &#61; int(datajson[&#39;content&#39;][&#39;positionResult&#39;][&#39;totalCount&#39;])jobjson &#43;&#61; [{&#39;positionName&#39;: j[&#39;positionName&#39;], &#39;salary&#39;: j[&#39;salary&#39;], &#39;workYear&#39;: j[&#39;workYear&#39;], &#39;education&#39;: j[&#39;education&#39;], &#39;city&#39;: j[&#39;city&#39;], &#39;industryField&#39;: j[&#39;industryField&#39;], &#39;companyShortName&#39;: j[&#39;companyShortName&#39;], &#39;financeStage&#39;: j[&#39;financeStage&#39;]} for j in 
datajson[&#39;content&#39;][&#39;positionResult&#39;][&#39;result&#39;]]total_count -&#61; 15pn &#43;&#61; 1time.sleep(20)csv_header &#61; [&#39;positionName&#39;, &#39;salary&#39;, &#39;workYear&#39;, &#39;education&#39;, &#39;city&#39;, &#39;industryField&#39;, &#39;companyShortName&#39;, &#39;financeStage&#39;]with open(&#39;job.csv&#39;,&#39;w&#39;) as f:f_csv &#61; csv.DictWriter(f, csv_header)f_csv.writeheader()f_csv.writerows(jobjson)if __name__ &#61;&#61; &#39;__main__&#39;:main()

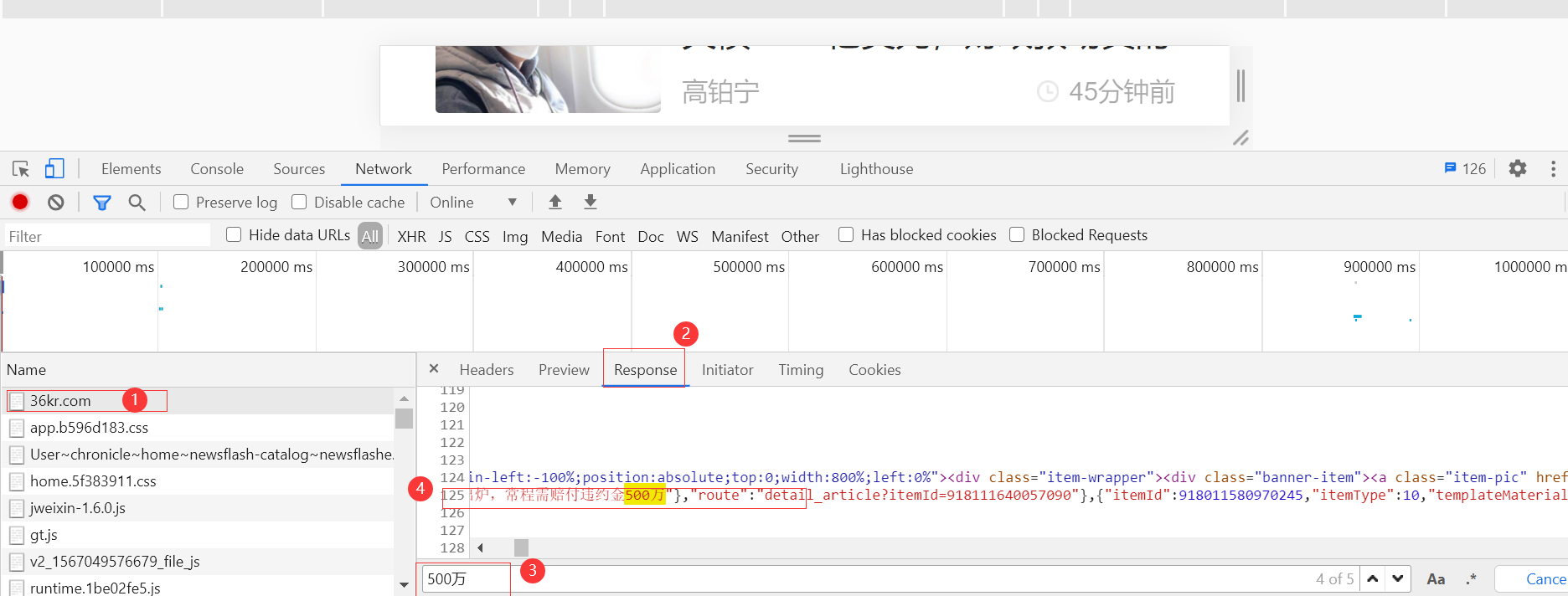

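The listings are loaded dynamically via Ajax, so just open the browser's developer tools and look at the XHR requests to find the endpoint.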
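Once the XHR request shows up in developer tools, you can copy its response body and check the field extraction offline before running the real crawler. This is a minimal sketch assuming the `content.positionResult.result` structure the crawler code reads; `extract_jobs` is a hypothetical helper, and the sample body is invented and trimmed, not a real Lagou response (the site may also have changed its API since this was written).

```python
import json

FIELDS = ['positionName', 'salary', 'workYear', 'education', 'city',
          'industryField', 'companyShortName', 'financeStage']


def extract_jobs(raw: str) -> list:
    """Pull the CSV columns out of a positionAjax.json response body."""
    payload = json.loads(raw)
    result = payload['content']['positionResult']
    # .get() turns a missing field into None instead of raising KeyError
    return [{k: j.get(k) for k in FIELDS} for j in result['result']]


# Hypothetical, trimmed body as it might appear in the XHR "Response" tab
sample = json.dumps({'content': {'positionResult': {'totalCount': 1, 'result': [
    {'positionName': '逆向工程师', 'salary': '15k-25k', 'city': '北京'}]}}})
jobs = extract_jobs(sample)
print(jobs[0]['positionName'], jobs[0]['education'])  # 逆向工程师 None
```

Using `.get()` here is more forgiving than the crawler's `j[k]` lookups, which is handy while you are still working out which fields a response actually contains.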

Beijing Public Network Security Filing No. 11010802041100
