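Building a K8S Cluster

The previous article gave a brief introduction to Kubernetes. This one explains how to build a K8S cluster.

Single master: one master node manages multiple worker nodes.

Multiple masters: several master nodes manage multiple worker nodes, with an extra load-balancing layer in between.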

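Smaller configuration:

master: 2 cores, 4 GB RAM, 20 GB disk

node: 4 cores, 8 GB RAM, 40 GB disk

Larger configuration:

master: 8 cores, 16 GB RAM, 100 GB disk

node: 16 cores, 64 GB RAM, 200 GB disk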

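There are currently two main ways to deploy a Kubernetes cluster in production.

kubeadm: a K8S deployment tool that provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster. It is described in the kubeadm pages of the official Kubernetes documentation.

Binary packages: download the release binaries from GitHub and deploy each component by hand to assemble a Kubernetes cluster.

kubeadm lowers the barrier to deployment but hides many details, which makes problems hard to troubleshoot. If you want more control, deploying from binary packages is recommended. Manual deployment is more work, but you learn how the components work along the way, which also helps with later maintenance.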

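kubeadm is a tool released by the official community for quickly deploying a Kubernetes cluster. It can bring up a cluster with two commands:

# Create a master node
kubeadm init

# Join a node to the current cluster
kubeadm join <master IP and port>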

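Building a K8s cluster with kubeadm consists of the following steps:

Prepare three virtual machines and install CentOS 7.x on them

Initialize the operating system on all three machines

Install docker, kubelet, kubeadm, and kubectl on all three nodes

Run kubeadm init on the master node to initialize the cluster

Run kubeadm join on each worker node to add it to the cluster

Configure a CNI network plugin so that the nodes can reach each other

Deploy nginx as a test and check whether it can be reached from outside the cluster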

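Before starting, the machines for the Kubernetes cluster must meet the following conditions:

One or more machines running CentOS 7.x, with kernel version 4.0 or later

Hardware: at least 2 GB of RAM, 2 CPUs, and 30 GB of disk

Full network connectivity between all machines in the cluster

Internet access for pulling images. If a server cannot reach the Internet, download the images in advance and import them on the node

Swap disabled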
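The CPU, memory, and swap conditions above can be checked on each machine before anything is installed. The following is a minimal sketch and not part of kubeadm: the function names `meets_min` and `swap_is_off` are hypothetical, and the 1700 MB memory threshold is an assumed allowance for machines sold as "2 GB" that report slightly less.

```shell
#!/usr/bin/env bash
# Hypothetical preflight helper; thresholds follow the prerequisites listed above.

# meets_min LABEL ACTUAL REQUIRED: print a verdict, return 0 only if ACTUAL >= REQUIRED.
meets_min() {
  local label=$1 actual=$2 required=$3
  if (( actual >= required )); then
    echo "OK   $label: $actual (need >= $required)"
  else
    echo "FAIL $label: $actual (need >= $required)"
    return 1
  fi
}

# swap_is_off FILE: succeed when a /proc/swaps-style file lists no active swap
# device, i.e. it contains at most its header line.
swap_is_off() {
  local lines
  lines=$(wc -l < "$1")
  (( lines <= 1 ))
}

# Run the live checks only on a Linux host that exposes /proc.
if [[ -r /proc/meminfo && -r /proc/swaps ]]; then
  cpus=$(nproc)
  mem_mb=$(awk '/^MemTotal:/ {print int($2 / 1024)}' /proc/meminfo)
  meets_min "CPU cores" "$cpus" 2 || true
  # Assumed slack: a "2 GB" machine reports somewhat less than 2048 MB.
  meets_min "Memory (MB)" "$mem_mb" 1700 || true
  if swap_is_off /proc/swaps; then
    echo "OK   swap is off"
  else
    echo "FAIL swap is on"
  fi
fi
```

Running it on every machine before the installation catches the NumCPU and Swap preflight errors described in the troubleshooting part of this article.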

| Role | IP |
|---|---|
| Master | 192.168.2.73 |
| Node01 | 192.168.2.71 |
| Node02 | 192.168.2.72 |

Run the following commands on every machine in the cluster.

# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
## Permanently
sed -i 's/enforcing/disabled/' /etc/selinux/config
## Temporarily
setenforce 0

# Disable swap
## Temporarily
swapoff -a
## Permanently
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Set the hostname according to the plan (run each command only on the matching machine)
## Master
hostnamectl set-hostname k8s-master
## Node01
hostnamectl set-hostname k8s-node01
## Node02
hostnamectl set-hostname k8s-node02

# Add hosts entries on the master node
cat >> /etc/hosts << EOF
192.168.2.73 k8s-master
192.168.2.71 k8s-node01
192.168.2.72 k8s-node02
EOF

# Pass bridged IPv4 traffic to the iptables chains
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
# Apply the settings
sysctl --system

# Synchronize the time
yum -y install ntpdate
ntpdate -u ntp1.aliyun.com

# Set the time zone to Asia/Shanghai
timedatectl set-timezone Asia/Shanghai

# Write the current UTC time to the hardware clock
timedatectl set-local-rtc 0

# Restart services that depend on the system time
systemctl restart rsyslog
systemctl restart crond

# Stop services the system does not need
systemctl stop postfix && systemctl disable postfix
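The two `sed` edits in the initialization commands change system files in place, so it can help to rehearse them on throwaway copies first. The sketch below does that in a temporary directory. The file contents are illustrative samples, not taken from a real machine, and the SELinux substitution is an anchored variant (`^SELINUX=`) of the command above, which leaves comment lines that mention "enforcing" untouched.

```shell
#!/usr/bin/env bash
# Rehearse the SELinux and swap edits on scratch files (sample contents are illustrative).
workdir=$(mktemp -d)

cat > "$workdir/selinux-config" << 'EOF'
# SELINUX= can take one of these three values: enforcing, permissive, disabled
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

cat > "$workdir/fstab" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Anchoring on ^SELINUX= changes only the setting itself, not the comment line.
sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' "$workdir/selinux-config"

# Prefix every line that mentions swap with '#'; '&' stands for the matched text.
sed -ri 's/.*swap.*/#&/' "$workdir/fstab"

# Show the result of both edits.
cat "$workdir/selinux-config" "$workdir/fstab"
```

Note that the swap substitution also matches lines that are already commented out, so running it a second time simply prepends another `#`.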

Install Docker, kubeadm, and kubelet on all nodes. Kubernetes 1.18, the version installed here, uses Docker as its default CRI (container runtime), so install Docker first.

First configure the Aliyun yum repository for Docker:

cat > /etc/yum.repos.d/docker.repo << EOF
[docker-ce-edge]
name=Docker CE Edge - \$basearch
baseurl=https://mirrors.aliyun.com/docker-ce/linux/centos/7/\$basearch/edge
enabled=1
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/docker-ce/linux/centos/gpg
EOF

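Install Docker:

# Install with yum
yum -y install docker-ce

# Check the Docker version
docker --version

# Start Docker and enable it at boot
systemctl start docker
systemctl enable docker

Configure a registry mirror for Docker. The file is written with > rather than >>, because appending to an existing daemon.json would produce invalid JSON:

cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
}
EOF

Restart Docker:

systemctl restart docker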

Configure the yum repository for Kubernetes:

# The target file name kubernetes.repo is an assumption; yum reads any .repo file in this directory
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Because new versions are released frequently, pin the version explicitly:

# Install kubelet, kubeadm, and kubectl at a fixed version
yum -y install kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0

# Enable kubelet at boot
systemctl enable kubelet

Run the following on the master node, 192.168.2.73:

kubeadm init --apiserver-advertise-address=192.168.2.73 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.18.0 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16
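The service CIDR and pod CIDR passed to kubeadm init should not overlap with each other or with the network the nodes live on. The following sketch checks this with plain bash arithmetic. The helper names (`ip_to_int`, `cidr_range`, `cidr_overlap`) are hypothetical, and the node network 192.168.2.0/24 is an assumption inferred from the node IPs, since the article does not state the netmask.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for checking that two IPv4 CIDR blocks do not overlap.

# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<< "$1"
  echo "$(( (o[0] << 24) | (o[1] << 16) | (o[2] << 8) | o[3] ))"
}

# cidr_range A.B.C.D/N: print "first last" integer bounds of the block.
cidr_range() {
  local ip=${1%/*} bits=${1#*/}
  local base mask
  base=$(ip_to_int "$ip")
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  echo "$(( base & mask )) $(( (base & mask) | (~mask & 0xFFFFFFFF) ))"
}

# cidr_overlap X Y: succeed if the two blocks share at least one address.
cidr_overlap() {
  local a_lo a_hi b_lo b_hi
  read -r a_lo a_hi <<< "$(cidr_range "$1")"
  read -r b_lo b_hi <<< "$(cidr_range "$2")"
  (( a_lo <= b_hi && b_lo <= a_hi ))
}

node_net=192.168.2.0/24   # assumption: the netmask is not given in the article
for cidr in 10.96.0.0/12 10.244.0.0/16; do
  if cidr_overlap "$node_net" "$cidr"; then
    echo "overlap: $node_net and $cidr"
  else
    echo "ok: $node_net and $cidr do not overlap"
  fi
done
```

The pod CIDR 10.244.0.0/16 also has to match the "Network" value in the flannel configuration used later in this article.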

Following the hints printed by the initialization, set up the kubectl tool:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

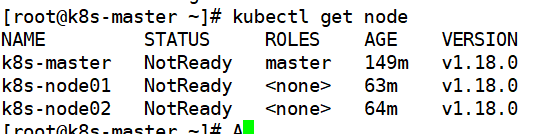

Once that is done, list the nodes:

kubectl get nodes

At this point only the master node is listed, and it is still in the NotReady state.

Next, node01 and node02 have to be added to the k8s cluster. On each of these two nodes, run the kubeadm join command that kubeadm init printed:

## Note: the command below is generated when the master is initialized and is different for everyone. Copy the one you generated yourself.

kubeadm join 192.168.177.130:6443 --token 8j6ui9.gyr4i156u30y80xf --discovery-token-ca-cert-hash sha256:eda1380256a62d8733f4bddf926f148e57cf9d1a3a58fb45dd6e80768af5a500

The default token is valid for 24 hours. Once it expires it can no longer be used, and a new token has to be created:

kubeadm token create --print-join-command
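Because the join command is copied by hand between machines, a quick format check can catch a truncated token or hash before it is run. This is a small sketch with a hypothetical function name, `check_join`. It relies on the documented bootstrap token format (six characters, a dot, sixteen characters, lowercase letters and digits) and on 6443 being the default API server port. It validates the shape only, not whether the token is still valid.

```shell
#!/usr/bin/env bash
# check_join HOST:PORT TOKEN HASH: sanity-check the variable parts of a kubeadm join command.
check_join() {
  local endpoint=$1 token=$2 hash=$3 status=0
  local token_re='^[a-z0-9]{6}\.[a-z0-9]{16}$'
  local hash_re='^sha256:[0-9a-f]{64}$'
  [[ $token =~ $token_re ]] || { echo "malformed token: $token"; status=1; }
  [[ $hash =~ $hash_re ]] || { echo "malformed CA cert hash: $hash"; status=1; }
  # A non-default port is only reported, since the API server port can be changed.
  [[ ${endpoint##*:} == 6443 ]] || echo "note: port ${endpoint##*:} is not the default 6443"
  return "$status"
}

# Values taken from the sample join command in this article.
if check_join 192.168.2.73:6443 8j6ui9.gyr4i156u30y80xf \
  sha256:eda1380256a62d8733f4bddf926f148e57cf9d1a3a58fb45dd6e80768af5a500; then
  echo "join parameters look well-formed"
fi
```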

After both nodes have joined, run the following command on the master node to check:

kubectl get node

The status is still NotReady. A network plugin has to be deployed next so that the nodes can communicate.

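Download the network plugin manifest:

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

If you have no proxy, you can copy the following content into kube-flannel.yml instead: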

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
              - key: kubernetes.io/arch
                operator: In
                values:
                - amd64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.12.0-amd64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
              - key: kubernetes.io/arch
                operator: In
                values:
                - arm64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.12.0-arm64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-arm64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
              - key: kubernetes.io/arch
                operator: In
                values:
                - arm
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.12.0-arm
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-arm
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
              - key: kubernetes.io/arch
                operator: In
                values:
                - ppc64le
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.12.0-ppc64le
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-ppc64le
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
              - key: kubernetes.io/arch
                operator: In
                values:
                - s390x
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.12.0-s390x
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.12.0-s390x
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

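# Apply the manifest
kubectl apply -f kube-flannel.yml

## 1. First download the v0.12.1-rc2-amd64 image.
## 2. Import the image. All three nodes need it.
docker load -i flannel.tar

## After downloading the manifest, replace image: quay.io/coreos/flannel:v0.12.1-rc2 with image: quay.io/coreos/flannel:v0.12.1-rc2-amd64

## Check the status (kube-system is the namespace that holds the cluster's system components)
kubectl get pod -n kube-system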

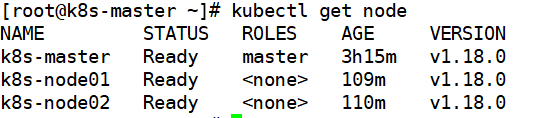

Once this has finished, checking the status again shows that the nodes are now Ready.

If a node is still in the NotReady state after the steps above, it can be deleted on the master node and joined again:

# On the master node, delete the node
kubectl delete node k8s-node01

# Then reset on k8s-node01
kubeadm reset

# After the reset, join again
kubeadm join 192.168.2.73:6443 --token jkcz0t.3c40t0bqqz5g8wsb --discovery-token-ca-cert-hash sha256:eda1380256a62d8733f4bddf926f148e57cf9d1a3a58fb45dd6e80768af5a500

As we know, K8s is a container technology: it can pull images over the network and start them as containers.

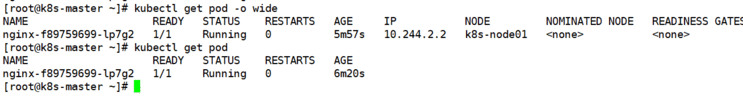

Create a pod in the Kubernetes cluster to verify that it runs correctly:

# Create a deployment that uses the nginx image
kubectl create deployment nginx --image=nginx

# Check the status
kubectl get pod

If the pod status is Running, it has started successfully.

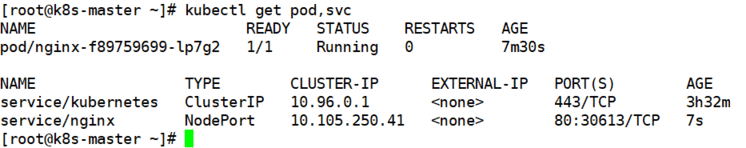

Next, expose the port so that it can be reached from outside:

# Expose the port
kubectl expose deployment nginx --port=80 --type=NodePort

# Look up the external port
kubectl get pod,svc

Test in a browser:

http://192.168.2.73:30613
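The port in that URL comes from the PORT(S) column that kubectl get svc prints for a NodePort service, in the form port:nodePort/protocol. As a small sketch, the hypothetical helper `node_port` below extracts the node port from such a value. The sample string uses the port from this article, and on your cluster the number will differ.

```shell
#!/usr/bin/env bash
# node_port PORTS: given the PORT(S) value of a NodePort service (e.g. "80:30613/TCP"),
# print the port that is opened on every node.
node_port() {
  local ports=$1
  local after_colon=${ports#*:}   # drop the service port and the colon
  echo "${after_colon%%/*}"       # drop the protocol suffix
}

master_ip=192.168.2.73            # master address used in this article
port=$(node_port "80:30613/TCP")
echo "http://${master_ip}:${port}"

# On a live cluster the same value can be read directly:
#   kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'
```

Because the service type is NodePort, the same port is open on every node, so the worker node IPs can be used in the URL as well.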

OK! At this point we have finished building a single-master k8s cluster with kubeadm.

The following error can appear when running kubeadm init:

error execution phase preflight: [preflight] Some fatal errors occurred:
[ERROR NumCPU]: the number of available CPUs 1 is less than the required 2

The cause is that the VMware virtual machine was configured with 1 core, while K8s requires at least 2. Increase the number of cores and reboot the system.

When running kubeadm join on node1, the following error appears:

error execution phase preflight: [preflight] Some fatal errors occurred:
[ERROR Swap]: running with swap on is not supported. Please disable swap

The cause is that swap has to be turned off:

# Disable swap
# Temporarily
swapoff -a
# Permanently
sed -ri 's/.*swap.*/#&/' /etc/fstab

When running kubeadm join on a node, the following error appears:

The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get http://localhost:10248/healthz: dial tcp [::1]:10248: connect: connection refused

To fix it, first go to the master node and create a file:

# Create the directory
mkdir /etc/systemd/system/kubelet.service.d

# Create the file
vim /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

# Add the following content
Environment="KUBELET_SYSTEM_PODS_ARGS=--pod-manifest-path=/etc/kubernetes/manifests --allow-privileged=true --fail-swap-on=false"

# Reset
kubeadm reset

Then delete the configuration directory created earlier:

rm -rf $HOME/.kube

Re-initialize on the master node:

kubeadm init --apiserver-advertise-address=192.168.2.73 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.18.0 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16

After the initialization, go back to the node and run kubeadm join to add it to the cluster. When that is done, use

kubectl get node

to check whether the node was added successfully.

When listing the nodes, kubectl get node can fail with the following error:

Unable to connect to the server: x509: certificate signed by unknown authority (possibly because of "crypto/rsa: verification error" while trying to verify candidate authority certificate "kubernetes")

This happens because the configuration created earlier still exists, namely the one set up by these commands:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

To fix it, first delete the configuration:

rm -rf $HOME/.kube

Then run the commands again:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

The root cause is that $HOME/.kube was not removed when kubeadm reset was run, so the stale file causes problems when the cluster is created again.

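During installation, the following message can appear:

Another app is currently holding the yum lock; waiting for it to exit…

yum is locked by another yum process. Wait for that process to finish (or, if the lock is stale, remove /var/run/yum.pid), then run the installation again:

yum -y install docker-ce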

When running kubeadm join on a node, the following appears:

[root@k8smaster ~]# kubeadm join 192.168.2.73:6443 --token jkcz0t.3c40t0bqqz5g8wsb --discovery-token-ca-cert-hash sha256:bc494eeab6b7bac64c0861da16084504626e5a95ba7ede7b9c2dc7571ca4c9e5
W1117 06:55:11.220907 11230 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.
[preflight] Running pre-flight checks
[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
error execution phase preflight: [preflight] Some fatal errors occurred:
[ERROR FileContent--proc-sys-net-ipv4-ip_forward]: /proc/sys/net/ipv4/ip_forward contents are not set to 1
[preflight] If you know what you are doing, you can make a check non-fatal with --ignore-preflight-errors=...
To see the stack trace of this error execute with --v=5 or higher

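For security reasons, Linux disables packet forwarding by default. Forwarding means that when a host has more than one network interface and one of them receives a packet, the host passes the packet to another interface according to the destination IP address, and that interface sends it on according to the routing table. This is normally what a router does. In other words, the value in /proc/sys/net/ipv4/ip_forward currently does not allow forwarding:

0: forwarding disabled

1: forwarding enabled

So the value only needs to be changed to 1:

echo "1" > /proc/sys/net/ipv4/ip_forward

After the change, run the join command again.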
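Writing to /proc changes only the running kernel, so the value is lost at the next reboot unless it is also set in a sysctl configuration file such as the /etc/sysctl.d/k8s.conf created during system initialization. The sketch below checks both places. The function names `forwarding_enabled` and `has_persistent_setting` are hypothetical.

```shell
#!/usr/bin/env bash
# forwarding_enabled FILE: succeed when FILE (normally /proc/sys/net/ipv4/ip_forward) contains 1.
forwarding_enabled() {
  local value
  value=$(tr -d '[:space:]' < "$1")
  [[ $value == 1 ]]
}

# has_persistent_setting FILE: succeed when a sysctl config file sets
# net.ipv4.ip_forward to 1, with or without spaces around '='.
has_persistent_setting() {
  grep -Eq '^[[:space:]]*net\.ipv4\.ip_forward[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$1"
}

proc_file=/proc/sys/net/ipv4/ip_forward
conf_file=/etc/sysctl.d/k8s.conf   # file written in the initialization step of this article

if [[ -r $proc_file ]]; then
  if forwarding_enabled "$proc_file"; then
    echo "runtime: forwarding is on"
  else
    echo "runtime: forwarding is off"
  fi
fi

if [[ -r $conf_file ]]; then
  if has_persistent_setting "$conf_file"; then
    echo "persistent: set in $conf_file"
  else
    echo "persistent: not set in $conf_file"
  fi
fi
```

If the runtime value is on but the persistent setting is missing, the join will work now and the same error can come back after a reboot.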

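If the Kubernetes master node is rebooted without first stopping the Kubernetes services, it may be unable to retrieve cluster information after startup, and commands fail:

kubectl get node

The connection to the server <master>:6443 was refused - did you specify the right host or port?

Troubleshooting steps:

1 Check the environment variables first

env | grep -i kube

## If the environment variable is the problem, point KUBECONFIG at one of the following files

export KUBECONFIG=/etc/kubernetes/admin.conf

export KUBECONFIG=$HOME/.kube/config

2 Check whether the docker service is running normally

systemctl status docker.service

3 Check whether the kubelet service is running normally

systemctl status kubelet.service

4 Check whether the port is being listened on

netstat -tlunp | grep 6443

5 Check the firewall status

systemctl status firewalld.service

6 Check the logs

journalctl -xeu kubelet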
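The port check in step 4 runs on the master itself. To test from another machine whether anything answers on the API server port, bash's built-in /dev/tcp redirection can be used without installing extra tools. This is a minimal sketch: the function name `port_open` and the 2-second timeout are arbitrary choices, and the address is the master IP used in this article.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection can be opened within 2 seconds.
# Relies on bash's /dev/tcp pseudo-device and on timeout from coreutils.
port_open() {
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

api_host=192.168.2.73   # master address used in this article
if port_open "$api_host" 6443; then
  echo "something is listening on $api_host:6443"
else
  echo "nothing answers on $api_host:6443 (is kube-apiserver running, is a firewall in the way?)"
fi
```

A refused or timed-out connection here matches the "connection to the server ... was refused" message above and points back to steps 2, 3, and 5.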

OK! That's it for this article. Comments and suggestions are welcome, and thanks for reading!

京公网安备 11010802041100号 | 京ICP备19059560号-4 | PHP1.CN 第一PHP社区 版权所有
