What is Hadoop?

==========

Hadoop is an open-source, Java-based framework for storing and processing extremely large data sets in a distributed computing environment. It is an Apache project sponsored by the Apache Software Foundation.

What technology inspired the invention of Hadoop?

===========

Two Google papers:

- MapReduce

- Google File System

At the architecture level, what are the key components of Hadoop?

===========

HDFS (Hadoop Distributed File System) – stores data across thousands of commodity servers and provides high aggregate bandwidth across the cluster.

MapReduce – provides the programming model for large-scale distributed data processing: mapping the input data and reducing it to a result.

YARN (Yet Another Resource Negotiator) – provides resource management and scheduling for user applications.

What is HDFS?

===========

HDFS is a Java-based file system that provides scalable, fault-tolerant, cost-efficient storage for big data. It was designed to span large clusters of commodity servers. HDFS has demonstrated production scalability of up to 200 PB of storage and a single cluster of 4500 servers, supporting close to a billion files and blocks.

Describe the HDFS architecture, please.

===========

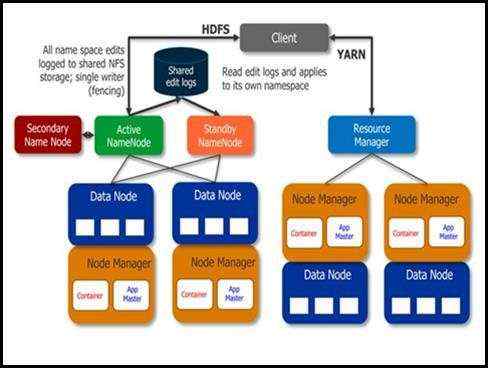

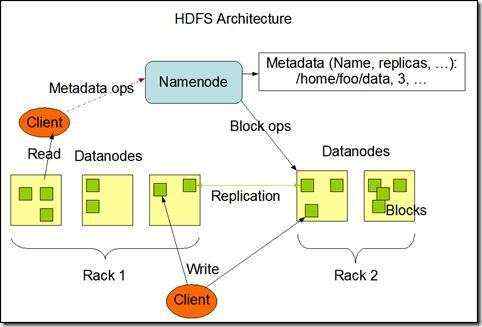

HDFS has a master/slave architecture.

An HDFS cluster consists of a single NameNode, a master server that manages the file system namespace and regulates access to files by clients.

In addition, there are a number of DataNodes, usually one per node in the cluster, which manage storage attached to the nodes that they run on.

HDFS exposes a file system namespace and allows user data to be stored in files.

Internally, a file is split into one or more blocks and these blocks are stored in a set of DataNodes.

- The NameNode executes file system namespace operations like opening, closing, and renaming files and directories. It also determines the mapping of blocks to DataNodes.

- The DataNodes are responsible for serving read and write requests from the file system’s clients. The DataNodes also perform block creation, deletion, and replication upon instruction from the NameNode.
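The division of labor above can be illustrated with a toy in-memory model. This is only a sketch and not the HDFS API: the class names mirror the roles, but every method (`allocate_block`, `write_block`, `write_file`, `read_file`) and the round-robin target choice are hypothetical simplifications.

```python
from dataclasses import dataclass, field


@dataclass
class DataNode:
    """Stores block contents, keyed by block id."""
    name: str
    blocks: dict = field(default_factory=dict)

    def write_block(self, block_id: int, data: bytes) -> None:
        self.blocks[block_id] = data

    def read_block(self, block_id: int) -> bytes:
        return self.blocks[block_id]


@dataclass
class NameNode:
    """Holds metadata only: the namespace and the block-to-DataNode mapping."""
    namespace: dict = field(default_factory=dict)        # path -> [block ids]
    block_locations: dict = field(default_factory=dict)  # block id -> [DataNode names]
    next_block_id: int = 0

    def allocate_block(self, path: str, targets: list) -> int:
        block_id = self.next_block_id
        self.next_block_id += 1
        self.namespace.setdefault(path, []).append(block_id)
        self.block_locations[block_id] = list(targets)
        return block_id

    def rename(self, old: str, new: str) -> None:
        # A pure namespace operation: no block data moves.
        self.namespace[new] = self.namespace.pop(old)


def write_file(nn, datanodes, path, data, block_size=4, replication=2):
    """Split data into blocks and store each block on `replication` DataNodes."""
    names = sorted(datanodes)
    for i, offset in enumerate(range(0, len(data), block_size)):
        # Toy placement (assumption): rotate through the DataNodes.
        targets = [names[(i + r) % len(names)] for r in range(replication)]
        block_id = nn.allocate_block(path, targets)
        for target in targets:
            datanodes[target].write_block(block_id, data[offset:offset + block_size])


def read_file(nn, datanodes, path):
    """Ask the NameNode where the blocks live, then read them from DataNodes."""
    out = b""
    for block_id in nn.namespace[path]:
        first_holder = nn.block_locations[block_id][0]
        out += datanodes[first_holder].read_block(block_id)
    return out
```

Note that `rename` touches only the NameNode's dictionary, which is the point of keeping metadata and block data on separate servers.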

How is a file stored in HDFS?

===========

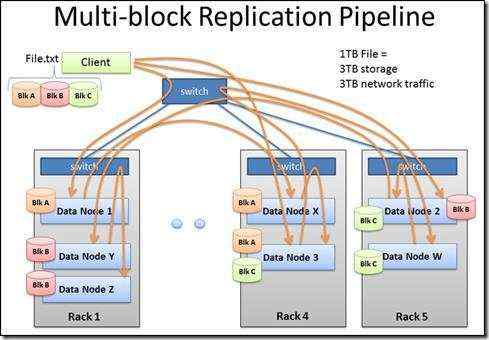

A file is split into fixed-size blocks (64 MB by default in Hadoop 1.x, 128 MB in Hadoop 2.x and later); only the last block may be smaller. These blocks are stored on DataNodes.
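As a rough illustration of the splitting step, the sketch below computes block boundaries for a file of a given size. It assumes a fixed block size of 64 MB with a shorter final block; the function name `split_into_blocks` is hypothetical and not part of Hadoop.

```python
MB = 1024 * 1024


def split_into_blocks(file_size: int, block_size: int = 64 * MB) -> list:
    """Return an (offset, length) pair for each block of a file of `file_size` bytes."""
    blocks = []
    offset = 0
    while offset < file_size:
        # Every block is full-sized except possibly the last one.
        length = min(block_size, file_size - offset)
        blocks.append((offset, length))
        offset += length
    return blocks
```

For example, a 200 MB file yields three 64 MB blocks followed by one 8 MB block.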

Block placement strategy:

- First replica on the local node (the node the writer runs on).

- Second replica on a node in a remote rack.

- Third replica on a different node in the same remote rack.

- Additional replicas are placed randomly.
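The placement rules above can be sketched as a small function. This is an illustration under stated assumptions, not Hadoop's placement code: the `topology` dictionary, the function name `choose_replica_nodes`, and the requirement that the writer is a cluster node, that there are at least two racks, and that each rack has at least two nodes are all hypothetical.

```python
import random


def choose_replica_nodes(writer, topology, replication=3, rng=None):
    """Pick nodes for one block.

    topology: dict mapping rack name -> list of node names.
    writer:   name of the cluster node the client runs on.
    """
    if rng is None:
        rng = random.Random()
    rack_of = {node: rack for rack, nodes in topology.items() for node in nodes}

    chosen = [writer]  # first replica: the local node
    if replication >= 2:
        # Second replica: any node on a rack other than the writer's.
        remote_racks = [r for r in topology if r != rack_of[writer]]
        remote_rack = rng.choice(remote_racks)
        second = rng.choice(topology[remote_rack])
        chosen.append(second)
    if replication >= 3:
        # Third replica: a different node on that same remote rack.
        candidates = [n for n in topology[remote_rack] if n != second]
        chosen.append(rng.choice(candidates))

    # Any further replicas: random nodes not used yet.
    remaining = [n for n in rack_of if n not in chosen]
    rng.shuffle(remaining)
    chosen.extend(remaining[:max(0, replication - len(chosen))])
    return chosen[:replication]
```

With three replicas, the block survives the loss of an entire rack while only one copy has to cross between racks during the write.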

How much storage and network traffic does it take to store a 1 TB file in HDFS?

===========

With the default replication factor of 3, a 1 TB file requires:

- 3 TB storage

- 3 TB network traffic
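The arithmetic behind these figures can be written out as a short sketch. It assumes a replication factor of 3 and a client outside the cluster, so every replica crosses the network once. Treating the first replica as free of network cost when the client runs on a DataNode is an assumption of this sketch; the function name `hdfs_write_cost` is hypothetical.

```python
def hdfs_write_cost(file_tb: float, replication: int = 3, client_on_datanode: bool = False):
    """Return (storage_tb, network_tb) needed to write a file of `file_tb` terabytes."""
    # Every replica occupies the full file size on disk.
    storage_tb = file_tb * replication
    # Each replica travels one network hop, unless the first one
    # is written locally (assumption: a local write costs no network traffic).
    network_hops = replication - 1 if client_on_datanode else replication
    network_tb = file_tb * network_hops
    return storage_tb, network_tb
```

Under these assumptions, `hdfs_write_cost(1.0)` gives 3 TB of storage and 3 TB of traffic, while a writer running on a DataNode would generate 2 TB of traffic for the same 3 TB of storage.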

How is filesystem metadata stored in Hadoop?

===========

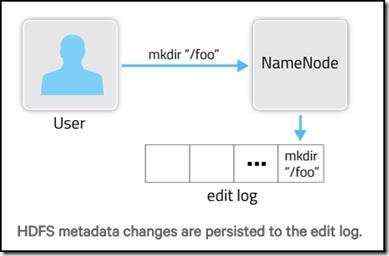

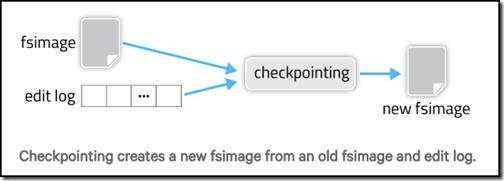

The filesystem metadata is stored in two different constructs: the fsimage and the edit log.

The fsimage is a file that represents a point-in-time snapshot of the filesystem’s metadata.

Rather than writing a new fsimage every time the namespace is modified, the NameNode instead records the modifying operation in the edit log for durability.

This way, if the NameNode crashes, it can restore its state by first loading the fsimage then replaying all the operations (also called edits or transactions) in the edit log to catch up to the most recent state of the namesystem. The edit log comprises a series of files, called edit log segments, that together represent all the namesystem modifications made since the creation of the fsimage.

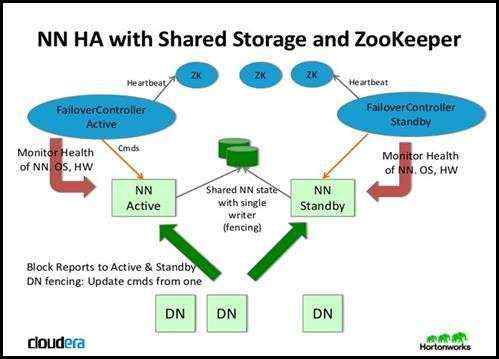

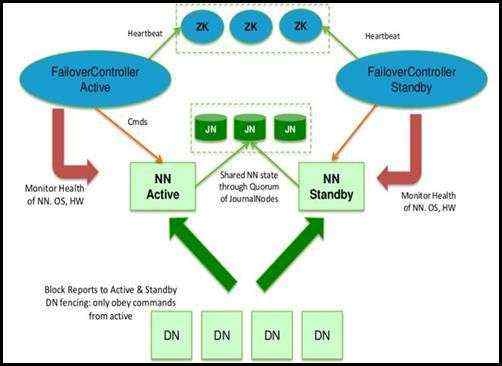
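The recovery procedure described above (load the fsimage, then replay the edit log segments in order) can be sketched as follows. The in-memory namespace layout and the four operation names are hypothetical; the real fsimage and edit log use Hadoop's own on-disk formats.

```python
import copy


def apply_edit(namespace: dict, edit: tuple) -> None:
    """Apply one logged operation to the in-memory namespace."""
    op = edit[0]
    if op == "create":
        namespace[edit[1]] = {"blocks": []}
    elif op == "add_block":
        namespace[edit[1]]["blocks"].append(edit[2])
    elif op == "rename":
        namespace[edit[2]] = namespace.pop(edit[1])
    elif op == "delete":
        del namespace[edit[1]]
    else:
        raise ValueError(f"unknown edit: {op}")


def recover(fsimage: dict, edit_segments: list) -> dict:
    """Rebuild the latest namespace from a snapshot plus ordered edit log segments."""
    namespace = copy.deepcopy(fsimage)  # start from the point-in-time snapshot
    for segment in edit_segments:       # segments are replayed oldest first
        for edit in segment:
            apply_edit(namespace, edit)
    return namespace
```

Appending a small record per change is cheap, while rewriting the whole snapshot on every change would not be; the cost is that recovery time grows with the number of edits since the last fsimage.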

What is ZooKeeper and how does it fit into Hadoop?

===========

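ZooKeeper is a centralized service for maintaining configuration information, naming, providing distributed synchronization, and providing group services. Within Hadoop, it is typically used for coordination tasks such as automatic NameNode failover in HDFS high-availability deployments.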

What is Hadoop MapReduce?

===========

Hadoop MapReduce is a software framework for easily writing applications that process vast amounts of data (multi-terabyte data sets) in parallel on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.

A MapReduce job usually splits the input data set into independent chunks, which are processed by the map tasks in a completely parallel manner. The framework sorts the outputs of the maps, which are then input to the reduce tasks. Typically both the input and the output of the job are stored in a file system. The framework takes care of scheduling tasks, monitoring them, and re-executing the failed tasks.
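The map, sort, and reduce flow just described can be illustrated with a single-process word count. This is plain Python rather than the Hadoop API; the function names are hypothetical, and a real job would run the map and reduce tasks on different nodes.

```python
from collections import defaultdict


def map_phase(chunk: str):
    """Map task: emit a (word, 1) pair for every word in one input chunk."""
    for word in chunk.split():
        yield word.lower(), 1


def shuffle_and_sort(mapped_pairs):
    """Framework step: group values by key and sort the keys."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reduce_phase(key, values):
    """Reduce task: collapse all values for one key into a single result."""
    return key, sum(values)


def run_job(chunks):
    """Run map over each chunk independently, then sort and reduce."""
    mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
    return [reduce_phase(key, values) for key, values in shuffle_and_sort(mapped)]
```

Because each chunk is mapped without reference to any other chunk, the map calls could run on separate machines; only the grouping step needs to bring pairs with the same key together.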

Typically the compute nodes and the storage nodes are the same; that is, the MapReduce framework and the Hadoop Distributed File System run on the same set of nodes. This configuration allows the framework to schedule tasks on the nodes where the data is already present, resulting in very high aggregate bandwidth across the cluster.
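A minimal sketch of locality-aware scheduling follows. It assumes a greedy policy (prefer a node that already holds the block, otherwise fall back to any node with a free slot), which is a simplification and not Hadoop's actual scheduler; `assign_tasks` and its arguments are hypothetical.

```python
def assign_tasks(block_locations: dict, free_slots: dict) -> dict:
    """Assign one map task per block.

    block_locations: block id -> list of nodes holding a replica.
    free_slots:      node -> number of free task slots.
    Returns block id -> (node, is_data_local).
    """
    slots = dict(free_slots)  # work on a copy so the caller's dict is untouched
    assignment = {}
    for block, holders in block_locations.items():
        local = [n for n in holders if slots.get(n, 0) > 0]
        if local:
            node, is_local = local[0], True   # data is already on this node
        else:
            candidates = [n for n, free in slots.items() if free > 0]
            if not candidates:
                break                          # no capacity left; remaining tasks wait
            node, is_local = candidates[0], False  # block must be read over the network
        slots[node] -= 1
        assignment[block] = (node, is_local)
    return assignment
```

Every task that lands on a node holding its block reads from local disk, which is where the aggregate bandwidth benefit comes from.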

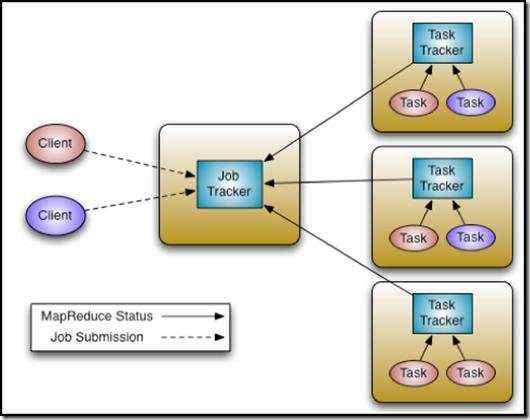

In Hadoop 1.x, the MapReduce framework consists of a single master JobTracker and one slave TaskTracker per cluster node. The master is responsible for scheduling the jobs' component tasks on the slaves, monitoring them, and re-executing the failed tasks. The slaves execute the tasks as directed by the master.

Minimally, applications specify the input/output locations and supply map and reduce functions via implementations of appropriate interfaces and/or abstract-classes. These, and other job parameters, comprise the job configuration. The Hadoop job client then submits the job (jar/executable etc.) and configuration to the JobTracker which then assumes the responsibility of distributing the software/configuration to the slaves, scheduling tasks and monitoring them, providing status and diagnostic information to the job-client.

What is YARN?

===========

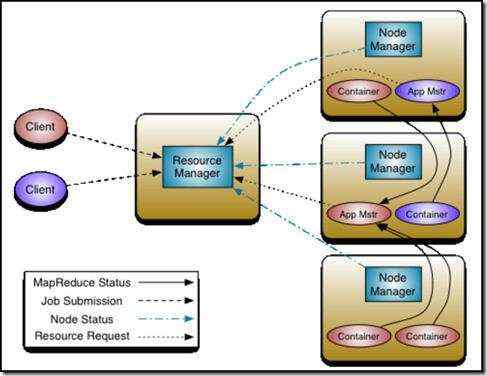

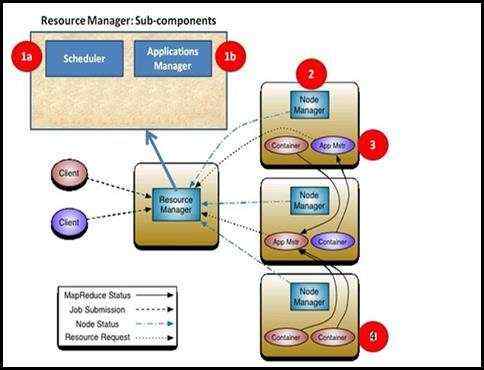

The fundamental idea of YARN is to split up the functionalities of resource management and job scheduling/monitoring into separate daemons. The idea is to have a global ResourceManager (RM) and per-application ApplicationMaster (AM). An application is either a single job or a DAG of jobs.

The ResourceManager and the NodeManager form the data-computation framework. The ResourceManager is the ultimate authority that arbitrates resources among all the applications in the system. The NodeManager is the per-machine framework agent that is responsible for containers, monitoring their resource usage (CPU, memory, disk, network) and reporting the same to the ResourceManager/Scheduler.

The per-application ApplicationMaster is, in effect, a framework-specific library and is tasked with negotiating resources from the ResourceManager and working with the NodeManager(s) to execute and monitor the tasks.
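The three roles can be illustrated with a toy model that tracks memory only. This is a sketch under heavy simplification, not the YARN API: the methods `reserve`, `allocate`, and `request_containers` are hypothetical, and a first-fit policy stands in for the real scheduler.

```python
from dataclasses import dataclass


@dataclass
class NodeManager:
    """Per-machine agent: tracks the memory handed out to containers on its node."""
    name: str
    memory_mb: int
    used_mb: int = 0

    def free_mb(self) -> int:
        return self.memory_mb - self.used_mb

    def reserve(self, memory_mb: int) -> None:
        self.used_mb += memory_mb


class ResourceManager:
    """Global arbiter: decides which node, if any, can host a requested container."""

    def __init__(self, node_managers):
        self.node_managers = list(node_managers)

    def allocate(self, app_id: str, memory_mb: int):
        # First-fit policy (assumption): take the first node with enough free memory.
        for nm in self.node_managers:
            if nm.free_mb() >= memory_mb:
                nm.reserve(memory_mb)
                return {"app": app_id, "node": nm.name, "memory_mb": memory_mb}
        return None  # nothing fits right now


class ApplicationMaster:
    """Per-application: negotiates one container per task from the ResourceManager."""

    def __init__(self, app_id: str, rm: ResourceManager):
        self.app_id = app_id
        self.rm = rm

    def request_containers(self, task_memory_mb):
        granted, pending = [], []
        for memory_mb in task_memory_mb:
            container = self.rm.allocate(self.app_id, memory_mb)
            if container is None:
                pending.append(memory_mb)  # would be retried once resources free up
            else:
                granted.append(container)
        return granted, pending
```

The ResourceManager here knows nothing about what the tasks do; all application-specific logic sits in the ApplicationMaster, which is the separation the text describes.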

Put everything above together?

==============