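Today I'm sharing an original piece by Xiaoming: exploring the martial-arts world (jianghu) of Jin Yong's novels with Python.

Reading novels with Python gives you entertainment and practice at the same time.

The topics covered are:

A general approach to scraping novel websites

Basic data wrangling with pandas

Practical use of lxml and XPath

Regular-expression pattern matching

Word-frequency counting with Counter

Data visualization with pyecharts

Word clouds with stylecloud

Using gensim.models.Word2Vec

Hierarchical clustering with scipy.cluster.hierarchy

The article moves from traditional string-matching analysis to machine-learning word vectors, so the texts are analysed from several angles.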

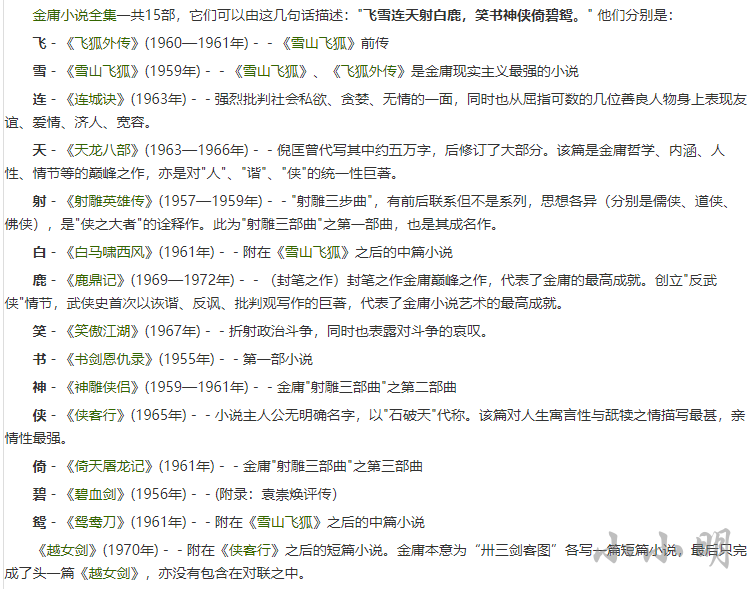

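There used to be many websites hosting Jin Yong's novels. Most of them are no longer reachable, but because the novels still have many fans, new sites keep appearing. The one I recently found through Baidu that still works is (Base64-encoded): aHR0cDovL2ppbnlvbmcxMjMuY29tLw==

Data source download: https://gitcode.net/as604049322/blog_data

First we fetch the title, creation year, and link of each of the 15 works. The developer tools show that every row contains many a tags; what distinguishes the ones we need is that the text immediately following them is a creation-date string: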

import base64
import re

import pandas as pd
import requests
from lxml import etree

# The site address is given above in Base64 form; decode it to obtain base_url
base_url = base64.b64decode("aHR0cDovL2ppbnlvbmcxMjMuY29tLw==").decode()

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/86.0.4240.198 Safari/537.36",
    "Accept-Language": "zh-CN,zh;q=0.9",
}
res = requests.get(base_url, headers=headers)
res.encoding = res.apparent_encoding
html = etree.HTML(res.text)
a_tags = html.xpath("//div[@class='jianjie']/p/a")
data = []
for a_tag in a_tags:
    # The creation year is the text right after the link, e.g. "(1957—1959年)";
    # tail is None when nothing follows the tag
    m_obj = re.search(r"\((\d{4}(?:—\d{4})?年)\)", a_tag.tail or "")
    if m_obj:
        data.append((a_tag.text, m_obj.group(1), a_tag.attrib["href"]))
data = pd.DataFrame(data, columns=["名称", "创作时间", "网址"])
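The regular expression does the real filtering in this step, so it can help to try it in isolation. The following is a minimal sketch that uses the same pattern on made-up tail strings; the sample strings and the helper name `extract_year` are illustrative assumptions, not data from the site.

```python
import re

# Same pattern as in the scraping code: a 4-digit year, optionally followed
# by an em dash and a second year, then "年", all inside ASCII parentheses.
YEAR_PATTERN = re.compile(r"\((\d{4}(?:—\d{4})?年)\)")


def extract_year(tail):
    """Return the creation-year string found in a link's tail text, or None."""
    match = YEAR_PATTERN.search(tail or "")
    return match.group(1) if match else None


# Hypothetical tail strings, for illustration only
samples = ["(1957—1959年)", "(1955年),首部作品", "新修版", None]
results = [extract_year(s) for s in samples]
print(results)
```

Links whose tail text does not contain a year in parentheses yield `None`, which is how the loop skips the many unrelated a tags on the page.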

We can sort by creation date and take a look:

data.sort_values("创作时间", ignore_index=True, inplace=True)

data

| 名称 | 创作时间 | 网址 |
|---|---|---|
| 书剑恩仇录 | 1955年 | /shujianenchoulu/ |
| 碧血剑 | 1956年 | /bixuejian/ |
| 射雕英雄传 | 1957—1959年 | /shediaoyingxiongzhuan/ |
| 神雕侠侣 | 1959—1961年 | /shendiaoxialv/ |
| 雪山飞狐 | 1959年 | /xueshanfeihu/ |
| 飞狐外传 | 1960—1961年 | /feihuwaizhuan/ |
| 白马啸西风 | 1961年 | /baimaxiaoxifeng/ |
| 倚天屠龙记 | 1961年 | /yitiantulongji/ |
| 鸳鸯刀 | 1961年 | /yuanyangdao/ |
| 天龙八部 | 1963—1966年 | /tianlongbabu/ |
| 连城诀 | 1963年 | /lianchengjue/ |
| 侠客行 | 1965年 | /xiakexing/ |
| 笑傲江湖 | 1967年 | /xiaoaojianghu/ |
| 鹿鼎记 | 1969—1972年 | /ludingji/ |
| 越女剑 | 1970年 | /yuenvjian/ |

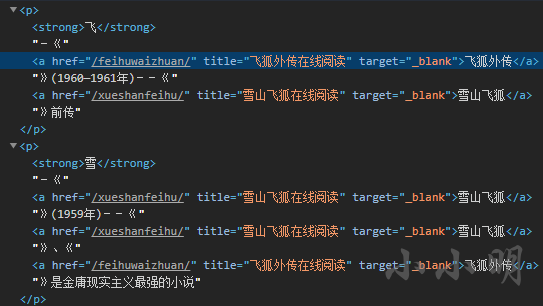

Next, look at how the nodes are laid out on a chapter-list page, taking 《雪山飞狐》 as an example:

from urllib.parse import urljoin

def getTitleAndUrl(url):
    url = urljoin(base_url, url)
    data = []
    res = requests.get(url, headers=headers)
    res.encoding = res.apparent_encoding
    html = etree.HTML(res.text)
    reverse, last_num = False, None
    for a_tag in html.xpath("//dl[@class='cat_box']/dd/a"):
        data.append([re.sub(r"\s+", " ", a_tag.text), a_tag.attrib["href"]])
        nums = re.findall(r"第(\d+)章", a_tag.text)
        if nums:
            # A chapter number lower than the previous one means the list is in reverse order
            if last_num and int(nums[0]) < last_num:
                reverse = True
            last_num = int(nums[0])
    # Correct the order if the chapters are listed in reverse
    if reverse:
        data.reverse()
    return data

A quick test:

title2url = getTitleAndUrl(data.query("名称=='雪山飞狐'").网址.iat[0])
title2url

[['第01章', '/xueshanfeihu/488.html'],
 ['第02章', '/xueshanfeihu/489.html'],
 ['第03章', '/xueshanfeihu/490.html'],
 ['第04章', '/xueshanfeihu/491.html'],
 ['第05章', '/xueshanfeihu/492.html'],
 ['第06章', '/xueshanfeihu/493.html'],
 ['第07章', '/xueshanfeihu/494.html'],
 ['第08章', '/xueshanfeihu/495.html'],
 ['第09章', '/xueshanfeihu/496.html'],
 ['第10章', '/xueshanfeihu/497.html'],
 ['后记', '/xueshanfeihu/498.html']]

The chapters are now listed in ascending order.
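The order check can be tried without any network access. The sketch below isolates that logic under the assumption that chapter titles look like "第NN章"; the function names `is_reversed` and `normalise` and the sample listing are hypothetical.

```python
import re


def is_reversed(titles):
    """Return True if some numbered chapter is followed by a lower number."""
    last_num = None
    for title in titles:
        nums = re.findall(r"第(\d+)章", title)
        if nums:
            num = int(nums[0])
            if last_num is not None and num < last_num:
                return True
            last_num = num
    return False


def normalise(titles):
    """Return the titles in ascending order, reversing the list if needed."""
    return titles[::-1] if is_reversed(titles) else list(titles)


# Hypothetical listing in which the site shows the newest entry first
listing = ["后记", "第03章", "第02章", "第01章"]
ordered = normalise(listing)
print(ordered)
```

Entries without a chapter number, such as the postscript, are ignored by the check and simply keep their relative position.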

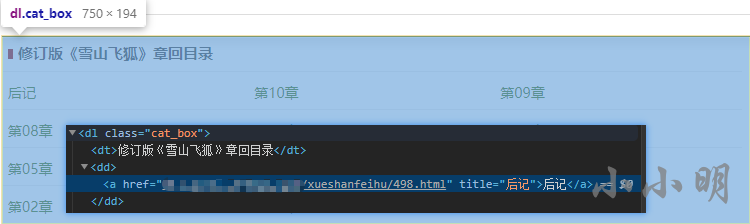

On each chapter's detail page, the last line of text is not part of the chapter, so we drop it:

def download_page_content(url):
    # Chapter links are site-relative, so resolve them against base_url first
    url = urljoin(base_url, url)
    res = requests.get(url, headers=headers)
    res.encoding = res.apparent_encoding
    html = etree.HTML(res.text)
    content = "\n".join(html.xpath("//div[@class='entry']/p/text()")[:-1])
    return content

Now we can download all the novels in one go:

import os

def download_one_novel(filename, url):
    "Download a single novel"
    title2url = getTitleAndUrl(url)
    print("Creating file:", filename)
    for title, url in title2url:
        with open(filename, "a", encoding="u8") as f:
            f.write(title)
            f.write("\n\n")
            print("Downloading:", title)
            content = download_page_content(url)
            f.write(content)
            f.write("\n\n")

os.makedirs("novels", exist_ok=True)
for row in data.itertuples():
    filename = f"novels/{row.名称}.txt"
    # The file is opened in append mode, so remove any copy left by an earlier run
    if os.path.exists(filename):
        os.remove(filename)
    download_one_novel(filename, row.网址)

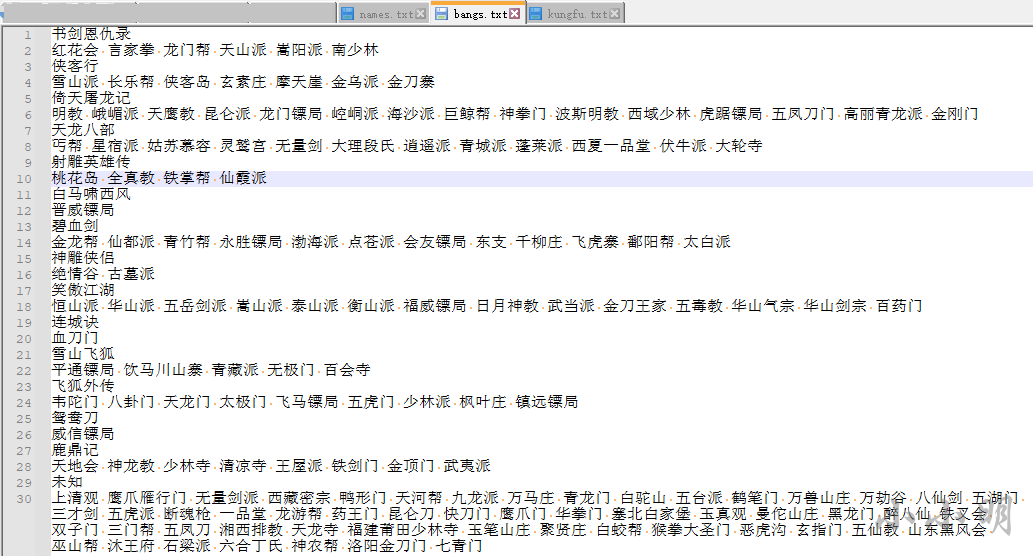

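To analyse the novels further we also need lists of their characters, kung fu techniques, and sects. I could not find a site that still serves this data, so I collected and organised it myself:

Characters:

Novel 1

Character1 Character2 ……

Novel 2

Character1 Character2 ……

Novel 3

Character1 Character2 ……

Kung fu:

Novel 1

KungFu1 KungFu2 ……

Novel 2

KungFu1 KungFu2 ……

Novel 3

KungFu1 KungFu2 ……

Data source download: https://gitcode.net/as604049322/blog_data

Define a function that loads a novel:

def load_novel(novel):
    with open(f'novels/{novel}.txt', encoding="u8") as f:
        return f.read()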

First we load the character data:

with open('data/names.txt', encoding="utf-8") as f:
    data = [line.rstrip() for line in f]
# Even lines hold novel titles, odd lines hold the space-separated names
novels = data[::2]
names = data[1::2]
novel_names = {k: v.split() for k, v in zip(novels, names)}
del novels, names, data
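The same slicing idea works for any file in this alternating layout. As a minimal sketch, the snippet below parses an in-memory sample instead of a real file; the sample content and the function name `parse_alternating` are assumptions made for illustration.

```python
import io

# Hypothetical content in the alternating layout: title line, then entries line
sample = "小说A\n人物1 人物2 人物3\n小说B\n人物4 人物5\n"


def parse_alternating(f):
    """Map each title line to the whitespace-separated entries on the next line."""
    lines = [line.rstrip() for line in f]
    titles, entries = lines[::2], lines[1::2]
    return {title: entry.split() for title, entry in zip(titles, entries)}


novel_to_names = parse_alternating(io.StringIO(sample))
print(novel_to_names)
```

Because the pairing relies purely on line position, a stray blank line in the file would shift every later title, so the data files need to keep the two-line rhythm strictly.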

Here is a preview of the characters in 《天龙八部》:

print(",".join(novel_names['天龙八部'][:20]))

刀白凤,丁春秋,马夫人,马五德,小翠,于光豪,巴天石,不平道人,邓百川,风波恶,甘宝宝,公冶乾,木婉清,包不同,天狼子,太皇太后,王语嫣,乌老大,无崖子,云岛主

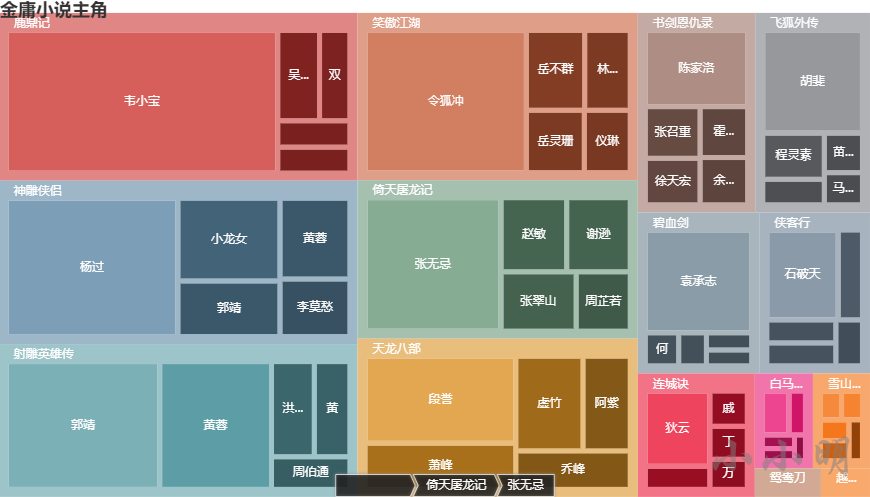

Now let's find the protagonists of each novel by counting how often each character is mentioned; the more mentions, the stronger the "protagonist halo". Here are the ten most frequently mentioned characters in each novel:

from collections import Counter

def find_main_charecters(novel, num=10, content=None):
    if content is None:
        content = load_novel(novel)
    count = Counter()
    for name in novel_names[novel]:
        # str.count gives the number of non-overlapping occurrences of the name
        count[name] = content.count(name)
    return count.most_common(num)

for novel in novel_names:
    print(novel, dict(find_main_charecters(novel, 10)))

书剑恩仇录 {'陈家洛': 2095, '张召重': 760, '徐天宏': 685, '霍青桐': 650, '余鱼同': 605, '文泰来': 601, '骆冰': 594, '周绮': 556, '李沅芷': 521, '陆菲青': 486}

碧血剑 {'袁承志': 3028, '何铁手': 306, '温青': 254, '阿九': 215, '洪胜海': 200, '焦宛儿': 197, '皇太极': 183, '崔秋山': 180, '穆人清': 171, '闵子华': 163}

射雕英雄传 {'郭靖': 5009, '黄蓉': 3650, '洪七公': 1041, '黄药师': 868, '周伯通': 654, '欧阳克': 611, '丘处机': 606, '梅超风': 480, '杨康': 439, '柯镇恶': 431}

神雕侠侣 {'杨过': 5991, '小龙女': 2133, '郭靖': 1431, '黄蓉': 1428, '李莫愁': 1016, '郭芙': 850, '郭襄': 778, '陆无双': 575, '周伯通': 555, '赵志敬': 482}

雪山飞狐 {'胡斐': 230, '曹云奇': 228, '宝树': 225, '苗若兰': 217, '胡一刀': 207, '苗人凤': 129, '刘元鹤': 107, '陶子安': 107, '田青文': 103, '范帮主': 83}

飞狐外传 {'胡斐': 2761, '程灵素': 765, '袁紫衣': 425, '苗人凤': 405, '马春花': 331, '福康安': 287, '赵半山': 287, '田归农': 227, '徐铮': 217, '商宝震': 217}

白马啸西风 {'李文秀': 441, '苏普': 270, '阿曼': 164, '苏鲁克': 147, '陈达海': 106, '车尔库': 99, '李三': 31, '丁同': 29, '霍元龙': 23, '桑斯': 22}

倚天屠龙记 {'张无忌': 4665, '赵敏': 1250, '谢逊': 1211, '张翠山': 1146, '周芷若': 825, '殷素素': 550, '杨逍': 514, '张三丰': 451, '灭绝师太': 431, '小昭': 346}

鸳鸯刀 {'萧中慧': 103, '袁冠南': 82, '卓天雄': 76, '周威信': 74, '林玉龙': 52, '任飞燕': 51, '萧半和': 48, '盖一鸣': 45, '逍遥子': 28, '常长风': 19}

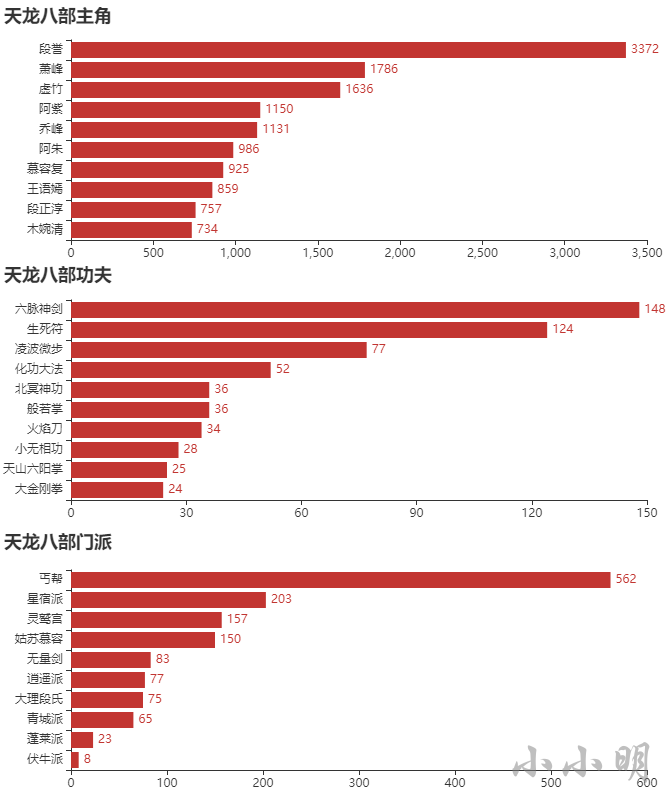

天龙八部 {'段誉': 3372, '萧峰': 1786, '虚竹': 1636, '阿紫': 1150, '乔峰': 1131, '阿朱': 986, '慕容复': 925, '王语嫣': 859, '段正淳': 757, '木婉清': 734}

连城诀 {'狄云': 1433, '水笙': 439, '戚芳': 390, '丁典': 364, '万震山': 332, '万圭': 288, '花铁干': 256, '吴坎': 155, '血刀老祖': 144, '戚长发': 117}

侠客行 {'石破天': 1804, '石清': 611, '丁珰': 446, '白万剑': 446, '丁不四': 343, '谢烟客': 337, '闵柔': 327, '贝海石': 257, '丁不三': 217, '白自在': 199}

笑傲江湖 {'令狐冲': 5838, '岳不群': 1184, '林平之': 926, '岳灵珊': 919, '仪琳': 729, '田伯光': 708, '任我行': 525, '向问天': 513, '左冷禅': 473, '方证': 415}

鹿鼎记 {'韦小宝': 9731, '吴三桂': 949, '双儿': 691, '鳌拜': 479, '陈近南': 472, '方怡': 422, '茅十八': 400, '小桂子': 355, '施琅': 296, '吴应熊': 290}

越女剑 {'范蠡': 121, '阿青': 64, '勾践': 47, '薛烛': 29, '西施': 26, '文种': 23, '风胡子': 7}
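The counting technique itself is just substring counting fed into a `Counter`. The following is a small self-contained sketch on a made-up sentence; the text and the helper name `count_mentions` are hypothetical.

```python
from collections import Counter


def count_mentions(text, names, num=10):
    """Count non-overlapping occurrences of each name and return the top `num`."""
    counts = Counter({name: text.count(name) for name in names})
    return counts.most_common(num)


# Made-up text, for illustration only
text = "郭靖看着黄蓉。黄蓉笑了,郭靖也笑了。郭靖道:蓉儿。"
top = count_mentions(text, ["郭靖", "黄蓉", "洪七公"], num=2)
print(top)
```

Note that only exact names are counted: the nickname "蓉儿" adds nothing to 黄蓉's total. The real output shows the same effect, with 萧峰 and 乔峰 (and 韦小宝 and 小桂子) counted as separate entries although each pair is one person.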

The text output above lists the ten most-mentioned characters of each novel, but it is not very intuitive. Below I show the top five of each novel in a pyecharts treemap:

from pyecharts import options as opts
from pyecharts.charts import TreeMap

data = []
for novel in novel_names:
    tmp = []
    data.append({"name": novel, "children": tmp})
    for name, count in find_main_charecters(novel, 5):
        tmp.append({"name": name, "value": count})

c = (
    TreeMap()
    .add("", data, levels=[
        opts.TreeMapLevelsOpts(),
        opts.TreeMapLevelsOpts(
            color_saturation=[0.3, 0.6],
            treemap_itemstyle_opts=opts.TreeMapItemStyleOpts(
                border_color_saturation=0.7, gap_width=5, border_width=10),
            upper_label_opts=opts.LabelOpts(
                is_show=True, position='insideTopLeft', vertical_align='top'),
        ),
    ])
    .set_global_opts(title_opts=opts.TitleOpts(title="金庸小说主角"))
)
c.render_notebook()

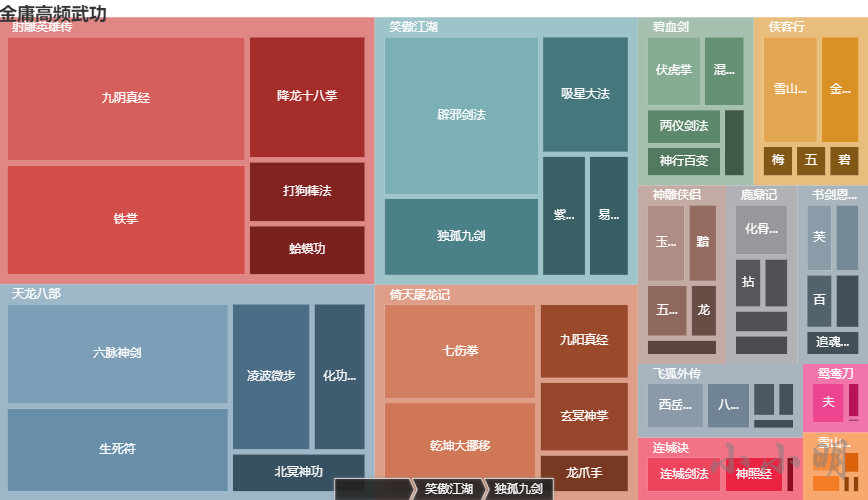

Using the same method, we analyse how often each kung fu technique appears. First load the kung fu data:

with open('data/kungfu.txt', encoding="utf-8") as f:
    data = [line.rstrip() for line in f]
novels = data[::2]
kungfus = data[1::2]
novel_kungfus = {k: v.split() for k, v in zip(novels, kungfus)}
del novels, kungfus, data

Define the counting function:

def find_main_kungfus(novel, num=10, content=None):
    if content is None:
        content = load_novel(novel)
    count = Counter()
    for name in novel_kungfus[novel]:
        count[name] = content.count(name)
    return count.most_common(num)

for novel in novel_kungfus:
    print(novel, dict(find_main_kungfus(novel, 10)))

书剑恩仇录 {'芙蓉金针': 16, '柔云剑术': 15, '百花错拳': 13, '追魂夺命剑': 12, '三分剑术': 12, '八卦刀': 10, '铁琵琶手': 9, '无极玄功拳': 9, '甩手箭': 7, '黑沙掌': 7}

侠客行 {'雪山剑法': 46, '金乌刀法': 33, '碧针清掌': 8, '五行六合掌': 8, '梅花拳': 8, '罗汉伏魔神功': 3, '无妄神功': 1, '神倒鬼跌三连环': 1, '上清快剑': 1, '黑煞掌': 1}

倚天屠龙记 {'七伤拳': 98, '乾坤大挪移': 93, '九阳真经': 46, '玄冥神掌': 43, '龙爪手': 24, '金刚伏魔圈': 21, '千蛛万毒手': 18, '幻阴指': 17, '寒冰绵掌': 16, '真武七截阵': 10}

天龙八部 {'六脉神剑': 148, '生死符': 124, '凌波微步': 77, '化功大法': 52, '北冥神功': 36, '般若掌': 36, '火焰刀': 34, '小无相功': 28, '天山六阳掌': 25, '大金刚拳': 24}

射雕英雄传 {'九阴真经': 191, '铁掌': 169, '降龙十八掌': 92, '打狗棒法': 47, '蛤蟆功': 39, '空明拳': 25, '一阳指': 22, '先天功': 14, '双手互搏': 13, '杨家枪法': 13}

碧血剑 {'伏虎掌': 30, '混元功': 23, '两仪剑法': 21, '神行百变': 18, '蝎尾鞭': 12, '破玉拳': 9, '金蛇剑法': 5, '软红蛛索': 4, '混元掌': 4, '斩蛟拳': 4}

神雕侠侣 {'玉女素心剑法': 25, '黯然销魂掌': 19, '五毒神掌': 18, '龙象般若功': 12, '玉箫剑法': 10, '七星聚会': 8, '美女拳法': 8, '天罗地网势': 7, '上天梯': 5, '三无三不手': 4}

笑傲江湖 {'辟邪剑法': 160, '独孤九剑': 80, '吸星大法': 67, '紫霞神功': 36, '易筋经': 33, '嵩山剑法': 33, '华山剑法': 30, '玉女剑十九式': 20, '恒山剑法': 20, '无双无对,宁氏一剑': 13}

连城诀 {'连城剑法': 29, '神照经': 23, '六合拳': 4}

雪山飞狐 {'胡家刀法': 8, '苗家剑法': 7, '龙爪擒拿手': 5, '追命毒龙锥': 2, '大擒拿手': 2, '飞天神行': 1}

飞狐外传 {'西岳华拳': 26, '八极拳': 20, '八仙剑法': 8, '四象步': 6, '燕青拳': 5, '赤尻连拳': 5, '一路华拳': 4, '金刚拳': 3, '毒砂掌': 3, '四门刀法': 2}

鸳鸯刀 {'夫妻刀法': 17, '呼延十八鞭': 6, '震天三十掌': 1}

鹿鼎记 {'化骨绵掌': 24, '拈花擒拿手': 12, '大慈大悲千叶手': 11, '沐家拳': 11, '八卦游龙掌': 10, '少林长拳': 7, '金刚护体神功': 5, '波罗蜜手': 4, '散花掌': 3, '金刚神掌': 2}

A visualization of the five most frequent kung fu techniques in each novel:

from pyecharts import options as opts
from pyecharts.charts import TreeMap

data = []
for novel in novel_kungfus:
    tmp = []
    data.append({"name": novel, "children": tmp})
    for name, count in find_main_kungfus(novel, 5):
        tmp.append({"name": name, "value": count})

c = (
    TreeMap()
    .add("", data, levels=[
        opts.TreeMapLevelsOpts(),
        opts.TreeMapLevelsOpts(
            color_saturation=[0.3, 0.6],
            treemap_itemstyle_opts=opts.TreeMapItemStyleOpts(
                border_color_saturation=0.7, gap_width=5, border_width=10),
            upper_label_opts=opts.LabelOpts(
                is_show=True, position='insideTopLeft', vertical_align='top'),
        ),
    ])
    .set_global_opts(title_opts=opts.TitleOpts(title="金庸高频武功"))
)
c.render_notebook()

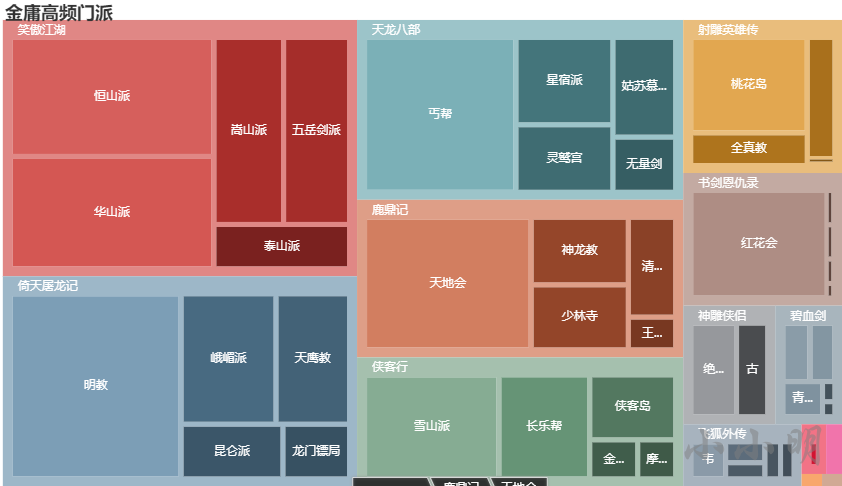

Load the sect data and get the ten most frequent sects in each novel:

with open('data/bangs.txt', encoding="utf-8") as f:
    data = [line.rstrip() for line in f]
novels = data[::2]
bangs = data[1::2]
novel_bangs = {k: v.split() for k, v in zip(novels, bangs) if k != "未知"}
del novels, bangs, data

def find_main_bangs(novel, num=10, content=None):
    if content is None:
        content = load_novel(novel)
    count = Counter()
    for name in novel_bangs[novel]:
        count[name] = content.count(name)
    return count.most_common(num)

for novel in novel_bangs:
    print(novel, dict(find_main_bangs(novel, 10)))

书剑恩仇录 {'红花会': 394, '言家拳': 7, '龙门帮': 7, '天山派': 5, '嵩阳派': 3, '南少林': 3}

侠客行 {'雪山派': 358, '长乐帮': 242, '侠客岛': 143, '金乌派': 48, '摩天崖': 38, '玄素庄': 37, '金刀寨': 25}

倚天屠龙记 {'明教': 738, '峨嵋派': 289, '天鹰教': 224, '昆仑派': 130, '龙门镖局': 85, '崆峒派': 83, '海沙派': 58, '巨鲸帮': 37, '神拳门': 20, '波斯明教': 16}

天龙八部 {'丐帮': 562, '星宿派': 203, '灵鹫宫': 157, '姑苏慕容': 150, '无量剑': 83, '逍遥派': 77, '大理段氏': 75, '青城派': 65, '蓬莱派': 23, '伏牛派': 8}

射雕英雄传 {'桃花岛': 289, '全真教': 99, '铁掌帮': 87, '仙霞派': 5}

白马啸西风 {'晋威镖局': 5}

碧血剑 {'仙都派': 51, '金龙帮': 47, '青竹帮': 45, '渤海派': 8, '永胜镖局': 6, '点苍派': 4, '飞虎寨': 4, '会友镖局': 2, '东支': 2, '千柳庄': 2}

神雕侠侣 {'绝情谷': 128, '古墓派': 87}

笑傲江湖 {'恒山派': 552, '华山派': 521, '嵩山派': 297, '五岳剑派': 281, '泰山派': 137, '衡山派': 102, '福威镖局': 102, '日月神教': 64, '武当派': 46, '金刀王家': 20}

连城诀 {'血刀门': 24}

雪山飞狐 {'平通镖局': 7, '饮马川山寨': 4, '青藏派': 2, '无极门': 2, '百会寺': 1}

飞狐外传 {'韦陀门': 49, '八卦门': 31, '天龙门': 24, '太极门': 22, '飞马镖局': 19, '五虎门': 16, '少林派': 15, '枫叶庄': 8, '镇远镖局': 2}

鸳鸯刀 {'威信镖局': 5}

鹿鼎记 {'天地会': 542, '神龙教': 161, '少林寺': 155, '清凉寺': 116, '王屋派': 38, '铁剑门': 12, '金顶门': 8, '武夷派': 3}

Visualization:

from pyecharts import options as opts
from pyecharts.charts import TreeMap

data = []
for novel in novel_bangs:
    tmp = []
    data.append({"name": novel, "children": tmp})
    for name, count in find_main_bangs(novel, 5):
        tmp.append({"name": name, "value": count})

c = (
    TreeMap()
    .add("", data, levels=[
        opts.TreeMapLevelsOpts(),
        opts.TreeMapLevelsOpts(
            color_saturation=[0.3, 0.6],
            treemap_itemstyle_opts=opts.TreeMapItemStyleOpts(
                border_color_saturation=0.7, gap_width=5, border_width=10),
            upper_label_opts=opts.LabelOpts(
                is_show=True, position='insideTopLeft', vertical_align='top'),
        ),
    ])
    .set_global_opts(title_opts=opts.TitleOpts(title="金庸高频门派"))
)
c.render_notebook()

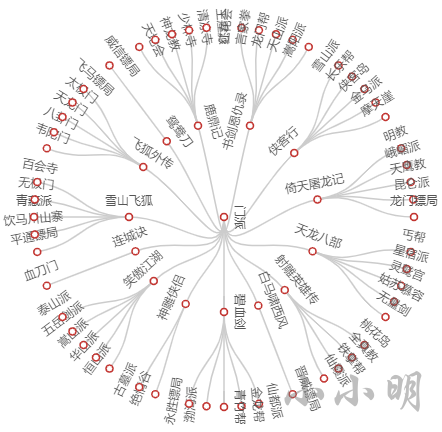

from pyecharts.charts import Tree

c = (
    Tree()
    .add("", [{"name": "门派", "children": data}], layout="radial")
)
c.render_notebook()

Next we write a function that takes a novel's title and shows its most frequent characters, kung fu techniques, and sects:

from IPython.display import display
from pyecharts import options as opts
from pyecharts.charts import Bar

def show_top10(novel):
    content = load_novel(novel)
    # One horizontal bar chart each for characters, kung fu, and sects
    for label, finder in [("主角", find_main_charecters),
                          ("功夫", find_main_kungfus),
                          ("门派", find_main_bangs)]:
        items = finder(novel, 10, content)[::-1]
        k, v = map(list, zip(*items))
        c = (
            Bar(init_opts=opts.InitOpts("720px", "320px"))
            .add_xaxis(k)
            .add_yaxis("", v)
            .reversal_axis()
            .set_series_opts(label_opts=opts.LabelOpts(position="right"))
            .set_global_opts(title_opts=opts.TitleOpts(title=f"{novel}{label}"))
        )
        display(c.render_notebook())

For example, for 《天龙八部》:

show_top10("天龙八部")

Before segmenting the text, we can register every character, kung fu technique, and sect as a custom word in jieba:

import jieba

for novel, names in novel_names.items():
    for name in names:
        jieba.add_word(name)
for novel, kungfus in novel_kungfus.items():
    for kungfu in kungfus:
        jieba.add_word(kungfu)
for novel, bangs in novel_bangs.items():
    for bang in bangs:
        jieba.add_word(bang)

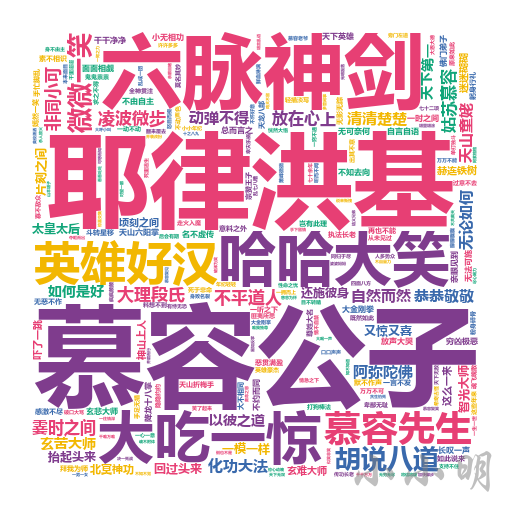

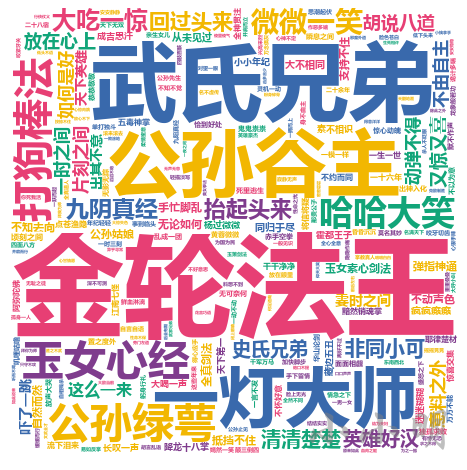

Here we keep only idioms, sayings, and phrases that are at least four characters long, taking 《天龙八部》 as the example:

from IPython.display import Image
import stylecloud
import jieba
import re

# Replace runs of non-Chinese characters with a space
text = re.sub("[^一-龟]+", " ", load_novel("天龙八部"))
words = [word for word in jieba.cut(text) if len(word) >= 4]
stylecloud.gen_stylecloud(
    " ".join(words),
    collocations=False,
    # Windows font path; point this at a Chinese font on other systems
    font_path=r'C:\Windows\Fonts\msyhbd.ttc',
    icon_name='fas fa-square',
    output_name='tmp.png',
)
Image(filename='tmp.png')
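The cleaning step and the length filter can be checked on their own, without jieba or stylecloud. The sketch below uses a made-up sentence and a hand-written token list in place of jieba's output; both, and the helper name `keep_chinese`, are assumptions for illustration.

```python
import re


def keep_chinese(text):
    """Replace every run of characters outside the range 一..龟 with one space."""
    return re.sub("[^一-龟]+", " ", text)


# Punctuation, Latin letters, and digits all fall outside the range
cleaned = keep_chinese("段誉说:“Hello, 123!”王语嫣")
print(cleaned)

# Length filter as in the article, applied to a hypothetical token list
tokens = ["段誉", "凌波微步", "的", "六脉神剑"]
long_tokens = [t for t in tokens if len(t) >= 4]
print(long_tokens)
```

The character class spans the code points from 一 (U+4E00) to 龟 (U+9F9F), which covers common Chinese characters while excluding full-width punctuation such as ":" and ",".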

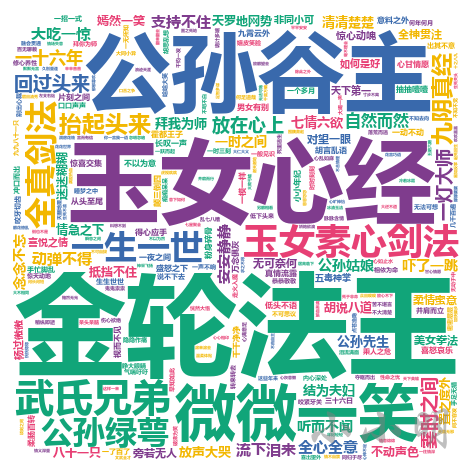

The two most important characters in 《神雕侠侣》 are 杨过 and 小龙女, and we may well be curious about what happens between them. How can a program give us a quick impression?

The idea is to take every paragraph of 《神雕侠侣》 that mentions both 杨过 and 小龙女, segment it with jieba, and build a word cloud.

Again we keep only words of four or more characters:

data = []
for line in load_novel("神雕侠侣").splitlines():
    if "杨过" in line and "小龙女" in line:
        line = re.sub("[^一-龟]+", " ", line)
        data.extend(word for word in jieba.cut(line) if len(word) >= 4)
stylecloud.gen_stylecloud(
    " ".join(data),
    collocations=False,
    font_path=r'C:\Windows\Fonts\msyhbd.ttc',
    icon_name='fas fa-square',
    output_name='tmp.png',
)
Image(filename='tmp.png')

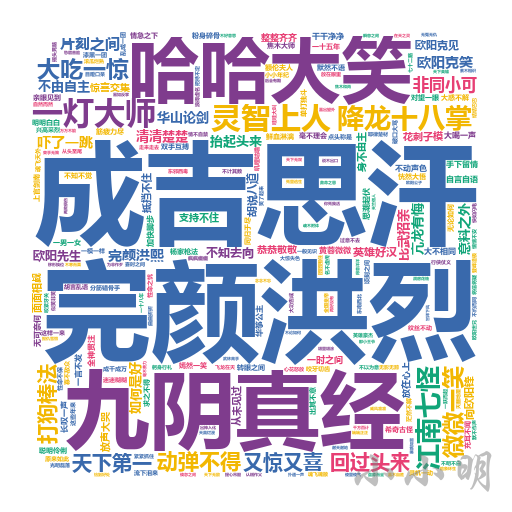

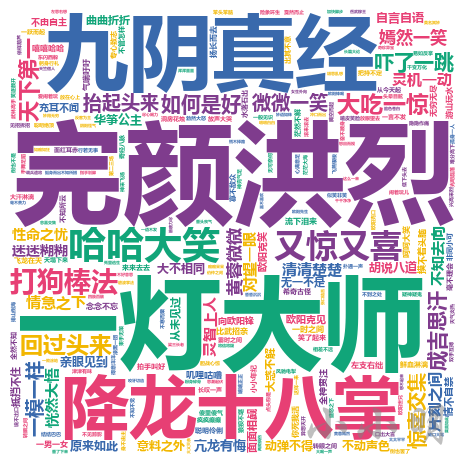

The same approach for 郭靖 and 黄蓉:

data = []
for line in load_novel("射雕英雄传").splitlines():
    if "郭靖" in line and "黄蓉" in line:
        line = re.sub("[^一-龟]+", " ", line)
        data.extend(word for word in jieba.cut(line) if len(word) >= 4)
stylecloud.gen_stylecloud(
    " ".join(data),
    collocations=False,
    font_path=r'C:\Windows\Fonts\msyhbd.ttc',
    icon_name='fas fa-square',
    output_name='tmp.png',
)
Image(filename='tmp.png')

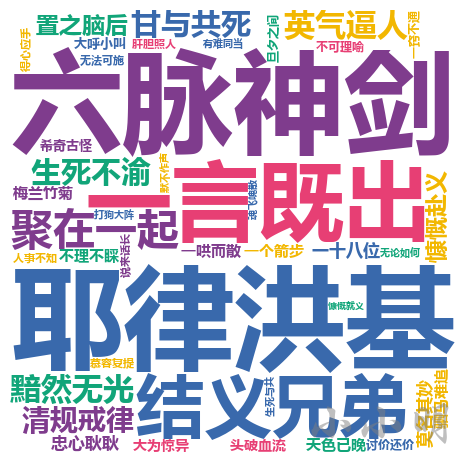

data = []
for line in load_novel("天龙八部").splitlines():
    if ("萧峰" in line or "乔峰" in line) and "段誉" in line and "虚竹" in line:
        line = re.sub("[^一-龟]+", " ", line)
        data.extend(word for word in jieba.cut(line) if len(word) >= 4)
stylecloud.gen_stylecloud(
    " ".join(data),
    collocations=False,
    font_path=r'C:\Windows\Fonts\msyhbd.ttc',
    icon_name='fas fa-square',
    output_name='tmp.png',
)
Image(filename='tmp.png')

The 15 novels are estimated to feature more than 1,400 characters. Below we go through every paragraph of each novel and add one to the count of every pair of characters that appear in the same paragraph. Finally we take the 200 most frequent pairs and visualize them.

The complete code:

from collections import Counter
from pyecharts import options as opts
from pyecharts.charts import Graph
import math
import itertools
import re

count = Counter()
for novel in novel_names:
    # Try longer names first so a short name cannot shadow a longer one
    names = sorted(novel_names[novel], key=len, reverse=True)
    re_rule = "|".join(re.escape(name) for name in names)
    for line in load_novel(novel).splitlines():
        found = sorted(set(re.findall(re_rule, line)))
        if len(found) >= 2:
            for s, t in itertools.combinations(found, 2):
                count[(s, t)] += 1
count = count.most_common(200)

node_count, nodes, links = Counter(), [], []
for (n1, n2), v in count:
    node_count[n1] += 1
    node_count[n2] += 1
    links.append({"source": n1, "target": n2})
for node, degree in node_count.items():
    # Node size grows with the logarithm of the number of links
    nodes.append({"name": node, "symbolSize": int(math.log(degree)*5)+5})

c = (
    Graph(init_opts=opts.InitOpts("1280px", "960px"))
    .add("", nodes, links, repulsion=30)
)
c.render("tmp.html")
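The pair counting is the core of this step and is independent of the plotting. As a minimal sketch of paragraph-level co-occurrence counting, the snippet below runs on three made-up paragraphs; the function name `cooccurrence` and the sample text are hypothetical.

```python
import itertools
import re
from collections import Counter


def cooccurrence(paragraphs, names):
    """Count, for every pair of names, the paragraphs that mention both."""
    # Longer names first, so that a short name cannot shadow a longer one
    ordered = sorted(names, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(n) for n in ordered))
    pairs = Counter()
    for paragraph in paragraphs:
        # De-duplicate and sort so each pair has one canonical (s, t) order
        found = sorted(set(pattern.findall(paragraph)))
        for s, t in itertools.combinations(found, 2):
            pairs[(s, t)] += 1
    return pairs


# Made-up paragraphs, for illustration only
paragraphs = [
    "杨过与小龙女并肩而立。",
    "杨过向郭靖行礼,小龙女微微一笑。",
    "郭靖独自练功。",
]
pairs = cooccurrence(paragraphs, ["杨过", "小龙女", "郭靖"])
print(pairs.most_common())
```

Sorting the names found in each paragraph matters: without it, (杨过, 小龙女) and (小龙女, 杨过) would be counted as two different pairs.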

This time we render to an HTML file, which makes the result easier to explore. The relationships among the top 200 character pairs are shown below:

The relationships between sects across all novels can be analysed in the same way:

from collections import Counter
from pyecharts import options as opts
from pyecharts.charts import Graph
import math
import itertools
import re

count = Counter()
for novel in novel_bangs:
    # Try longer names first so a short name cannot shadow a longer one
    bangs = sorted(novel_bangs[novel], key=len, reverse=True)
    re_rule = "|".join(re.escape(bang) for bang in bangs)
    for line in load_novel(novel).splitlines():
        found = sorted(set(re.findall(re_rule, line)))
        if len(found) >= 2:
            for s, t in itertools.combinations(found, 2):
                count[(s, t)] += 1
count = count.most_common(200)

node_count, nodes, links = Counter(), [], []
for (n1, n2), v in count:
    node_count[n1] += 1
    node_count[n2] += 1
    links.append({"source": n1, "target": n2})
for node, degree in node_count.items():
    nodes.append({"name": node, "symbolSize": int(math.log(degree)*5)+5})

c = (
    Graph(init_opts=opts.InitOpts("1280px", "960px"))
    .add("", nodes, links, repulsion=50)
)
c.render("tmp2.html")

Word2Vec is an efficient tool for representing words as real-valued vectors. Next we use it to process the novels.

The gensim package provides a Python implementation.

Source code: https://github.com/RaRe-Technologies/gensim

Official documentation: http://radimrehurek.com/gensim/

I have used gensim before for matching similar texts; if you are interested, see my earlier article "Three Methods for Batch Fuzzy Matching" (《批量模糊匹配的三种方法》).

First, every paragraph of every novel is segmented into words and collected into one list (the kernel can be restarted at this point; the code below reloads everything it needs):

import jieba

def load_novel(novel):
    with open(f'novels/{novel}.txt', encoding="u8") as f:
        return f.read()

with open('data/names.txt', encoding="utf-8") as f:
    data = f.read().splitlines()
    novels = data[::2]
    names = []
    for line in data[1::2]:
        names.extend(line.split())

with open('data/kungfu.txt', encoding="utf-8") as f:
    data = f.read().splitlines()
    kungfus = []
    for line in data[1::2]:
        kungfus.extend(line.split())

with open('data/bangs.txt', encoding="utf-8") as f:
    data = f.read().splitlines()
    bangs = []
    for line in data[1::2]:
        bangs.extend(line.split())

for name in names:
    jieba.add_word(name)
for kungfu in kungfus:
    jieba.add_word(kungfu)
for bang in bangs:
    jieba.add_word(bang)

# Remove duplicates
names = list(set(names))
kungfus = list(set(kungfus))
bangs = list(set(bangs))

sentences = []
for novel in novels:
    print(f"处理:{novel}")
    for line in load_novel(novel).splitlines():
        sentences.append(jieba.lcut(line))

处理:书剑恩仇录

处理:碧血剑

处理:射雕英雄传

处理:神雕侠侣

处理:雪山飞狐

处理:飞狐外传

处理:白马啸西风

处理:倚天屠龙记

处理:鸳鸯刀

处理:天龙八部

处理:连城诀

处理:侠客行

处理:笑傲江湖

处理:鹿鼎记

处理:越女剑

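Next we train a Word2Vec model:

import gensim

model = gensim.models.Word2Vec(sentences)

Training took about 15 seconds on my machine. If it takes much longer for you, save the trained model to disk:

model.save("louis_cha.model")

It can then be loaded directly from disk later:

model = gensim.models.Word2Vec.load("louis_cha.model")

With a model in hand, we can run some simple and fun experiments.

Note: training involves some randomness, so the results below depend on the particular model and will not be reproduced exactly.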

First, the characters most similar to 乔峰 (also known as 萧峰):

model.wv.most_similar(positive=["乔峰", "萧峰"])

[('段正淳', 0.8006908893585205),
 ('张翠山', 0.8000873923301697),
 ('虚竹', 0.7957292795181274),
 ('赵敏', 0.7937390804290771),
 ('游坦之', 0.7803780436515808),
 ('石破天', 0.777414858341217),
 ('令狐冲', 0.7761642932891846),
 ('慕容复', 0.7629764676094055),
 ('贝海石', 0.7625609040260315),
 ('钟万仇', 0.7612598538398743)]

Next, the characters most similar to 阿朱 and 蛛儿:

model.wv.most_similar(positive=["阿朱", "蛛儿"])

[('殷素素', 0.8681862354278564),
 ('赵敏', 0.8558328747749329),
 ('木婉清', 0.8549383878707886),
 ('王语嫣', 0.8355365991592407),
 ('钟灵', 0.8338050842285156),
 ('小昭', 0.8316497206687927),
 ('阿紫', 0.8169034123420715),
 ('程灵素', 0.8153879642486572),
 ('周芷若', 0.8046135306358337),
 ('段誉', 0.8006759285926819)]

Besides characters, we can also look at sects:

model.wv.most_similar(positive=["丐帮"])

[('恒山派', 0.8266139626502991),
 ('门人', 0.8158190846443176),
 ('天地会', 0.8078100085258484),
 ('雪山派', 0.8041207194328308),
 ('魔教', 0.7935695648193359),
 ('嵩山派', 0.7908961772918701),
 ('峨嵋派', 0.7845258116722107),
 ('红花会', 0.7830792665481567),
 ('星宿派', 0.7826651930809021),
 ('长乐帮', 0.7759961485862732)]

And at the kung fu techniques most similar to 降龙十八掌:

model.wv.most_similar(positive=["降龙十八掌"])

[('空明拳', 0.9040402770042419),
 ('打狗棒法', 0.9009960293769836),
 ('太极拳', 0.8992120623588562),
 ('八卦掌', 0.8909589648246765),
 ('一阳指', 0.8891675472259521),
 ('七十二路', 0.8713394999504089),
 ('绝招', 0.8693119287490845),
 ('胡家刀法', 0.8578060865402222),
 ('六脉神剑', 0.8568121194839478),
 ('七伤拳', 0.8560649156570435)]
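The scores in these lists are cosine similarities between word vectors. To make that concrete, here is a simplified pure-Python sketch of a similarity ranking on toy two-dimensional vectors. It is not gensim's implementation: the vectors, the words `w1` to `w4`, and the plain averaging of the query vectors are all assumptions made for illustration.

```python
import math


def cosine(u, v):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar(vectors, positive, topn=3):
    """Rank the other words by cosine similarity to the mean query vector."""
    dims = len(next(iter(vectors.values())))
    query = [sum(vectors[w][i] for w in positive) / len(positive) for i in range(dims)]
    scores = [(w, cosine(vec, query)) for w, vec in vectors.items() if w not in positive]
    return sorted(scores, key=lambda item: item[1], reverse=True)[:topn]


# Toy vectors standing in for trained word vectors
toy = {"w1": [1.0, 0.0], "w2": [0.9, 0.1], "w3": [0.0, 1.0], "w4": [0.7, 0.7]}
ranking = most_similar(toy, ["w1"])
print(ranking)
```

Words that occur in similar contexts end up with vectors pointing in similar directions, which is why characters with comparable roles score highly against each other in the lists above.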

A well-known Word2Vec example is the analogy "China - Beijing = France - Paris". So let's ask: 段誉 is to 段公子 as 乔峰 is to what?

def find_relationship(a, b, c):
    # d is the word whose vector is closest to b - a + c
    d, _ = model.wv.most_similar(positive=[b, c], negative=[a])[0]
    print(f"{a}-{b} 犹如 {c}-{d}")

find_relationship("段誉", "段公子", "乔峰")

段誉-段公子 犹如 乔峰-乔帮主

Some similar examples:

# Couples
find_relationship("郭靖", "黄蓉", "杨过")
# Father-in-law and son-in-law
find_relationship("令狐冲", "任我行", "郭靖")
# Not a couple
find_relationship("郭靖", "华筝", "杨过")

郭靖-黄蓉 犹如 杨过-小龙女

令狐冲-任我行 犹如 郭靖-黄药师

郭靖-华筝 犹如 杨过-郭芙

Relationships involving 韦小宝:

# 韦小宝

find_relationship("杨过", "小龙女", "韦小宝")

find_relationship("令狐冲", "盈盈", "韦小宝")

find_relationship("张无忌", "赵敏", "韦小宝")

find_relationship("郭靖", "黄蓉", "韦小宝")

杨过-小龙女 犹如 韦小宝-康熙

令狐冲-盈盈 犹如 韦小宝-方怡

张无忌-赵敏 犹如 韦小宝-阿紫

郭靖-黄蓉 犹如 韦小宝-丁珰

Relationships between characters, sects, and kung fu:

find_relationship("郭靖", "降龙十八掌", "黄蓉")

find_relationship("武当", "张三丰", "少林")

find_relationship("任我行", "魔教", "令狐冲")

郭靖-降龙十八掌 犹如 黄蓉-打狗棒法

武当-张三丰 犹如 少林-玄慈

任我行-魔教 犹如 令狐冲-恒山派
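The analogy queries rest on simple vector arithmetic: the answer is the word whose vector lies closest to b - a + c. The sketch below illustrates this with hand-made two-dimensional vectors. It is a simplified illustration rather than gensim's exact computation, and every word and vector in `toy` is a hypothetical stand-in.

```python
import math


def cosine(u, v):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(vectors, a, b, c):
    """Return the word d (other than a, b, c) whose vector is closest to b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = (w for w in vectors if w not in (a, b, c))
    return max(candidates, key=lambda w: cosine(vectors[w], target))


# Toy vectors: the second coordinate separates "partner" from "hero"
toy = {
    "hero_a": [1.0, 0.0],
    "partner_a": [1.0, 1.0],
    "hero_b": [3.0, 0.0],
    "partner_b": [3.0, 1.0],
    "bystander": [0.0, 3.0],
}
answer = analogy(toy, "hero_a", "partner_a", "hero_b")
print(answer)
```

The three input words are excluded from the candidates; otherwise the query word c itself would often be the nearest vector.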

Word2Vec has mapped every word to a point in a vector space, so we can use that space to cluster the words.

First collect the vectors of all characters:

import numpy as np

all_names = []
word_vectors = []
for name in names:
    # Skip names the model has no vector for
    if name in model.wv:
        all_names.append(name)
        word_vectors.append(model.wv[name])
all_names = np.array(all_names)
word_vectors = np.vstack(word_vectors)

There are many clustering algorithms; here we use basic k-means. With only three clusters, the characters separate fairly clearly into protagonists, supporting characters, and extras:

from sklearn.cluster import KMeans
import pandas as pd

N = 3
labels = KMeans(N).fit(word_vectors).labels_
df = pd.DataFrame({"name": all_names, "label": labels})
for label, group in df.groupby("label").name:
    print(f"类别{label}共{len(group)}个角色,前100个角色有:\n{','.join(group[:100])}\n")


The relative size of each cluster tells us whether its members are protagonists, supporting characters, or extras.

Next we filter out the extras (the largest cluster) and re-cluster the rest into four groups:

c = pd.Series(labels).mode().iat[0]
remain_names = all_names[labels != c]
remain_vectors = word_vectors[labels != c]
remain_label = KMeans(4).fit(remain_vectors).labels_
df = pd.DataFrame({"name": remain_names, "label": remain_label})
for label, group in df.groupby("label").name:
    print(f"类别{label}共{len(group)}个角色,前100个角色有:\n{','.join(group[:100])}\n")

类别0共103个角色,前100个角色有:

李秋水,向问天,马钰,顾金标,丁不四,耶律齐,谢烟客,陈正德,殷天正,洪凌波,灵智上人,闵柔,公孙止,完颜萍,梅超风,鸠摩智,冲虚,冯锡范,尹克西,陆冠英,王剑英,左冷禅,商老太,尹志平,徐铮,灭绝师太,风波恶,袁紫衣,殷梨亭,宋青书,阿九,韩小莹,乌老大,杨康,何铁手,范遥,朱聪,郝大通,周仲英,风际中,何太冲,张召重,一灯大师,田归农,尼摩星,霍都,潇湘子,梅剑和,南希仁,玄难,纪晓芙,韩宝驹,邓百川,裘千尺,朱子柳,宋远桥,渡难,俞岱岩,武三通,云中鹤,余沧海,花铁干,杨逍,段延庆,巴天石,东方不败,归辛树,梁子翁,赵志敬,韦一笑,赵半山,丘处机,武修文,侯通海,鲁有脚,石清,彭连虎,胖头陀,达尔巴,裘千仞,金花婆婆,金轮法王,木高峰,苗人凤,任我行,王处一,柯镇恶,樊一翁,黄药师,欧阳克,张三丰,曹云奇,沙通天,文泰来,白万剑,鹿杖客,陆菲青,班淑娴,商宝震,全金发

类别1共6个角色,前100个角色有:

渔人,汉子,少妇,胖子,大汉,农夫

类别2共56个角色,前100个角色有:

张无忌,余鱼同,慕容复,木婉清,田伯光,郭襄,周伯通,陈家洛,乔峰,张翠山,丁珰,游坦之,岳不群,黄蓉,洪七公,岳灵珊,周芷若,马春花,杨过,阿紫,阿朱,赵敏,令狐冲,段正淳,水笙,石破天,徐天宏,程灵素,林平之,双儿,郭靖,袁承志,胡斐,陆无双,狄云,霍青桐,王语嫣,萧峰,李沅芷,骆冰,李莫愁,周绮,丁典,韦小宝,段誉,戚芳,小龙女,钟灵,殷素素,李文秀,谢逊,穆念慈,郭芙,方怡,仪琳,虚竹

类别3共236个角色,前100个角色有:

空智,章进,澄观,薛鹊,秃笔翁,曲非烟,田青文,郭啸天,陆大有,方证,阿碧,陶子安,吴三桂,钱老本,马行空,洪胜海,张勇,瑞大林,包不同,慕容景岳,康广陵,施琅,陆高轩,袁冠南,张康年,桃花仙,定逸,执法长老,范蠡,钟镇,陈达海,桃根仙,阿曼,李四,札木合,吴之荣,哈合台,传功长老,卓天雄,茅十八,风清扬,崔希敏,方生,王进宝,葛尔丹,常金鹏,秦红棉,薛慕华,侍剑,孙仲寿,范一飞,归二娘,孙不二,吴六奇,杨铁心,万震山,单正,玄寂,武敦儒,刘正风,西华子,樊纲,店伴,何足道,小昭,孙婆婆,苏普,谭婆,朱九真,耶律洪基,圆真,萧中慧,都大锦,司马林,叶二娘,安大娘,张三,杨成协,掌棒龙头,福康安,玉林,顾炎武,马超兴,殷离,莫声谷,郑萼,桃干仙,华筝,计无施,苏鲁克,费要多罗,苏荃,玄慈,卫璧,马光佐,常遇春,沐剑声,包惜弱,朱长龄,褚万里

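The result differs from run to run, and you can keep experimenting with the number of clusters. In this result, villains tend to be grouped together, characters without proper names tend to be grouped together, and protagonists tend to be grouped together.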

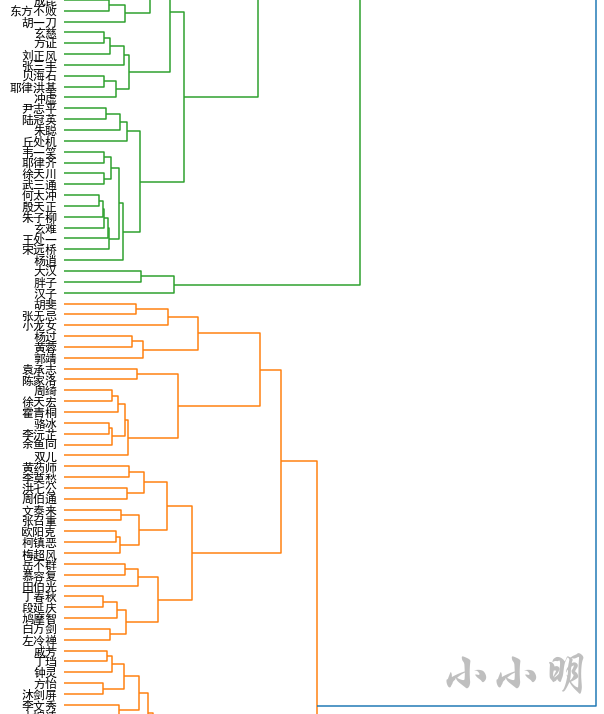

Now we use hierarchical clustering to look at the hierarchy among characters; as before, the extras are left out.

Hierarchical clustering is provided by scipy.cluster.hierarchy. Before calling it, fix the garbled Chinese text in matplotlib:

import matplotlib.pyplot as plt
%matplotlib inline

plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['axes.unicode_minus'] = False

Then run the clustering:

import scipy.cluster.hierarchy as sch

y = sch.linkage(remain_vectors, method="ward")
_, ax = plt.subplots(figsize=(10, 80))
z = sch.dendrogram(y, orientation='right')
# 'leaves' gives the order in which the samples appear along the axis
idx = z['leaves']
ax.set_xticks([])
ax.set_yticklabels(remain_names[idx], fontdict={'fontsize': 12})
ax.set_frame_on(False)
plt.show()

This gives a hierarchy map of the characters in Jin Yong's fictional universe. The figure is long, so only part of it is shown:

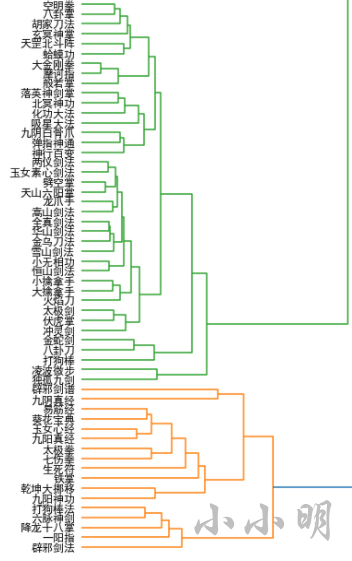

Apply the same hierarchical clustering to the kung fu techniques:

all_names = []
word_vectors = []
for name in kungfus:
    if name in model.wv:
        all_names.append(name)
        word_vectors.append(model.wv[name])
all_names = np.array(all_names)
word_vectors = np.vstack(word_vectors)

Y = sch.linkage(word_vectors, method="ward")
_, ax = plt.subplots(figsize=(10, 40))
Z = sch.dendrogram(Y, orientation='right')
idx = Z['leaves']
ax.set_xticks([])
ax.set_yticklabels(all_names[idx], fontdict={'fontsize': 12})
ax.set_frame_on(False)
plt.show()

The figure is long, so only part of it is shown:

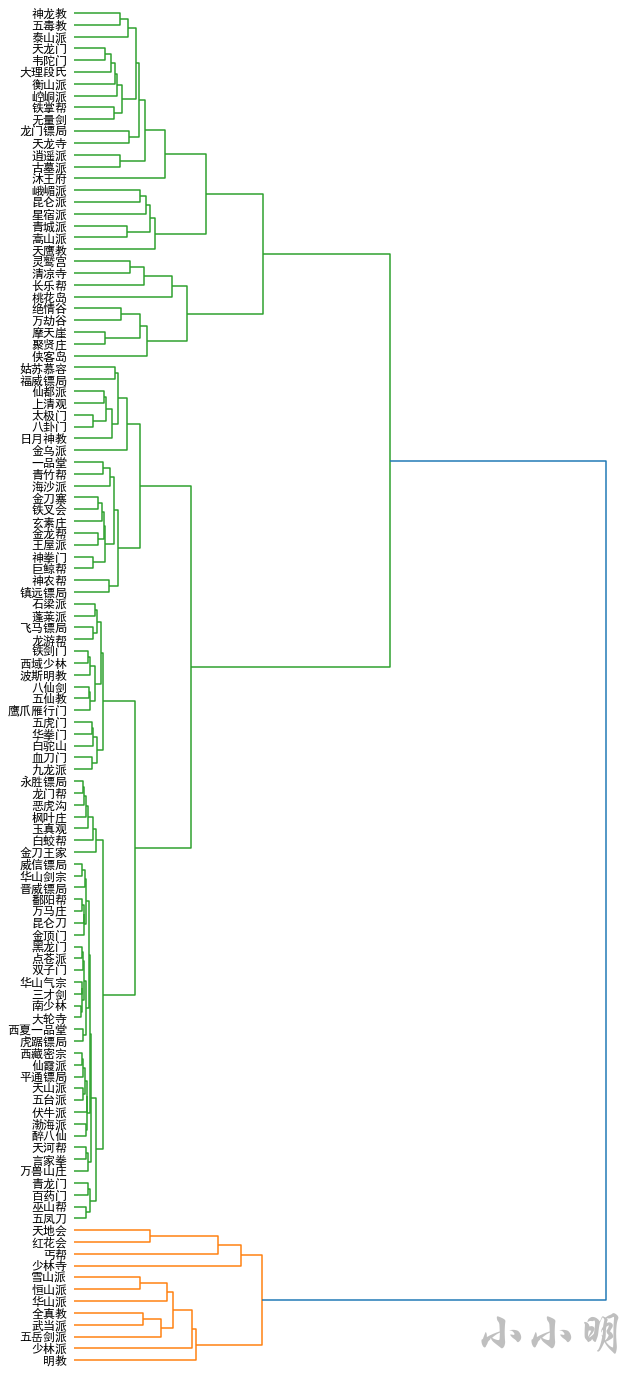

Finally, hierarchical clustering of the sects:

all_names = []
word_vectors = []
for name in bangs:
    if name in model.wv:
        all_names.append(name)
        word_vectors.append(model.wv[name])
all_names = np.array(all_names)
word_vectors = np.vstack(word_vectors)

Y = sch.linkage(word_vectors, method="ward")
_, ax = plt.subplots(figsize=(10, 25))
Z = sch.dendrogram(Y, orientation='right')
idx = Z['leaves']
ax.set_xticks([])
ax.set_yticklabels(all_names[idx], fontdict={'fontsize': 12})
ax.set_frame_on(False)
plt.show()

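This article went from collecting the text of Jin Yong's novels, through ordinary frequency, plot, and relationship analysis, to similarity analysis in word-vector space, and finally to hierarchical clustering with scipy across all the novels. In summary:

Data collection with a web scraper

Statistical analysis with pandas

Visual analysis with the pyecharts library

Word-vector analysis with Word2Vec

Cluster analysis with sklearn.cluster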
