Welcome to my GitHub

https://github.com/zq2599/blog_demos

Contents: a categorized index of all my original articles with their companion source code, covering Java, Docker, Kubernetes, DevOps and more.

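Overview

This is the second article in the "Flink sink in practice" series. The previous article, "Flink sink in practice, part 1: a first look", covered the basics of sinks. This chapter walks through sinking data to Kafka.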

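Links to the full series

- "Flink sink in practice, part 1: a first look"
- "Flink sink in practice, part 2: kafka"
- "Flink sink in practice, part 3: cassandra3"
- "Flink sink in practice, part 4: custom sinks"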

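Versions and environment

The environment and versions used in this walkthrough are:

- JDK: 1.8.0_211
- Flink: 1.9.2
- Maven: 3.6.0
- Operating system: macOS Catalina 10.15.3 (MacBook Pro 13-inch, 2018)
- IDEA: 2018.3.5 (Ultimate Edition)
- Kafka: 2.4.0
- Zookeeper: 3.5.5

Make sure the environment and services above are ready before continuing.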

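Source code download

If you would rather not write the code yourself, the source for the whole series can be downloaded from GitHub (https://github.com/zq2599/blog_demos).

The git project contains several folders; the application for this chapter is in the flinksinkdemo folder.

With that in place, development can begin.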

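Preparation

Before writing any code, check the official documentation to get the basics:

- Address: https://ci.apache.org/projects/flink/flink-docs-release-1.9/dev/connectors/kafka.html
- I am using Kafka 2.4.0 here, so I looked up the matching library and classes for that version in the official documentation.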

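Kafka preparation

1. Create a topic named test006 with four partitions. Reference command:

```shell
./kafka-topics.sh \
--create \
--bootstrap-server 127.0.0.1:9092 \
--replication-factor 1 \
--partitions 4 \
--topic test006
```

2. Consume the messages of test006 in a console. Reference command:

```shell
./kafka-console-consumer.sh \
--bootstrap-server 127.0.0.1:9092 \
--topic test006
```

3. From now on, any message that arrives on this topic is printed to the console.

4. Next, start coding.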

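Creating the project

1. Create a Flink project with the maven command:

```shell
mvn archetype:generate \
-DarchetypeGroupId=org.apache.flink \
-DarchetypeArtifactId=flink-quickstart-java \
-DarchetypeVersion=1.9.2
```

2. When prompted, enter com.bolingcavalry as the groupId and flinksinkdemo as the artifactId. This creates a maven project.

3. Add the Kafka dependency to pom.xml:

```xml
<dependency>
  <groupId>org.apache.flink</groupId>
  <artifactId>flink-connector-kafka_2.11</artifactId>
  <version>1.9.0</version>
</dependency>
```

4. The project is now created. Next, write the code for the Flink job.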

A sink that sends string messages

Try sending string messages first:

1. Create an implementation of the KafkaSerializationSchema interface. This class is passed as an argument when the sink object is created later:

```java
package com.bolingcavalry.addsink;

import org.apache.flink.streaming.connectors.kafka.KafkaSerializationSchema;
import org.apache.kafka.clients.producer.ProducerRecord;

import java.nio.charset.StandardCharsets;

public class ProducerStringSerializationSchema implements KafkaSerializationSchema<String> {

    private String topic;

    public ProducerStringSerializationSchema(String topic) {
        super();
        this.topic = topic;
    }

    @Override
    public ProducerRecord<byte[], byte[]> serialize(String element, Long timestamp) {
        // The message value is the UTF-8 encoding of the string; no key is set
        return new ProducerRecord<byte[], byte[]>(topic, element.getBytes(StandardCharsets.UTF_8));
    }
}
```

2. Create the job class KafkaStrSink. Pay attention to the arguments of the FlinkKafkaProducer object: FlinkKafkaProducer.Semantic.EXACTLY_ONCE requests exactly-once semantics:

```java
package com.bolingcavalry.addsink;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer;

import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

public class KafkaStrSink {

    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Default parallelism is 1
        env.setParallelism(1);

        Properties properties = new Properties();
        // Address of the Kafka broker
        properties.setProperty("bootstrap.servers", "192.168.50.43:9092");

        String topic = "test006";

        FlinkKafkaProducer<String> producer = new FlinkKafkaProducer<>(topic,
                new ProducerStringSerializationSchema(topic),
                properties,
                FlinkKafkaProducer.Semantic.EXACTLY_ONCE);

        // Build a list of strings to send
        List<String> list = new ArrayList<>();
        list.add("aaa");
        list.add("bbb");
        list.add("ccc");
        list.add("ddd");
        list.add("eee");
        list.add("fff");
        list.add("aaa");

        // Send every element to Kafka; the sink runs with parallelism 4
        env.fromCollection(list)
                .addSink(producer)
                .setParallelism(4);

        env.execute("sink demo : kafka str");
    }
}
```

使用mvn命令编译构建&#xff0c;在target目录得到文件flinksinkdemo-1.0-SNAPSHOT.jar&#xff1b;

在flink的web页面提交flinksinkdemo-1.0-SNAPSHOT.jar&#xff0c;并制定执行类&#xff0c;如下图&#xff1a;

提交成功后&#xff0c;如果flink有四个可用slot&#xff0c;任务会立即执行&#xff0c;会在消费kafak消息的终端收到消息&#xff0c;如下图&#xff1a;

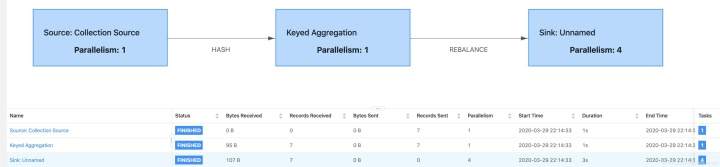

任务执行情况如下图&#xff1a;
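One point about the EXACTLY_ONCE setting used by KafkaStrSink may be worth a sketch. According to the Flink Kafka connector documentation, the producer's default transaction.timeout.ms is one hour, while Kafka brokers by default cap it at 15 minutes through transaction.max.timeout.ms, so one of the two usually has to be adjusted. The following is a minimal sketch using only java.util.Properties; the class name ExactlyOnceProducerProps, the helper build, and the 10-minute value are illustrative assumptions, not part of the original project, and it assumes the broker still has its default limit.

```java
import java.util.Properties;

final class ExactlyOnceProducerProps {

    // Assumption: the broker keeps Kafka's default transaction.max.timeout.ms (15 minutes)
    static final long ASSUMED_BROKER_MAX_TIMEOUT_MS = 15 * 60 * 1000L;

    /** Builds producer properties whose transaction timeout stays within the assumed broker limit. */
    static Properties build(String bootstrapServers, long transactionTimeoutMs) {
        if (transactionTimeoutMs > ASSUMED_BROKER_MAX_TIMEOUT_MS) {
            throw new IllegalArgumentException(
                    "transaction.timeout.ms exceeds the assumed broker limit: " + transactionTimeoutMs);
        }
        Properties properties = new Properties();
        properties.setProperty("bootstrap.servers", bootstrapServers);
        // Producer-side transaction timeout, passed on to the Kafka client
        properties.setProperty("transaction.timeout.ms", String.valueOf(transactionTimeoutMs));
        return properties;
    }

    public static void main(String[] args) {
        // 10 minutes is an arbitrary illustrative value below the assumed 15-minute limit
        Properties properties = build("192.168.50.43:9092", 10 * 60 * 1000L);
        properties.forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

The resulting Properties object could be handed to the FlinkKafkaProducer constructor in place of the one built inline in the job. Raising transaction.max.timeout.ms on the broker is the alternative, and which of the two fits depends on the cluster.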

A sink that sends object messages

Next, try sending object messages. The object type chosen here is the commonly used Tuple2:

1. Create an implementation of the KafkaSerializationSchema interface, which is later passed to the sink object. Note the comment in the exception handler: use printStackTrace() with caution in production!

```java
package com.bolingcavalry.addsink;

import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.shaded.jackson2.com.fasterxml.jackson.core.JsonProcessingException;
import org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.ObjectMapper;
import org.apache.flink.streaming.connectors.kafka.KafkaSerializationSchema;
import org.apache.kafka.clients.producer.ProducerRecord;

import javax.annotation.Nullable;

public class ObjSerializationSchema implements KafkaSerializationSchema<Tuple2<String, Integer>> {

    private String topic;

    private ObjectMapper mapper;

    public ObjSerializationSchema(String topic) {
        super();
        this.topic = topic;
    }

    @Override
    public ProducerRecord<byte[], byte[]> serialize(Tuple2<String, Integer> stringIntegerTuple2, @Nullable Long timestamp) {
        byte[] b = null;

        // Create the mapper lazily, on first use
        if (mapper == null) {
            mapper = new ObjectMapper();
        }

        try {
            b = mapper.writeValueAsBytes(stringIntegerTuple2);
        } catch (JsonProcessingException e) {
            // Note: this is a very dangerous operation in production.
            // Excessive error printing can seriously hurt performance; adjust it to your production environment.
            e.printStackTrace();
        }

        return new ProducerRecord<byte[], byte[]>(topic, b);
    }
}
```

2. Create the Flink job class:

```java
package com.bolingcavalry.addsink;

import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer;

import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

public class KafkaObjSink {

    public static void main(String[] args) throws Exception {
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Default parallelism is 1
        env.setParallelism(1);

        Properties properties = new Properties();
        // Address of the Kafka broker
        properties.setProperty("bootstrap.servers", "192.168.50.43:9092");

        String topic = "test006";

        FlinkKafkaProducer<Tuple2<String, Integer>> producer = new FlinkKafkaProducer<>(topic,
                new ObjSerializationSchema(topic),
                properties,
                FlinkKafkaProducer.Semantic.EXACTLY_ONCE);

        // Build a list of Tuple2 elements
        List<Tuple2<String, Integer>> list = new ArrayList<>();
        list.add(new Tuple2<>("aaa", 1));
        list.add(new Tuple2<>("bbb", 1));
        list.add(new Tuple2<>("ccc", 1));
        list.add(new Tuple2<>("ddd", 1));
        list.add(new Tuple2<>("eee", 1));
        list.add(new Tuple2<>("fff", 1));
        list.add(new Tuple2<>("aaa", 1));

        // Count the occurrences of each word and send the results to Kafka
        env.fromCollection(list)
                .keyBy(0)
                .sum(1)
                .addSink(producer)
                .setParallelism(4);

        env.execute("sink demo : kafka obj");
    }
}
```

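3. Build the project as for the previous job, submit the jar to Flink, and specify com.bolingcavalry.addsink.KafkaObjSink as the entry class.

4. The console that is consuming Kafka messages prints the records it receives.

5. The job's execution status can be seen on the Flink web page.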
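To make clear what keyBy(0).sum(1) hands to the sink, the rolling aggregation can be imitated in plain Java without Flink. The sketch below is only an illustration under that simplification: the class RollingSumSketch and the method rollingCounts are hypothetical names, and the "(word,count)" strings are a display format, not the JSON bytes that ObjSerializationSchema writes. A keyed rolling sum emits one updated record per input element, so seven inputs give seven outputs and the second "aaa" carries a count of 2.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class RollingSumSketch {

    /** Returns one "(word,count)" entry per input word, imitating a keyed rolling sum. */
    static List<String> rollingCounts(List<String> words) {
        Map<String, Integer> totals = new HashMap<>();
        List<String> emitted = new ArrayList<>();
        for (String word : words) {
            // Add 1 to the running total of this key and emit the updated total
            int updated = totals.merge(word, 1, Integer::sum);
            emitted.add("(" + word + "," + updated + ")");
        }
        return emitted;
    }

    public static void main(String[] args) {
        List<String> words = Arrays.asList("aaa", "bbb", "ccc", "ddd", "eee", "fff", "aaa");
        rollingCounts(words).forEach(System.out::println);
    }
}
```

In the real job the sink runs with parallelism 4 and the topic has four partitions, so the order in which a consumer sees these records may differ from the order printed here.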

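This completes the walkthrough of having Flink send its computation results out as Kafka messages. I hope it serves as a useful reference. In the following chapters we will continue to explore the sinks that Flink provides officially.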
