
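Installation environment: CentOS

jdk-7-linux-x64.tar.gz

hadoop-2.0.4-alpha.tar.gz

Installation directory: /opt/cloud

1. Install the JDK first:

tar -zvxf jdk-7-linux-x64.tar.gz, then place the extracted JDK at /opt/cloud/jdk. Setting the JDK environment variables is optional.

2. Extract hadoop-2.0.4-alpha.tar.gz

tar -zvxf hadoop-2.0.4-alpha.tar.gz, then place the extracted Hadoop at /opt/cloud/hadoop, either by renaming the directory or by creating a symbolic link.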
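Steps 1 and 2 both unpack an archive and then move the result to a fixed path. A minimal sketch of doing that in one go follows; `extract_to` is a hypothetical helper name, and it assumes GNU tar and that each archive contains a single top-level directory.

```shell
# Sketch: unpack a .tar.gz so its contents land directly in a chosen directory.
# extract_to is a hypothetical helper, not part of the JDK or Hadoop.
extract_to() {
    local archive="$1" dest="$2"
    mkdir -p "$dest" || return 1
    # --strip-components=1 drops the archive's top-level directory, so the
    # files end up in $dest itself rather than in $dest/<versioned-name>.
    tar -zxf "$archive" -C "$dest" --strip-components=1
}
```

With this in place, `extract_to jdk-7-linux-x64.tar.gz /opt/cloud/jdk` and `extract_to hadoop-2.0.4-alpha.tar.gz /opt/cloud/hadoop` would produce the layout used in the rest of this post.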

3. Configure Hadoop

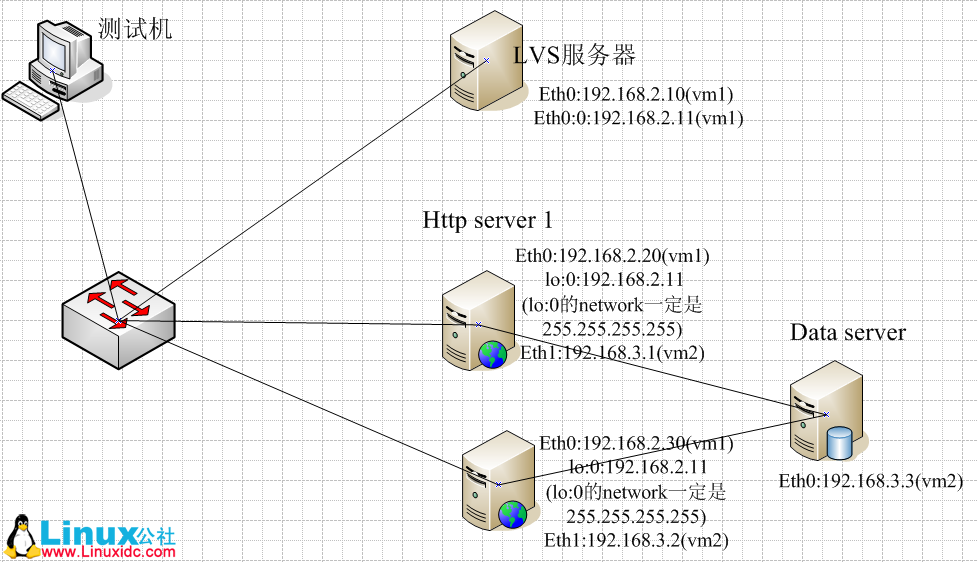

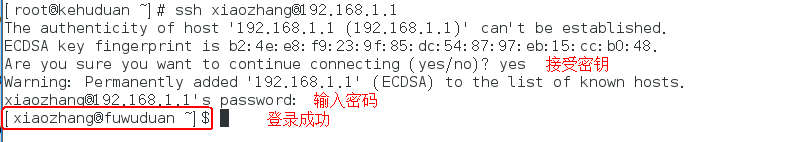

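- Passwordless SSH login: generate a key pair with ssh-keygen -t rsa and append the public key to ~/.ssh/authorized_keys, then test with ssh localhost. If you get a shell without being asked for a password, it works.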
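The SSH step can be sketched as a small function. The function name and its optional directory argument are assumptions made for illustration (the argument only exists so it can be tried in a scratch directory); the `ssh-keygen` options used are the standard OpenSSH ones.

```shell
# Sketch: set up key-based SSH login to localhost for the current user.
# setup_passwordless_ssh is a hypothetical helper; the optional first
# argument overrides the default ~/.ssh directory.
setup_passwordless_ssh() {
    local ssh_dir="${1:-$HOME/.ssh}"
    local key="$ssh_dir/id_rsa"

    mkdir -p "$ssh_dir" || return 1
    chmod 700 "$ssh_dir" || return 1

    # Generate an RSA key pair with an empty passphrase, unless one exists.
    if [ ! -f "$key" ]; then
        ssh-keygen -q -t rsa -N '' -f "$key" || return 1
    fi

    # Authorize the public key once; sshd only accepts keys listed here.
    touch "$ssh_dir/authorized_keys"
    if ! grep -qxF -e "$(cat "$key.pub")" "$ssh_dir/authorized_keys"; then
        cat "$key.pub" >> "$ssh_dir/authorized_keys"
    fi
    chmod 600 "$ssh_dir/authorized_keys"
}
```

After running `setup_passwordless_ssh`, `ssh localhost` should log in without a password prompt, provided sshd is running and allows public-key authentication.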

- Hadoop environment variables

export HADOOP_PREFIX=/opt/cloud/hadoop

export PATH=$PATH:$HADOOP_PREFIX/bin:$HADOOP_PREFIX/sbin

export HADOOP_MAPRED_HOME=${HADOOP_PREFIX}

export HADOOP_COMMON_HOME=${HADOOP_PREFIX}

export HADOOP_HDFS_HOME=${HADOOP_PREFIX}

export YARN_HOME=${HADOOP_PREFIX}
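Exports typed into a shell are lost when the session ends. One way to keep them, sketched below, is to write them to a file that login shells source. `write_hadoop_env` is a hypothetical helper, and using `/etc/profile.d/hadoop.sh` as the target is an assumption about the system, not something this setup requires.

```shell
# Sketch: write the Hadoop exports above into a file that shells can source.
# write_hadoop_env is a hypothetical helper name.
write_hadoop_env() {
    local target="$1"
    # The quoted 'EOF' keeps $PATH and ${HADOOP_PREFIX} literal in the file,
    # so they are expanded when the file is sourced, not when it is written.
    cat > "$target" <<'EOF'
export HADOOP_PREFIX=/opt/cloud/hadoop
export PATH=$PATH:$HADOOP_PREFIX/bin:$HADOOP_PREFIX/sbin
export HADOOP_MAPRED_HOME=${HADOOP_PREFIX}
export HADOOP_COMMON_HOME=${HADOOP_PREFIX}
export HADOOP_HDFS_HOME=${HADOOP_PREFIX}
export YARN_HOME=${HADOOP_PREFIX}
EOF
}
```

For example, `write_hadoop_env /etc/profile.d/hadoop.sh` as root, followed by `. /etc/profile.d/hadoop.sh` in the current shell.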

- Edit the Hadoop configuration files:

hadoop-env.sh:

#vim /opt/cloud/hadoop/etc/hadoop/hadoop-env.sh

Change the JAVA_HOME line to: export JAVA_HOME=/opt/cloud/jdk
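If you would rather not edit the file by hand, the same change can be scripted. This is only a sketch: `set_java_home` is a hypothetical helper, and it assumes the JDK path contains no `|` character, since that is used as the sed delimiter.

```shell
# Sketch: point JAVA_HOME in an env script at a given JDK directory.
# set_java_home is a hypothetical helper name.
set_java_home() {
    local env_file="$1" jdk_dir="$2" tmp
    tmp="$(mktemp)" || return 1
    if grep -qE '^[[:space:]]*(export[[:space:]]+)?JAVA_HOME=' "$env_file"; then
        # Rewrite every existing (uncommented) assignment.
        sed -E "s|^[[:space:]]*(export[[:space:]]+)?JAVA_HOME=.*|export JAVA_HOME=$jdk_dir|" \
            "$env_file" > "$tmp" || return 1
    else
        # No assignment yet: keep the file contents and append one.
        { cat "$env_file"; echo "export JAVA_HOME=$jdk_dir"; } > "$tmp" || return 1
    fi
    # Copy the contents back so the original file keeps its permissions.
    cat "$tmp" > "$env_file" && rm -f "$tmp"
}
```

Usage for this setup would be `set_java_home /opt/cloud/hadoop/etc/hadoop/hadoop-env.sh /opt/cloud/jdk`.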

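- Edit the following files and add the properties below inside each file's <configuration> element. The files are located in hadoop/etc/hadoop.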

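---------------- core-site.xml

    <property>
      <name>fs.default.name</name>
      <value>hdfs://localhost:8020</value>
      <description>The name of the default file system. Either the literal string "local" or a host:port for NDFS.</description>
      <final>true</final>
    </property>

---------------- yarn-site.xml

    <property>
      <name>yarn.nodemanager.aux-services</name>
      <value>mapreduce.shuffle</value>
    </property>
    <property>
      <name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>
      <value>org.apache.hadoop.mapred.ShuffleHandler</value>
    </property>

---------------- mapred-site.xml

    <property>
      <name>mapreduce.framework.name</name>
      <value>yarn</value>
    </property>
    <property>
      <name>mapred.system.dir</name>
      <value>file:/opt/cloud/hadoop_space/mapred/system</value>
      <final>true</final>
    </property>
    <property>
      <name>mapred.local.dir</name>
      <value>file:/opt/cloud/hadoop_space/mapred/local</value>
      <final>true</final>
    </property>

---------------- hdfs-site.xml

    <property>
      <name>dfs.namenode.name.dir</name>
      <value>file:/opt/cloud/hadoop_space/dfs/name</value>
      <description>Determines where on the local filesystem the DFS name node should store the name table. If this is a comma-delimited list of directories then the name table is replicated in all of the directories, for redundancy.</description>
      <final>true</final>
    </property>
    <property>
      <name>dfs.datanode.data.dir</name>
      <value>file:/opt/cloud/hadoop_space/dfs/data</value>
      <description>Determines where on the local filesystem a DFS data node should store its blocks. If this is a comma-delimited list of directories, then data will be stored in all named directories, typically on different devices. Directories that do not exist are ignored.</description>
      <final>true</final>
    </property>
    <property>
      <name>dfs.replication</name>
      <value>1</value>
    </property>
    <property>
      <name>dfs.permissions</name>
      <value>false</value>
    </property>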
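The configuration points at four local directories under /opt/cloud/hadoop_space, and the description of dfs.datanode.data.dir notes that directories that do not exist are ignored. Creating them up front is a cheap precaution and surfaces permission problems early. A minimal sketch, with `create_hadoop_dirs` as a hypothetical helper name:

```shell
# Sketch: create the local directories referenced by the configuration.
# create_hadoop_dirs is a hypothetical helper; the base directory defaults
# to the path used in this post and can be overridden by the first argument.
create_hadoop_dirs() {
    local base="${1:-/opt/cloud/hadoop_space}"
    mkdir -p "$base/dfs/name" "$base/dfs/data" \
             "$base/mapred/system" "$base/mapred/local"
}
```

Run `create_hadoop_dirs` as the user that will start the Hadoop daemons, so that the directories are writable by them.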

4. Test

Once the configuration above is in place, format the NameNode before starting HDFS:

# hdfs namenode -format

After the format completes successfully, start the NameNode and DataNode with the following commands:

# hadoop-daemon.sh start namenode

# hadoop-daemon.sh start datanode
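Before opening the web page, it can help to confirm that both daemons are actually running. The JDK's `jps` tool lists running JVMs as "<pid> <main class>" lines. The sketch below reads such a listing on standard input and prints the daemons that are missing; `missing_daemons` is a hypothetical helper name.

```shell
# Sketch: report which of NameNode/DataNode are absent from a jps listing.
# missing_daemons is a hypothetical helper; it reads jps output on stdin.
missing_daemons() {
    local listing daemon
    listing="$(cat)"
    for daemon in NameNode DataNode; do
        # Match the whole class name so SecondaryNameNode does not count.
        if ! printf '%s\n' "$listing" | grep -qE "^[0-9]+ ${daemon}\$"; then
            echo "$daemon"
        fi
    done
}
```

Usage: `jps | missing_daemons`. Empty output means both daemons were found; otherwise check the logs of the daemon that is listed.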

Then open http://mycentos:50070/dfshealth.jsp in a browser (mycentos here is the host name of the machine running the NameNode).

