Author: 李小白无悔 | Source: Internet | 2023-09-06 10:00

1. Prepare the data

Prepare the table dm_action_log with the following data:

| bdp_day | action | uv |
| --- | --- | --- |
| 20190101 | click | 11173 |
| 20190101 | exit | 11109 |
| 20190101 | install | 11139 |
| 20190101 | launch | 11083 |
| 20190101 | login | 11220 |
| 20190101 | page_enter_h5 | 11016 |
| 20190101 | page_enter_native | 11076 |
| 20190101 | page_exit | 11120 |
| 20190101 | register | 11064 |
| 20190102 | click | 11168 |

(Only the first ten rows are shown.)

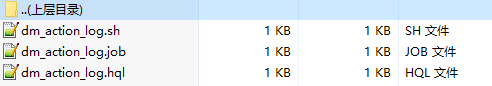

2. Write the Azkaban scheduling job

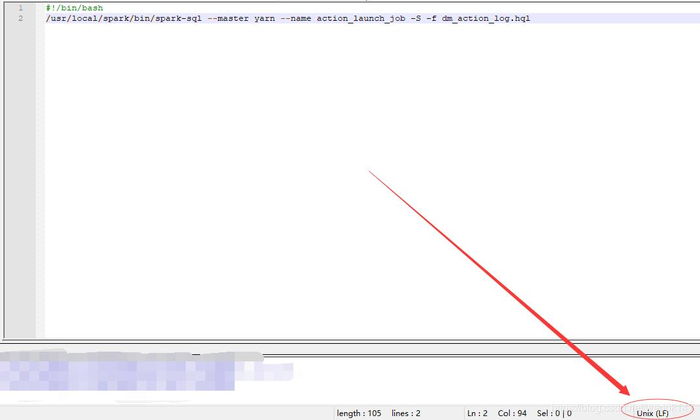

dm_action_log.sh

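```bash
#!/bin/bash
# Run the HQL file with spark-sql on YARN (-S: silent mode, -f: execute the statements in the file)
/usr/local/spark/bin/spark-sql --master yarn --name action_launch_job -S -f dm_action_log.hql
```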

dm_action_log.job

```properties
# Azkaban command-type job: runs the shell script above
type=command
command=sh dm_action_log.sh
```

dm_action_log.hql

```sql
-- replace databasename with your own database
use databasename;

create table if not exists databasename.dm_action_log_bak(
  bdp_day string comment 'date',
  action  string comment 'action',
  uv      int    comment 'uv'
)
stored as parquet;

-- UV = unique visitors, so count distinct users rather than raw log rows
insert overwrite table databasename.dm_action_log_bak
select bdp_day, action, count(distinct userid) uv
from databasename.dw_action_log
group by bdp_day, action;
```

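Note: these files must use Unix (LF) line endings. With Windows (CRLF) endings the script will not run correctly on Linux. In Notepad++, for example, use Edit > EOL Conversion > Unix (LF).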
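The same conversion can also be done on the command line. The following is a minimal sketch that assumes a Linux shell. The function name `to_unix_eol` is hypothetical. It deletes every carriage return, which is only safe because these text files contain no intentional `\r` characters.

```bash
#!/usr/bin/env bash
# Hypothetical helper: convert Windows (CRLF) line endings to Unix (LF) in place.
# Assumption: the files contain no carriage returns that should be kept.
to_unix_eol() {
  local f
  for f in "$@"; do
    # Delete every \r into a temp file, then copy it back over the original
    # (writing through the existing file keeps its permissions).
    tr -d '\r' < "$f" > "$f.tmp" && cat "$f.tmp" > "$f" && rm -f "$f.tmp"
  done
}
```

For example, run `to_unix_eol dm_action_log.sh dm_action_log.job dm_action_log.hql`. If the `dos2unix` utility is installed, it does the same job.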

3. Configure the spark-sql runtime environment

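3.1 Edit yarn-site.xml and add the following two properties inside the `<configuration>` element. They relax YARN's virtual-memory limit so that Spark containers are not killed for exceeding it.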

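```xml
<property>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>
<property>
  <name>yarn.nodemanager.vmem-pmem-ratio</name>
  <value>4</value>
  <description>Ratio between virtual memory to physical memory when setting memory limits for containers</description>
</property>
```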

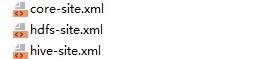
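You can confirm on each node that the values are actually present. The sketch below is a rough check, not an XML parser. It assumes the usual layout where `<name>` and `<value>` each sit on their own line inside `<property>`. The helper name `get_conf_value` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: print the <value> of a property from a Hadoop-style XML config.
# Assumption: <name> and <value> are on separate lines, as in the snippet above.
get_conf_value() {
  local file=$1 key=$2
  awk -v key="$key" '
    # Strip the tags from a <name> line and remember whether it is the wanted key
    /<name>/ { gsub(/.*<name>|<\/name>.*/, ""); found = ($0 == key); next }
    # Print the first <value> that follows the matching <name>, then stop
    found && /<value>/ { gsub(/.*<value>|<\/value>.*/, ""); print; exit }
  ' "$file"
}
```

For example, `get_conf_value "$HADOOP_HOME/etc/hadoop/yarn-site.xml" yarn.nodemanager.vmem-check-enabled` should print `false`. NodeManagers read yarn-site.xml only at startup, so restart YARN after changing it.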

3.2 Distribute the configuration files

- Copy the modified yarn-site.xml to the other nodes of the cluster.

- Copy core-site.xml and hdfs-site.xml from $HADOOP_HOME/etc/hadoop to $SPARK_HOME/conf/ (on a Spark cluster, copy them to every node).

- Likewise, copy hive-site.xml to $SPARK_HOME/conf/ on every Spark node.
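The copying above can be scripted. This sketch rests on several hypothetical assumptions:

- `HADOOP_HOME`, `SPARK_HOME` and `HIVE_HOME` are set and identical on every node.
- hive-site.xml lives in `$HIVE_HOME/conf`.
- Passwordless SSH is configured.
- The same host list serves both Hadoop and Spark.
- Host names such as `node02` are placeholders for your own machines.

With `DRY_RUN=1` the script only prints the commands it would run.

```bash
#!/usr/bin/env bash
# Hypothetical sketch: push the config files from this node to the other nodes.
# Assumes identical install paths everywhere and passwordless SSH.
# Set DRY_RUN=1 to print the commands instead of executing them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

distribute_conf() {
  local host
  # Give this node's Spark the Hadoop and Hive client configs
  run cp "$HADOOP_HOME/etc/hadoop/core-site.xml" "$HADOOP_HOME/etc/hadoop/hdfs-site.xml" \
         "$HIVE_HOME/conf/hive-site.xml" "$SPARK_HOME/conf/"
  for host in "$@"; do
    # Updated YARN config for the NodeManager on each host
    run scp "$HADOOP_HOME/etc/hadoop/yarn-site.xml" "$host:$HADOOP_HOME/etc/hadoop/"
    # The same three client configs for Spark on each host
    run scp "$SPARK_HOME/conf/core-site.xml" "$SPARK_HOME/conf/hdfs-site.xml" \
            "$SPARK_HOME/conf/hive-site.xml" "$host:$SPARK_HOME/conf/"
  done
}
```

For example, preview with `DRY_RUN=1 distribute_conf node02 node03`, then run `distribute_conf node02 node03` for real.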

4. Start the Hadoop and Spark clusters

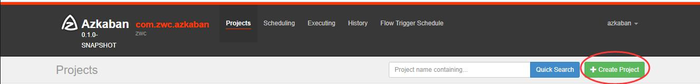

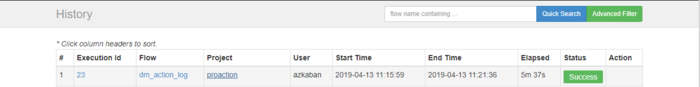
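The start-up order can be written down as a small script. This sketch assumes the standard tarball layouts with `HADOOP_HOME` and `SPARK_HOME` set. The name `start_clusters` is hypothetical. Spark's script is called by its full path because Hadoop also ships a (deprecated) `sbin/start-all.sh`. The job submits with `--master yarn`, so it does not strictly need the Spark standalone Master and Workers. The last line only mirrors this step of the tutorial.

```bash
#!/usr/bin/env bash
# Hypothetical sketch of step 4, assuming standard Hadoop/Spark directory layouts.
# Set DRY_RUN=1 to print the commands instead of executing them.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

start_clusters() {
  run "$HADOOP_HOME/sbin/start-dfs.sh"   # HDFS: NameNode, DataNodes, SecondaryNameNode
  run "$HADOOP_HOME/sbin/start-yarn.sh"  # YARN: ResourceManager, NodeManagers
  run "$SPARK_HOME/sbin/start-all.sh"    # Spark standalone: Master and Workers
}
```

Afterwards, `jps` on each node should list daemons such as NameNode, DataNode, ResourceManager, NodeManager, Master and Worker, depending on the node's role.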

5. Start Azkaban and create a project

6. Upload the zip package and run the job

Package dm_action_log.sh, dm_action_log.job and dm_action_log.hql into a single zip file.
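Here is a sketch of the packaging step. It assumes the Info-ZIP `zip` tool is installed. The name `package_job` and the archive name `dm_action_log.zip` are only examples. The `-j` flag stores the files without their directory prefixes, so all three end up at the top level of the archive.

```bash
#!/usr/bin/env bash
# Hypothetical helper: bundle the job files into one zip for upload to Azkaban.
# Assumes the Info-ZIP `zip` command is available.
package_job() {
  local out=$1; shift
  rm -f "$out"           # start fresh so no stale entries remain in the archive
  zip -q -j "$out" "$@"  # -j: store only file names, not directory paths
}
```

For example, run `package_job dm_action_log.zip dm_action_log.sh dm_action_log.job dm_action_log.hql`, then upload the resulting zip on the project page in the Azkaban web UI.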

7. Wait for the execution to succeed

What if an ERROR occurs?

The following two websites are recommended:

Website 1

Website 2