Preface: this article, compiled by the editors of 编程笔记, covers Hadoop deployment, focusing on Hadoop itself. We hope you find it a useful reference.

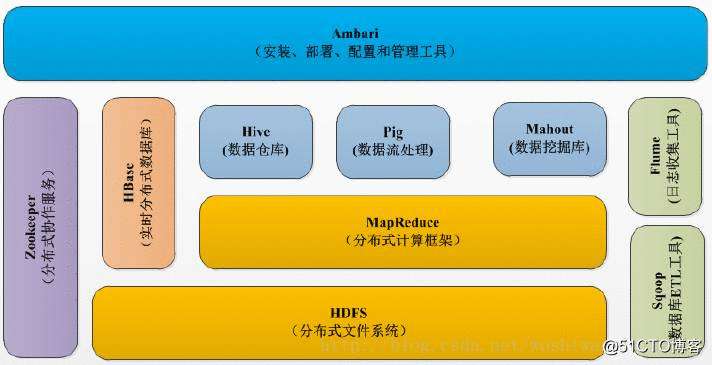

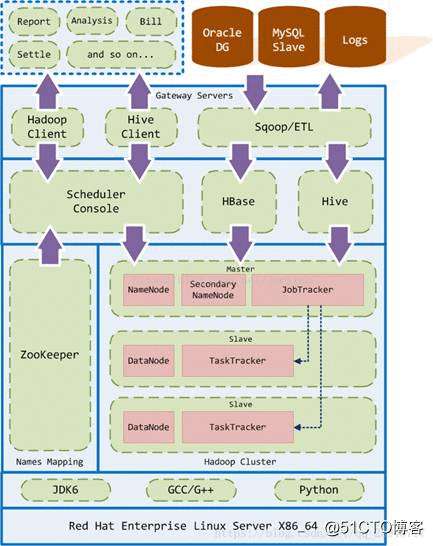

The core of the Hadoop framework is HDFS and MapReduce: HDFS provides storage for massive data sets, and MapReduce provides computation over them.

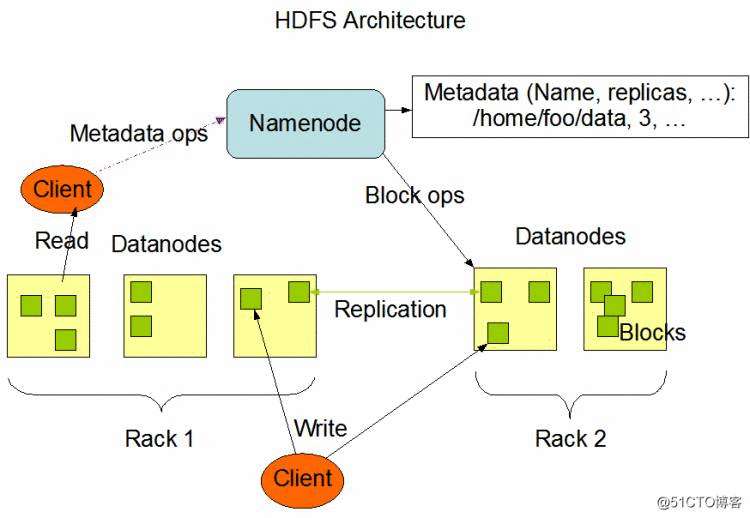

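Hadoop implements a distributed file system, the Hadoop Distributed File System (HDFS).

HDFS is highly fault-tolerant and is designed to run on low-cost hardware. It provides high-throughput access to application data and suits applications with large data sets. HDFS relaxes some POSIX requirements so that file system data can be read through streaming access.

HDFS uses a master/slave architecture: an HDFS cluster consists of one NameNode and a number of DataNodes. The NameNode is the master server; it manages the file system namespace and client access to files. The DataNodes manage the stored data. HDFS exposes data in the form of files.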

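Internally, a file is split into data blocks, and these blocks are stored on a set of DataNodes. The NameNode executes namespace operations such as opening, closing, and renaming files and directories, and it maintains the mapping from blocks to DataNodes. The DataNodes serve read and write requests from file system clients and, under the NameNode's direction, create, delete, and replicate data blocks. The NameNode manages all HDFS metadata; user data never passes through the NameNode.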
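The block layout described above can be made concrete with a little arithmetic. The sketch below assumes the Hadoop 2.x defaults of a 128 MiB block size (`dfs.blocksize`) and a replication factor of 3 (`dfs.replication`); the article does not state which values this cluster uses, and the 300 MiB file is hypothetical.

```shell
#!/bin/sh
# Sketch: how many HDFS blocks a file of a given size occupies.
# ASSUMPTION: default dfs.blocksize (128 MiB) and dfs.replication (3);
# the cluster in this article may be configured differently.
BLOCK_SIZE=$((128 * 1024 * 1024))
REPLICATION=3

# blocks_for SIZE_IN_BYTES -> number of blocks (ceiling division)
blocks_for() {
    echo $(( ($1 + BLOCK_SIZE - 1) / BLOCK_SIZE ))
}

# replicas_for SIZE_IN_BYTES -> total block replicas stored on DataNodes
replicas_for() {
    echo $(( $(blocks_for "$1") * REPLICATION ))
}

size=$((300 * 1024 * 1024))   # a hypothetical 300 MiB file
echo "blocks:   $(blocks_for "$size")"
echo "replicas: $(replicas_for "$size")"
```

Under these assumptions the 300 MiB file occupies 3 blocks and 9 block replicas across the DataNodes, while the NameNode only records which DataNodes hold each block.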

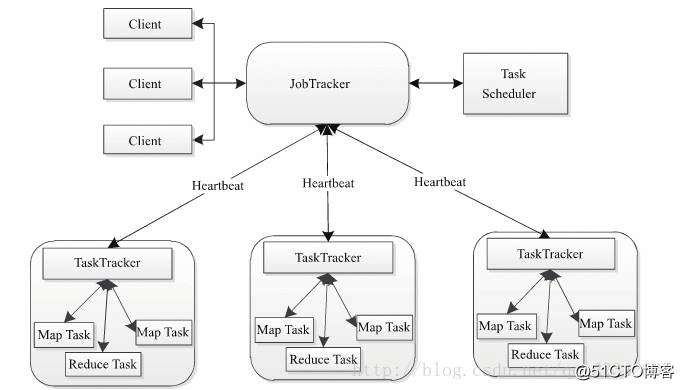

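Hadoop MapReduce is an open-source clone of Google MapReduce.

MapReduce is a computation model for processing large volumes of data. Map applies a specified operation to each independent element of the data set and produces intermediate results as key-value pairs. Reduce then combines all the values that share the same key in the intermediate results to produce the final result. This division of work makes MapReduce well suited to processing data in a distributed, parallel environment made up of many machines.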
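The map, shuffle, and reduce steps can be imitated on a single machine with an ordinary shell pipeline. This is only an analogy for the model, not how Hadoop runs a job; the function names `map_phase`, `shuffle_phase`, and `reduce_phase` are made up for the illustration.

```shell
#!/bin/sh
# Sketch: word count as map -> shuffle -> reduce in a local pipeline.

# map: emit one "word<TAB>1" pair per whitespace-separated token
map_phase() {
    tr -s '[:space:]' '\n' | awk 'NF { print $0 "\t" 1 }'
}

# shuffle: sort so that identical keys end up on adjacent lines
shuffle_phase() {
    LC_ALL=C sort
}

# reduce: sum the values of each run of identical keys
reduce_phase() {
    awk -F '\t' '
        NR > 1 && $1 != key { print key "\t" sum; sum = 0 }
        { key = $1; sum += $2 }
        END { if (NR > 0) print key "\t" sum }
    '
}

printf 'the network the system\nthe boot\n' |
    map_phase | shuffle_phase | reduce_phase
```

The pipeline prints one line per distinct word with its count, which is the same shape as the `part-r-00000` output of the wordcount example job.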

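Hadoop MapReduce uses a master/slave (M/S) architecture, as shown in the figure. In Hadoop 1.x its main components are the Client, JobTracker, TaskTracker, and Task. In Hadoop 2.x, the version deployed in this article, YARN handles resource management, and the ResourceManager and NodeManager daemons take over the roles of the JobTracker and TaskTracker.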

# Download the installation package

wget https://archive.apache.org/dist/hadoop/common/hadoop-2.7.3/hadoop-2.7.3.tar.gz

# Extract the installation package

tar xf hadoop-2.7.3.tar.gz && mv hadoop-2.7.3 /usr/local/hadoop

# Create the directories

mkdir -p /home/hadoop/{name,data,log,journal}

Edit the file /etc/profile.d/hadoop.sh.

# HADOOP ENV

export HADOOP_HOME=/usr/local/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Apply the Hadoop environment variables.

source /etc/profile.d/hadoop.sh
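After sourcing the file it is worth confirming that both Hadoop directories really ended up on `PATH`. The following is a small sketch of such a check; the helper name `on_path` is made up for the illustration, and the two variable assignments simply repeat the values from the profile script so the snippet runs on its own.

```shell
#!/bin/sh
# Sketch: verify that the Hadoop directories are entries of PATH.
HADOOP_HOME=/usr/local/hadoop
PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

# on_path DIR -> succeeds if DIR is an entry of $PATH
on_path() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

for dir in "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin"; do
    if on_path "$dir"; then
        echo "ok: $dir is on PATH"
    else
        echo "missing: $dir is not on PATH"
    fi
done
```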

3. Hadoop Configuration

Edit the file /usr/local/hadoop/etc/hadoop/hadoop-env.sh and change the following settings.

export JAVA_HOME=/usr/local/java

export HADOOP_HOME=/usr/local/hadoop

Edit the file /usr/local/hadoop/etc/hadoop/yarn-env.sh and change the following setting.

export JAVA_HOME=/usr/local/java

Edit the file /usr/local/hadoop/etc/hadoop/slaves and list the DataNode hostnames, one per line.

datanode01

datanode02

datanode03

Edit the file /usr/local/hadoop/etc/hadoop/core-site.xml.

Edit the file /usr/local/hadoop/etc/hadoop/hdfs-site.xml.

Edit the file /usr/local/hadoop/etc/hadoop/mapred-site.xml.

Edit the file /usr/local/hadoop/etc/hadoop/yarn-site.xml.
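To show the general shape of these files, here is a minimal sketch that writes a two-property `core-site.xml` into a scratch directory. It is not the configuration used in this article: the port 9000 and the `/home/hadoop/tmp` directory are assumptions chosen for illustration, and only the host name `namenode01` comes from the job log later in the article. `fs.defaultFS` and `hadoop.tmp.dir` are standard Hadoop property names.

```shell
#!/bin/sh
# Sketch only: writes a minimal core-site.xml into a scratch directory,
# not into /usr/local/hadoop/etc/hadoop.
# ASSUMPTIONS: RPC port 9000 and /home/hadoop/tmp are illustrative values.
conf_dir=$(mktemp -d)

cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- URI that clients use to reach the default file system -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://namenode01:9000</value>
  </property>
  <!-- Base directory for Hadoop's temporary files -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/hadoop/tmp</value>
  </property>
</configuration>
EOF

echo "wrote $conf_dir/core-site.xml"
```

The other three files follow the same `<configuration>` / `<property>` layout, each with its own set of property names.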

cd /usr/local/hadoop/etc/hadoop

scp * datanode01:/usr/local/hadoop/etc/hadoop

scp * datanode02:/usr/local/hadoop/etc/hadoop

scp * datanode03:/usr/local/hadoop/etc/hadoop

chown -R hadoop:hadoop /usr/local/hadoop

chmod 755 /usr/local/hadoop/etc/hadoop
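The three scp commands above can also be derived from the slaves file, so that adding a DataNode only means adding one line there. This is a sketch under assumptions: the function name `sync_conf` and the `DRY_RUN` switch are made up for the illustration, and with `DRY_RUN=1` (the default here) the script only prints the commands it would run.

```shell
#!/bin/sh
# Sketch: copy the configuration directory to every host in a slaves file.
# DRY_RUN=1 only prints the scp commands; set DRY_RUN=0 to run them.
DRY_RUN=${DRY_RUN:-1}
CONF_DIR=/usr/local/hadoop/etc/hadoop

# sync_conf SLAVES_FILE -> one scp per host listed in the file
sync_conf() {
    while IFS= read -r host; do
        # skip blank lines and comment lines
        case "$host" in ''|'#'*) continue ;; esac
        if [ "$DRY_RUN" = 1 ]; then
            echo "scp $CONF_DIR/* $host:$CONF_DIR"
        else
            # </dev/null keeps scp from consuming the loop's input
            scp "$CONF_DIR"/* "$host:$CONF_DIR" </dev/null
        fi
    done < "$1"
}

# Demo with a temporary slaves file holding the three hosts used above.
slaves=$(mktemp)
printf 'datanode01\ndatanode02\ndatanode03\n' > "$slaves"
sync_conf "$slaves"
rm -f "$slaves"
```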

4. Starting Hadoop

hdfs namenode -format

hadoop-daemon.sh start namenode

stop-all.sh

start-all.sh

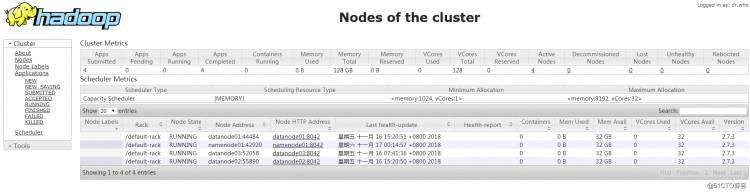

5. Checking Hadoop

jps

17419 NameNode

17780 ResourceManager

18152 Jps

jps

27264 -- process information unavailable

2227 DataNode

1292 QuorumPeerMain

2509 Jps

2334 NodeManager

jps

13940 QuorumPeerMain

18980 DataNode

19093 NodeManager

27292 -- process information unavailable

32526 -- process information unavailable

19743 Jps

jps

19238 DataNode

19350 NodeManager

14215 QuorumPeerMain

27351 -- process information unavailable

20014 Jps
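Reading four jps listings by eye gets tedious on a larger cluster. The sketch below checks jps output for a list of expected daemon names; the function name `check_daemons` is made up for the illustration, and the sample input is the first listing above.

```shell
#!/bin/sh
# check_daemons "NAME1 NAME2 ..." -> reads jps output on stdin, reports each
# expected daemon as running or missing, and fails if any is missing.
check_daemons() {
    jps_output=$(cat)
    rc=0
    for name in $1; do
        # jps prints "<pid> <main class>"; match the second field exactly
        if printf '%s\n' "$jps_output" |
            awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            echo "running: $name"
        else
            echo "MISSING: $name"
            rc=1
        fi
    done
    return $rc
}

# Sample input: the first jps listing shown above.
printf '17419 NameNode\n17780 ResourceManager\n18152 Jps\n' |
    check_daemons "NameNode ResourceManager"
```

On a live host the same function can be fed directly, for example `jps | check_daemons "DataNode NodeManager"` on a DataNode.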

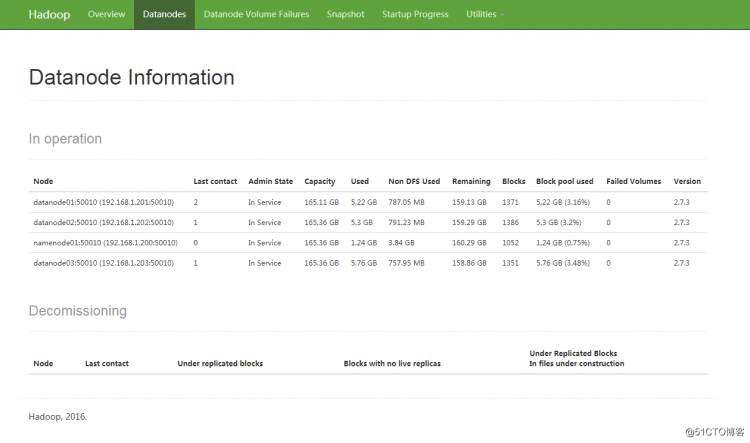

Open http://192.168.1.200:50070/ (the NameNode web UI).

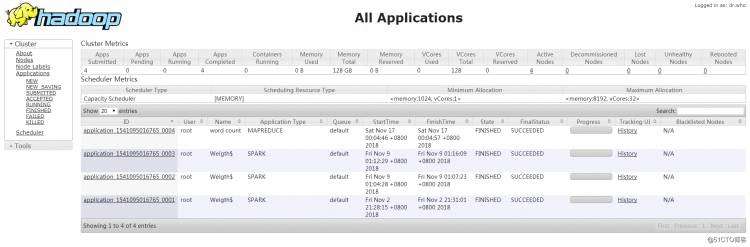

Open http://192.168.1.200:8088/ (the YARN ResourceManager web UI).

hadoop fs -put /root/anaconda-ks.cfg /anaconda-ks.cfg

cd /usr/local/hadoop/share/hadoop/mapreduce/

hadoop jar hadoop-mapreduce-examples-2.7.3.jar wordcount /anaconda-ks.cfg /test

18/11/17 00:04:45 INFO client.RMProxy: Connecting to ResourceManager at namenode01/192.168.1.200:8032

18/11/17 00:04:45 INFO input.FileInputFormat: Total input paths to process : 1

18/11/17 00:04:45 INFO mapreduce.JobSubmitter: number of splits:1

18/11/17 00:04:45 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1541095016765_0004

18/11/17 00:04:46 INFO impl.YarnClientImpl: Submitted application application_1541095016765_0004

18/11/17 00:04:46 INFO mapreduce.Job: The url to track the job: http://namenode01:8088/proxy/application_1541095016765_0004/

18/11/17 00:04:46 INFO mapreduce.Job: Running job: job_1541095016765_0004

18/11/17 00:04:51 INFO mapreduce.Job: Job job_1541095016765_0004 running in uber mode : false

18/11/17 00:04:51 INFO mapreduce.Job: map 0% reduce 0%

18/11/17 00:04:55 INFO mapreduce.Job: map 100% reduce 0%

18/11/17 00:04:59 INFO mapreduce.Job: map 100% reduce 100%

18/11/17 00:04:59 INFO mapreduce.Job: Job job_1541095016765_0004 completed successfully

18/11/17 00:04:59 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=1222

FILE: Number of bytes written=241621

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=1023

HDFS: Number of bytes written=941

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=1758

Total time spent by all reduces in occupied slots (ms)=2125

Total time spent by all map tasks (ms)=1758

Total time spent by all reduce tasks (ms)=2125

Total vcore-milliseconds taken by all map tasks=1758

Total vcore-milliseconds taken by all reduce tasks=2125

Total megabyte-milliseconds taken by all map tasks=1800192

Total megabyte-milliseconds taken by all reduce tasks=2176000

Map-Reduce Framework

Map input records=38

Map output records=90

Map output bytes=1274

Map output materialized bytes=1222

Input split bytes=101

Combine input records=90

Combine output records=69

Reduce input groups=69

Reduce shuffle bytes=1222

Reduce input records=69

Reduce output records=69

Spilled Records=138

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=99

CPU time spent (ms)=970

Physical memory (bytes) snapshot=473649152

Virtual memory (bytes) snapshot=4921606144

Total committed heap usage (bytes)=441450496

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=922

File Output Format Counters

Bytes Written=941
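Some of the counters above can be cross-checked with simple arithmetic. The sketch below copies four values from the log and uses the fact that megabyte-milliseconds is the container memory in MB multiplied by the task time in ms; the variable names are made up for the illustration.

```shell
#!/bin/sh
# Values copied from the job counters above.
map_ms=1758;    map_mb_ms=1800192
reduce_ms=2125; reduce_mb_ms=2176000

# megabyte-milliseconds = container memory (MB) x task time (ms),
# so dividing recovers the memory allocated to each task's container.
echo "map container MB:    $((map_mb_ms / map_ms))"
echo "reduce container MB: $((reduce_mb_ms / reduce_ms))"

# The combiner merged duplicate words on the map side: 90 records in, 69 out.
combine_in=90; combine_out=69
echo "records removed by combiner: $((combine_in - combine_out))"
```

Both divisions give 1024, which suggests each task ran in a 1024 MB container, and the combiner removed 21 duplicate records before the shuffle, leaving the 69 distinct words that appear in the final output.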

hadoop fs -cat /test/part-r-00000

# 11

#version=DEVEL 1

$6$kRQ2y1nt/B6c6ETs$ITy0O/E9P5p0ePWlHJ7fRTqVrqGEQf7ZGi5IX2pCA7l25IdEThUNjxelq6wcD9SlSa1cGcqlJy2jjiV9/lMjg/ 1

%addon 1

%end 2

%packages 1

--all 1

--boot-drive=sda 1

--bootproto=dhcp 1

--device=enp1s0 1

--disable 1

--drives=sda 1

--enable 1

--enableshadow 1

--hostname=localhost.localdomain 1

--initlabel 1

--ipv6=auto 1

--isUtc 1

--iscrypted 1

--location=mbr 1

--onboot=off 1

--only-use=sda 1

--passalgo=sha512 1

--reserve-mb='auto' 1

--type=lvm 1

--vckeymap=cn 1

--xlayouts='cn' 1

@^minimal 1

@core 1

Agent 1

Asia/Shanghai 1

CDROM 1

Keyboard 1

Network 1

Partition 1

Root 1

Run 1

Setup 1

System 4

Use 2

auth 1

authorization 1

autopart 1

boot 1

bootloader 2

cdrom 1

clearing 1

clearpart 1

com_redhat_kdump 1

configuration 1

first 1

firstboot 1

graphical 2

ignoredisk 1

information 3

install 1

installation 1

keyboard 1

lang 1

language 1

layouts 1

media 1

network 2

on 1

password 1

rootpw 1

the 1

timezone 2

zh_CN.UTF-8 1

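7. Using Hadoop

Show help for the fs commands: hadoop fs -help

Show HDFS disk usage: hadoop fs -df -h

Create a directory: hadoop fs -mkdir

Upload a local file: hadoop fs -put

List files: hadoop fs -ls

Show the contents of a file: hadoop fs -cat

Copy a file: hadoop fs -cp

Download an HDFS file to the local machine: hadoop fs -get

Move a file: hadoop fs -mv

Delete a file: hadoop fs -rm -r -f

Delete a directory: hadoop fs -rm -r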
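The commands listed above are usually combined into a short round trip: create a directory, upload, inspect, download, clean up. The sketch below strings them together; the paths `/demo` and `/tmp/hosts.copy`, the helper `run`, and the `DRY_RUN` switch are all made up for the illustration. With `DRY_RUN=1` (the default here) it only prints the commands, so it can be read and tried without a cluster.

```shell
#!/bin/sh
# Sketch: a typical HDFS round trip built from the commands listed above.
# DRY_RUN=1 prints each command instead of running it; set DRY_RUN=0 on a
# host where the hadoop command is available.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

hdfs_round_trip() {
    run hadoop fs -mkdir -p /demo                    # create a directory
    run hadoop fs -put /etc/hosts /demo/hosts        # upload a local file
    run hadoop fs -ls /demo                          # list the directory
    run hadoop fs -cat /demo/hosts                   # print the file
    run hadoop fs -get /demo/hosts /tmp/hosts.copy   # download it again
    run hadoop fs -rm -r /demo                       # remove the directory
}

hdfs_round_trip
```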
