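LVM, as I understand it, is a tool that binds a bunch of partitions or disks together into one big piece, a bit like rolling out one large flatbread, and then slices it up again.

I created a new virtual machine, attached a 5G disk to it, split the disk into 5 partitions, and mounted them in the VM. These steps are simple:

fdisk, mkdir, mount... I won't go over them. VBird (鸟哥) doesn't go over them either, so neither will I. On to the important part.

This is VBird's official explanation. See, isn't it just what I said: roll out a flatbread, then cut it? Anyone who has ever bought flatbread should get it.

LVM concepts

Right, that's the concept. VBird then explains how the adjustable sizing is implemented; another screenshot.

Do you see how these pieces relate? If not, no problem, let me make you a flatbread.

First, turn each disk or partition into a format LVM can recognize. That is the PV (physical volume).

Then use a VG (volume group) to join these PVs into one big flatbread.

Finally, cut the flatbread: that is the LV (logical volume). What is the most basic building block of an LV? The PE (physical extent). The PE is the smallest unit you can cut.

After my flatbread, look at the figure above again; it may make more sense.

In other words, an LV can only grow into the part of the VG that has not already been cut into LVs. Anyone who knows a little about partitioning should follow. So if you run out of space: first create a new PV, then add it to the VG, then cut more flatbread by extending the LV.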
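To make that order concrete, here is a minimal dry-run sketch. It only prints the three commands in sequence and runs nothing. The function name `extend_plan` is made up for illustration, and the device, VG and LV names are borrowed from the commands used later in this post.

```shell
#!/bin/sh
# Dry-run sketch: print (do not execute) the commands for growing an LV
# once the VG has no free space left.
# extend_plan is a hypothetical helper, not an LVM tool.
extend_plan() {
    new_part=$1   # new partition to turn into a PV, e.g. /dev/sdb4
    vg=$2         # volume group to enlarge
    lv=$3         # logical volume to grow
    add=$4        # amount to add in lvresize syntax, e.g. +500M
    echo "pvcreate $new_part"              # step 1: make the PV
    echo "vgextend $vg $new_part"          # step 2: add it to the VG
    echo "lvresize -L $add /dev/$vg/$lv"   # step 3: cut more for the LV
}

extend_plan /dev/sdb4 lsqvg lsqlv +500M
```

After the LV grows, the filesystem on it still has to be grown separately (xfs_growfs for XFS), which the resize section further down runs into.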

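According to VBird, LVM writes to disk in one of two modes:

Linear mode: fill one disk (PV) completely before writing to the next.

Striped mode: a file is split into two parts and written alternately across two disks. Note: at first I did not see why this mode was designed; the usual rationale is performance, since both disks can read and write in parallel.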

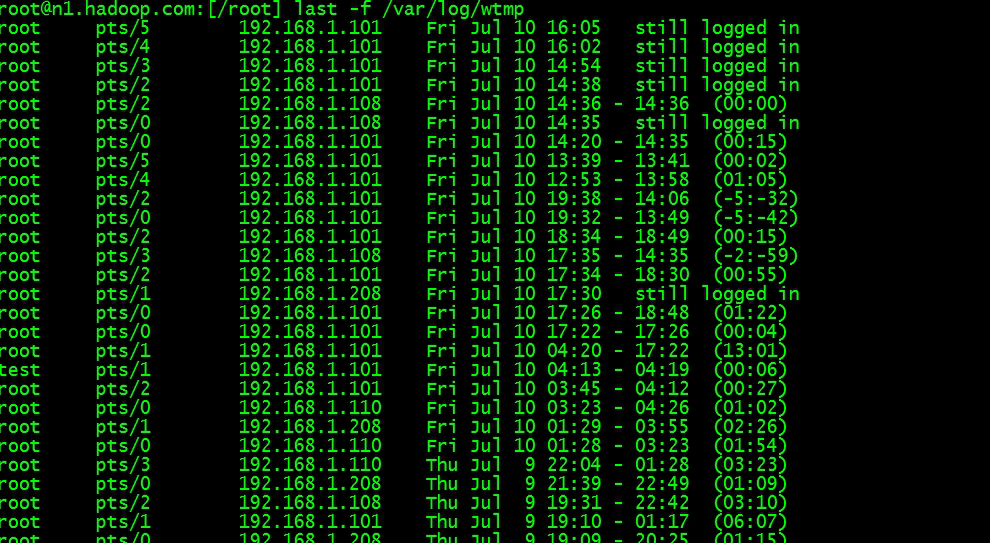

[root@localhost ~]# pvcreate /dev/sdb{1,2,3,4,5}
Device /dev/sdb1 excluded by a filter.
Physical volume "/dev/sdb2" successfully created.
Physical volume "/dev/sdb3" successfully created.
Physical volume "/dev/sdb4" successfully created.
Physical volume "/dev/sdb5" successfully created.

[root@localhost ~]# pvdisplay /dev/sdb3
WARNING: Device for PV rGAe2U-E01D-o80Z-GG2n-Q3Gt-JnxH-QpdECV not found or rejected by a filter.
"/dev/sdb3" is a new physical volume of "954.00 MiB"
--- NEW Physical volume ---
PV Name               /dev/sdb3
VG Name
PV Size               954.00 MiB
Allocatable           NO
PE Size               0
Total PE              0
Free PE               0
Allocated PE          0
PV UUID               tiph15-4Jg5-4Yf0-Rdtn-Jj0o-Vmgj-LBpfhu

VBird's explanation is very thorough; screenshot below.

[root@localhost ~]# vgcreate -s 16M lsqvg /dev/sdb{1,2,3}
WARNING: Device for PV rGAe2U-E01D-o80Z-GG2n-Q3Gt-JnxH-QpdECV not found or rejected by a filter.
WARNING: Device for PV rGAe2U-E01D-o80Z-GG2n-Q3Gt-JnxH-QpdECV not found or rejected by a filter.
Volume group "lsqvg" successfully created

[root@localhost ~]# vgscan
Reading volume groups from cache.
Found volume group "lsqvg" using metadata type lvm2
Found volume group "centos" using metadata type lvm2

[root@localhost ~]# pvscan
WARNING: Device for PV rGAe2U-E01D-o80Z-GG2n-Q3Gt-JnxH-QpdECV not found or rejected by a filter.
PV /dev/sdb1   VG lsqvg    lvm2 [944.00 MiB / 944.00 MiB free]
PV /dev/sdb2   VG lsqvg    lvm2 [944.00 MiB / 944.00 MiB free]
PV /dev/sdb3   VG lsqvg    lvm2 [944.00 MiB / 944.00 MiB free]
PV /dev/sda2   VG centos   lvm2 [19.70 GiB / 8.00 MiB free]
PV /dev/sdb4               lvm2 [954.00 MiB]
Total: 5 [23.40 GiB] / in use: 4 [<22.47 GiB] / in no VG: 1 [954.00 MiB]

[root@localhost ~]# vgdisplay lsqvg
--- Volume group ---
VG Name               lsqvg
System ID
Format                lvm2
Metadata Areas        3
Metadata Sequence No  1
VG Access             read/write
VG Status             resizable
MAX LV                0
Cur LV                0
Open LV               0
Max PV                0
Cur PV                3
Act PV                3
VG Size               <2.77 GiB
PE Size               16.00 MiB
Total PE              177
Alloc PE / Size       0 / 0
Free  PE / Size       177 / <2.77 GiB
VG UUID               yLBdez-VeIK-2xjQ-bMeT-8IBH-ENt3-Mu9NwE

[root@localhost ~]# vgextend lsqvg /dev/sdb4
WARNING: Device for PV rGAe2U-E01D-o80Z-GG2n-Q3Gt-JnxH-QpdECV not found or rejected by a filter.
Volume group "lsqvg" successfully extended

[root@localhost ~]# vgdisplay lsqvg
--- Volume group ---
VG Name               lsqvg
System ID
Format                lvm2
Metadata Areas        4
Metadata Sequence No  2
VG Access             read/write
VG Status             resizable
MAX LV                0
Cur LV                0
Open LV               0
Max PV                0
Cur PV                4
Act PV                4
VG Size               <3.69 GiB
PE Size               16.00 MiB
Total PE              236
Alloc PE / Size       0 / 0
Free  PE / Size       236 / <3.69 GiB
VG UUID               yLBdez-VeIK-2xjQ-bMeT-8IBH-ENt3-Mu9NwE

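The output above shows how a VG is created and then extended. The VG name is ours to choose, and the -s 16M option on the vgcreate line sets the size of each PE. That is about all there is to a VG. Not hard.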
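The PE counts in the vgdisplay output can be checked by hand. Here is a small sketch in plain shell arithmetic, using the 944 MiB per PV reported by pvscan and the 16 MiB PE size; the helper names `pe_count` and `pe_to_mib` are made up for this example.

```shell
#!/bin/sh
# Whole PEs that fit in a PV: pe_count <pv_size_mib> <pe_size_mib>
pe_count() { echo $(( $1 / $2 )); }

# Total size in MiB of a number of PEs: pe_to_mib <pe_total> <pe_size_mib>
pe_to_mib() { echo $(( $1 * $2 )); }

per_pv=$(pe_count 944 16)   # 944 MiB usable per PV, 16 MiB per PE
echo "PE per PV:       $per_pv"
echo "Total PE, 3 PVs: $(( per_pv * 3 ))"
echo "Total PE, 4 PVs: $(( per_pv * 4 ))"
echo "VG size, 4 PVs:  $(pe_to_mib $(( per_pv * 4 )) 16) MiB"
```

This gives 59 PE per PV, so 177 PE for three PVs and 236 PE for four, matching Total PE before and after vgextend. 236 PE is 3776 MiB, or 3.6875 GiB, which vgdisplay shows as <3.69 GiB.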

The PEs exist and so does the VG flatbread. What remains is to cut the VG up, and as the steps above suggest, that is done with LVs.

Let's follow VBird and try it.

[root@localhost ~]# lvcreate -L 2G -n lsqlv lsqvg
WARNING: LVM2_member signature detected on /dev/lsqvg/lsqlv at offset 536. Wipe it? [y/n]: y
Wiping LVM2_member signature on /dev/lsqvg/lsqlv.
Logical volume "lsqlv" created.

[root@localhost ~]# lvscan
ACTIVE '/dev/lsqvg/lsqlv' [2.00 GiB] inherit
ACTIVE '/dev/centos/var' [1.86 GiB] inherit
ACTIVE '/dev/centos/swap' [192.00 MiB] inherit
ACTIVE '/dev/centos/root' [9.31 GiB] inherit
ACTIVE '/dev/centos/home' [8.33 GiB] inherit

[root@localhost ~]# lvdisplay /dev/lsqlv
Volume group "lsqlv" not found
Cannot process volume group lsqlv

[root@localhost ~]# lvdisplay /dev/lsqvg/lsqlv
--- Logical volume ---
LV Path                /dev/lsqvg/lsqlv
LV Name                lsqlv
VG Name                lsqvg
LV UUID                I36ZBB-abG3-YZVt-h61R-qAya-ohn0-5GmH2n
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-20 11:00:50 +0800
LV Status              available
# open                 0
LV Size                2.00 GiB
Current LE             128
Segments               3
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:4

Following VBird, we created a 2G LV, named after myself.

lvscan then lists it alongside the other LVs, which are the ones the system set up at install time.

lvdisplay on our own LV shows its size, name and other details. Note that it needs the full /dev/lsqvg/lsqlv path; /dev/lsqlv fails, as shown above.

OK. Once the LV exists, the next steps are formatting and mounting. Let's keep following VBird.

[root@localhost ~]# mkdir /srv/lvm
[root@localhost ~]# mount /dev/lsqvg/lsqlv /srv/lvm
mount: /dev/mapper/lsqvg-lsqlv is write-protected, mounting read-only
mount: unknown filesystem type '(null)'
[root@localhost ~]# ^C
[root@localhost ~]# unmount /srv/lvm
bash: unmount: command not found...
[root@localhost ~]# umount /srv/lvm
umount: /srv/lvm: not mounted

[root@localhost ~]# mkfs.xfs /dev/lsqvg/lsqlv
meta-data=/dev/lsqvg/lsqlv   isize=512    agcount=4, agsize=131072 blks
         =                   sectsz=512   attr=2, projid32bit=1
         =                   crc=1        finobt=0, sparse=0
data     =                   bsize=4096   blocks=524288, imaxpct=25
         =                   sunit=0      swidth=0 blks
naming   =version 2          bsize=4096   ascii-ci=0 ftype=1
log      =internal log       bsize=4096   blocks=2560, version=2
         =                   sectsz=512   sunit=0 blks, lazy-count=1
realtime =none               extsz=4096   blocks=0, rtextents=0

[root@localhost ~]# mount /dev/lsqvg/lsqlv /dev/lvm
mount: mount point /dev/lvm does not exist
[root@localhost ~]# mount /dev/lsqvg/lsqlv /srv/lvm
[root@localhost ~]# df -Th /srv/lvm
Filesystem              Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqlv xfs   2.0G   33M  2.0G   2% /srv/lvm

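First create a mount point, /srv/lvm. (My first mount attempt failed because the LV had no filesystem on it yet.)

Then format the LV with mkfs.xfs /dev/lsqvg/lsqlv. The lines printed after the command describe the new filesystem.

Then mount it with mount and check with df -Th. The LV shows up at /srv/lvm, which confirms the mount succeeded.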

[root@localhost ~]# vgdisplay lsqvg
--- Volume group ---
VG Name               lsqvg
System ID
Format                lvm2
Metadata Areas        4
Metadata Sequence No  3
VG Access             read/write
VG Status             resizable
MAX LV                0
Cur LV                1
Open LV               0
Max PV                0
Cur PV                4
Act PV                4
VG Size               <3.69 GiB
PE Size               16.00 MiB
Total PE              236
Alloc PE / Size       128 / 2.00 GiB
Free  PE / Size       108 / <1.69 GiB    # this shows there is enough free space to enlarge the LV
VG UUID               yLBdez-VeIK-2xjQ-bMeT-8IBH-ENt3-Mu9NwE

# Add 500M to the LV
[root@localhost ~]# lvresize -L +500M /dev/lsqvg/lsqlv
Rounding size to boundary between physical extents: 512.00 MiB.
Size of logical volume lsqvg/lsqlv changed from 2.00 GiB (128 extents) to 2.50 GiB (160 extents).
Logical volume lsqvg/lsqlv successfully resized.
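The "Rounding size to boundary between physical extents" message follows from the 16 MiB PE size: an LV is always a whole number of extents, so a request for 500M is rounded up. A minimal sketch of that arithmetic; `extents_needed` is a made-up helper name, not an LVM command.

```shell
#!/bin/sh
# Extents needed to cover a request, rounded up:
# extents_needed <request_mib> <pe_size_mib>
extents_needed() { echo $(( ($1 + $2 - 1) / $2 )); }

add=$(extents_needed 500 16)
echo "extents added:  $add"
echo "size added:     $(( add * 16 )) MiB"
echo "new LV extents: $(( 128 + add ))"
```

500 MiB needs 32 extents, which is 512 MiB, and 128 + 32 gives the 160 extents that lvresize reports.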

[root@localhost ~]# lvscan
ACTIVE '/dev/lsqvg/lsqlv' [2.50 GiB] inherit    # the LV really has grown to 2.5G
ACTIVE '/dev/centos/var' [1.86 GiB] inherit
ACTIVE '/dev/centos/swap' [192.00 MiB] inherit
ACTIVE '/dev/centos/root' [9.31 GiB] inherit
ACTIVE '/dev/centos/home' [8.33 GiB] inherit

[root@localhost ~]# df -Th /srv/lvm
Filesystem              Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqlv xfs   2.0G   87M  2.0G   5% /srv/lvm    # but the mounted filesystem is still 2.0G: the LV grew, the filesystem has not yet

[root@localhost ~]# ls -l /srv/lvm
total 16
drwxr-xr-x. 161 root root 8192 Aug 20 08:17 etc
drwxr-xr-x.  23 root root 4096 Aug 20 09:25 log

[root@localhost ~]# xfs_info /srv/lvm
meta-data=/dev/mapper/lsqvg-lsqlv isize=512    agcount=4, agsize=131072 blks    # agcount=4
         =                        sectsz=512   attr=2, projid32bit=1
         =                        crc=1        finobt=0 spinodes=0
data     =                        bsize=4096   blocks=524288, imaxpct=25    # blocks=524288
         =                        sunit=0      swidth=0 blks
naming   =version 2               bsize=4096   ascii-ci=0 ftype=1
log      =internal                bsize=4096   blocks=2560, version=2
         =                        sectsz=512   sunit=0 blks, lazy-count=1
realtime =none                    extsz=4096   blocks=0, rtextents=0

[root@localhost ~]# xfs_growfs /srv/lvm    # grow the filesystem into the space just added to the LV
meta-data=/dev/mapper/lsqvg-lsqlv isize=512    agcount=4, agsize=131072 blks
         =                        sectsz=512   attr=2, projid32bit=1
         =                        crc=1        finobt=0 spinodes=0
data     =                        bsize=4096   blocks=524288, imaxpct=25
         =                        sunit=0      swidth=0 blks
naming   =version 2               bsize=4096   ascii-ci=0 ftype=1
log      =internal                bsize=4096   blocks=2560, version=2
         =                        sectsz=512   sunit=0 blks, lazy-count=1
realtime =none                    extsz=4096   blocks=0, rtextents=0
data blocks changed from 524288 to 655360

[root@localhost ~]# xfs_info /srv/lvm
meta-data=/dev/mapper/lsqvg-lsqlv isize=512    agcount=5, agsize=131072 blks    # agcount is now 5
         =                        sectsz=512   attr=2, projid32bit=1
         =                        crc=1        finobt=0 spinodes=0
data     =                        bsize=4096   blocks=655360, imaxpct=25    # blocks changed too
         =                        sunit=0      swidth=0 blks
naming   =version 2               bsize=4096   ascii-ci=0 ftype=1
log      =internal                bsize=4096   blocks=2560, version=2
         =                        sectsz=512   sunit=0 blks, lazy-count=1
realtime =none                    extsz=4096   blocks=0, rtextents=0

[root@localhost ~]# df -Th /srv/lvm
Filesystem              Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqlv xfs   2.5G   87M  2.5G   4% /srv/lvm    # the size is now 2.5G

[root@localhost ~]# ls -l /srv/lvm    # the files are still there
total 16
drwxr-xr-x. 161 root root 8192 Aug 20 08:17 etc
drwxr-xr-x.  23 root root 4096 Aug 20 09:25 log

Note: an XFS filesystem can only be grown, not shrunk. ext4, by contrast, can be both grown and shrunk.
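The block counts that xfs_info prints before and after xfs_growfs line up with the LV sizes. A small sketch of the conversion, assuming the 4096-byte block size shown in the output; `blocks_to_mib` is a made-up helper name.

```shell
#!/bin/sh
# Filesystem size in MiB from a block count and block size in bytes:
# blocks_to_mib <blocks> <block_size_bytes>
# The block size is converted to KiB first to keep the intermediate product small.
blocks_to_mib() { echo $(( $1 * ($2 / 1024) / 1024 )); }

echo "before growfs: $(blocks_to_mib 524288 4096) MiB"
echo "after growfs:  $(blocks_to_mib 655360 4096) MiB"
echo "allocation groups after: $(( 655360 / 131072 ))"
```

524288 blocks is 2048 MiB and 655360 blocks is 2560 MiB, and 655360 / 131072 gives the agcount of 5 seen in the second xfs_info.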

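LVM dynamic allocation

Put simply: first set up a capacity pool, then hand out space only as it is actually used, drawing from the pool until the pool is exhausted.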

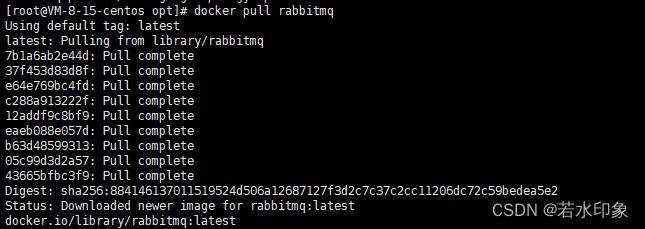

[root@localhost ~]# lvcreate -L 1G -T lsqvg/lsqpool
Thin pool volume with chunk size 64.00 KiB can address at most 15.81 TiB of data.
Logical volume "lsqpool" created.

[root@localhost ~]# lvdisplay /dev/lsqvg/lsqpool
--- Logical volume ---
LV Name                lsqpool
VG Name                lsqvg
LV UUID                QbEb2i-Eumf-cVfK-yol4-1Qlm-vlDO-leMa6e
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-21 17:01:01 +0800
LV Pool metadata       lsqpool_tmeta
LV Pool data           lsqpool_tdata
LV Status              available
# open                 0
LV Size                1.00 GiB
Allocated pool data    0.00%
Allocated metadata     10.23%
Current LE             64
Segments               1
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:7

[root@localhost ~]# lvs lsqvg
LV       VG    Attr       LSize  Pool    Origin Data%  Meta%  Move Log Cpy%Sync Convert
lsqlv    lsqvg -wi-ao----  2.50g
lsqpool  lsqvg twi-a-tz--  1.00g                0.00   10.23

[root@localhost ~]# lvcreate -V 10G -T lsqvg/lsqpool -n lsqthin1
WARNING: Sum of all thin volume sizes (10.00 GiB) exceeds the size of thin pool lsqvg/lsqpool and the size of whole volume group (<3.69 GiB).
WARNING: You have not turned on protection against thin pools running out of space.
WARNING: Set activation/thin_pool_autoextend_threshold below 100 to trigger automatic extension of thin pools before they get full.
Logical volume "lsqthin1" created.

[root@localhost ~]# lvs lsqvg
LV       VG    Attr       LSize  Pool    Origin Data%  Meta%  Move Log Cpy%Sync Convert
lsqlv    lsqvg -wi-ao----  2.50g
lsqpool  lsqvg twi-aotz--  1.00g                0.00   10.25
lsqthin1 lsqvg Vwi-a-tz-- 10.00g lsqpool        0.00

[root@localhost ~]# mkfs.xfs /dev/lsqvg/lsqthin1
meta-data=/dev/lsqvg/lsqthin1 isize=512    agcount=16, agsize=163840 blks
         =                    sectsz=512   attr=2, projid32bit=1
         =                    crc=1        finobt=0, sparse=0
data     =                    bsize=4096   blocks=2621440, imaxpct=25
         =                    sunit=16     swidth=16 blks
naming   =version 2           bsize=4096   ascii-ci=0 ftype=1
log      =internal log        bsize=4096   blocks=2560, version=2
         =                    sectsz=512   sunit=16 blks, lazy-count=1
realtime =none                extsz=4096   blocks=0, rtextents=0

[root@localhost ~]# mkdir /srv/thin
[root@localhost ~]# moutn /dev/lsqvg/lsqthin1 /srv/thin
bash: moutn: command not found...
Similar command is: 'mount'
[root@localhost ~]# mount /dev/lsqvg/lsqthin1 /srv/thin
[root@localhost ~]# df -Th /srv/thin
Filesystem                 Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqthin1 xfs    10G   33M   10G   1% /srv/thin

[root@localhost ~]# dd if=/dev/zero of=/srv/thin/test.img bs=1M count=500
500+0 records in
500+0 records out
524288000 bytes (524 MB) copied, 0.843028 s, 622 MB/s

[root@localhost ~]# lvs lsqvg
LV       VG    Attr       LSize  Pool    Origin Data%  Meta%  Move Log Cpy%Sync Convert
lsqlv    lsqvg -wi-ao----  2.50g
lsqpool  lsqvg twi-aotz--  1.00g                49.92  11.82
lsqthin1 lsqvg Vwi-aotz-- 10.00g lsqpool        4.99

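Not following? I didn't either at first, so I went back and reread VBird's introduction from the top.

lsqthin1 has a virtual size of 10G, but whether you can actually use 10G is decided by lsqpool. Here lsqpool holds only 1G, so lsqthin1 can really store only 1G, and going past 1G will damage data. When you declare the virtual size you can write whatever you like, even 100G, but only 1G is actually usable.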
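The two Data% figures in the last lvs output describe the same data seen from two sides. A rough sketch of the arithmetic; percentages are passed in hundredths of a percent so plain integer shell arithmetic is enough, and `used_mib` is a made-up helper name.

```shell
#!/bin/sh
# MiB in use, given a size in MiB and a percentage in hundredths of a percent:
# used_mib <size_mib> <percent_x100>
used_mib() { echo $(( $1 * $2 / 10000 )); }

echo "pool data in use:   $(used_mib 1024 4992) MiB"    # 49.92% of the 1G pool
echo "thin volume in use: $(used_mib 10240 499) MiB"    # 4.99% of the 10G volume
echo "overcommit factor:  $(( 10240 / 1024 ))x"         # virtual size / pool size
```

Both lines come out at roughly 510 MiB (the small difference comes from lvs rounding the percentages): the 500 MiB written by dd plus filesystem overhead. That is about half of the pool but only about 5% of the 10G virtual size, which is ten times what the pool can really hold.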

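LVM snapshots

I don't know whether the material above made sense to you. It is actually simple: the snapshot area only stores blocks that have changed since the snapshot was taken (the original contents are copied there before the LV overwrites them), while unchanged blocks stay in the area shared with the LV. You can then restore at any time by taking the saved blocks from the snapshot and putting them back. A really impressive design.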

[root@localhost ~]# vgdisplay lsqvg
--- Volume group ---
VG Name               lsqvg
System ID
Format                lvm2
Metadata Areas        4
Metadata Sequence No  9
VG Access             read/write
VG Status             resizable
MAX LV                0
Cur LV                3
Open LV               2
Max PV                0
Cur PV                4
Act PV                4
VG Size               <3.69 GiB
PE Size               16.00 MiB
Total PE              236
Alloc PE / Size       226 / 3.53 GiB
Free  PE / Size       10 / 160.00 MiB    # the key point: free PE. A snapshot can only be given space from the free PEs left in the VG; ask for more and the command fails. So before creating a snapshot, check how much free space the VG has and size the snapshot accordingly
VG UUID               yLBdez-VeIK-2xjQ-bMeT-8IBH-ENt3-Mu9NwE

[root@localhost ~]# lvcreate -s -l 10 -n lsqnap1 /dev/lsqvg/lsqlv    # the last argument is the LV to snapshot; here we create a snapshot of lsqlv named lsqnap1
WARNING: Sum of all thin volume sizes (10.00 GiB) exceeds the size of thin pools and the size of whole volume group (<3.69 GiB).
WARNING: You have not turned on protection against thin pools running out of space.
WARNING: Set activation/thin_pool_autoextend_threshold below 100 to trigger automatic extension of thin pools before they get full.
Logical volume "lsqnap1" created.

[root@localhost ~]# lvdisplay /dev/lsqvg/lsqlv    # I ran lvdisplay on the wrong target here: this is the LV, while what we wanted to see was the snapshot
--- Logical volume ---
LV Path                /dev/lsqvg/lsqlv
LV Name                lsqlv
VG Name                lsqvg
LV UUID                I36ZBB-abG3-YZVt-h61R-qAya-ohn0-5GmH2n
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-20 11:00:50 +0800
LV snapshot status     source of
                       lsqnap1 [active]
LV Status              available
# open                 1
LV Size                2.50 GiB
Current LE             160
Segments               3
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:2

[root@localhost ~]# lvdisplay /dev/lsqvg/lsqnap1    # this time we display the snapshot
--- Logical volume ---
LV Path                /dev/lsqvg/lsqnap1
LV Name                lsqnap1
VG Name                lsqvg
LV UUID                Nr0dNA-cPS1-NSww-i3BJ-Jx9l-mKz6-4znKzd
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-22 14:45:54 +0800
LV snapshot status     active destination for lsqlv
LV Status              available
# open                 0
LV Size                2.50 GiB      # size of the origin LV
Current LE             160
COW-table size         160.00 MiB    # size of the snapshot area
COW-table LE           10
Allocated to snapshot  0.01%         # portion used so far
Snapshot chunk size    4.00 KiB
Segments               1
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:12

OK, the snapshot now exists. Let's mount it and compare it with the LV. According to VBird, since the LV has not changed at all, the snapshot should be identical to it. Let's see.

[root@localhost ~]# mkdir /srv/snapshot1
[root@localhost ~]# mount -o /dev/lsqvg/lsqnap1 /srv/snapshot1    # I typed this from habit and got an error; checking the book, -o must be followed by nouuid. With a bare -o, mount takes the device path as the option string and then looks for the mount point in fstab
mount: can't find /srv/snapshot1 in /etc/fstab
[root@localhost ~]# cd /srv
[root@localhost srv]# ls
lvm  snapshot1  thin
[root@localhost srv]# mount -o /dev/lsqvg/lsqnap1 /srv/snapshot1
mount: can't find /srv/snapshot1 in /etc/fstab
[root@localhost srv]# mount -o nouuid /dev/lsqvg/lsqnap1 /srv/snapshot1    # this is the fix: nouuid. As VBird explains, XFS refuses to mount two filesystems with the same UUID, and the snapshot has the same UUID as the LV, so nouuid is needed to ignore it

[root@localhost srv]# df -Th /srv/lvm /srv/snap0shot1
df: '/srv/snap0shot1': No such file or directory
Filesystem              Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqlv xfs   2.5G   87M  2.5G   4% /srv/lvm

[root@localhost srv]# df -Th /srv/lvm /srv/snapshot1    # df shows the same figures for both: the LV and the snapshot are identical. VBird stops here, so I'll test a bit further: what happens if I change the LV?
Filesystem                Type  Size  Used Avail Use% Mounted on
/dev/mapper/lsqvg-lsqlv   xfs   2.5G   87M  2.5G   4% /srv/lvm
/dev/mapper/lsqvg-lsqnap1 xfs   2.5G   87M  2.5G   4% /srv/snapshot1

Here I added a comment to the netconfig file in the etc directory. Let's look at the result.

[root@localhost etc]# lvdisplay /dev/lsqvg/lsqnap1
--- Logical volume ---
LV Path                /dev/lsqvg/lsqnap1
LV Name                lsqnap1
VG Name                lsqvg
LV UUID                Nr0dNA-cPS1-NSww-i3BJ-Jx9l-mKz6-4znKzd
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-22 14:45:54 +0800
LV snapshot status     active destination for lsqlv
LV Status              available
# open                 1
LV Size                2.50 GiB
Current LE             160
COW-table size         160.00 MiB
COW-table LE           10
Allocated to snapshot  1.32%    # see that? 1.32% is now used, up from the 0.01% in the output pasted earlier. Not obvious enough? Let's make another change, this time creating a directory
Snapshot chunk size    4.00 KiB
Segments               1
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:12

[root@localhost lvm]# lvdisplay /dev/lsqvg/lsqnap1
--- Logical volume ---
LV Path                /dev/lsqvg/lsqnap1
LV Name                lsqnap1
VG Name                lsqvg
LV UUID                Nr0dNA-cPS1-NSww-i3BJ-Jx9l-mKz6-4znKzd
LV Write Access        read/write
LV Creation host, time localhost.localdomain, 2019-08-22 14:45:54 +0800
LV snapshot status     active destination for lsqlv
LV Status              available
# open                 1
LV Size                2.50 GiB
Current LE             160
COW-table size         160.00 MiB
COW-table LE           10
Allocated to snapshot  1.48%    # see? It is now 1.48%. One thing to note: the figure does not move right after a change, only once the change has been processed (presumably once the write has been flushed to disk), so I suspect a sudden power cut at that moment would cause trouble. On my VM it took roughly ten-odd seconds
Snapshot chunk size    4.00 KiB
Segments               1
Allocation             inherit
Read ahead sectors     auto
- currently set to     8192
Block device           253:12
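To put those percentages into actual sizes: the snapshot was created with -l 10, so its COW area is 10 extents of 16 MiB. A small sketch of the arithmetic in integer shell math, again with percentages in hundredths of a percent; `cow_kib` and `cow_used_kib` are made-up helper names.

```shell
#!/bin/sh
# COW area in KiB from extent count and PE size: cow_kib <extents> <pe_size_mib>
cow_kib() { echo $(( $1 * $2 * 1024 )); }

# KiB in use for a percentage given in hundredths of a percent:
# cow_used_kib <total_kib> <percent_x100>
cow_used_kib() { echo $(( $1 * $2 / 10000 )); }

total=$(cow_kib 10 16)
echo "COW area: $total KiB ($(( total / 1024 )) MiB)"
for pct in 1 132 148; do    # 0.01%, 1.32%, 1.48% from the lvdisplay outputs
    echo "at ${pct} hundredths of a percent: $(cow_used_kib "$total" "$pct") KiB used"
done
```

The COW area is 163840 KiB (160 MiB), and the three readings work out to about 16 KiB, 2162 KiB and 2424 KiB. Editing one file and creating one directory cost a little over 2 MiB of snapshot space in total.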

OK, that's the end of this chapter. Now for the exercise at the end; let's work through it.

Exercise

Build a RAID5 array and create an LV on it

First, use fdisk to create 4 partitions. I added a 20G disk and made 4 partitions on it. This is simple, the usual fdisk routine, so I'll skip it.

Next, create the RAID5 array:

[root@localhost ~]# mdadm --create /dev/md0 --auto=yes --level=5 --raid-devices=3 /dev/sdb{1,2,3}

[root@localhost ~]# mdadm --detail /dev/md0 | grep -i uuid    # look up the array's UUID

One problem here: I could look up the UUID, but when I went to find /etc/mdadm.conf the file did not exist, and find did not turn it up either. Frustrating. I searched online and did not get a clear answer, but it does not seem to affect use.

Then create the PV, VG and LV:

[root@localhost ~]# pvcreate /dev/md0

[root@localhost ~]# vgcreate raidvg /dev/md0

[root@localhost ~]# lvcreate -L 1.5G -n raidlv raidvg    # I made a fool of myself here: I ran lvscan before the LV even existed. Funny.

[root@localhost ~]# lvscan
ACTIVE '/dev/raidvg/raidlv' [1.50 GiB] inherit    # this is the LV we just created
ACTIVE '/dev/centos/var' [1.86 GiB] inherit
ACTIVE '/dev/centos/swap' [192.00 MiB] inherit
ACTIVE '/dev/centos/root' [9.31 GiB] inherit
ACTIVE '/dev/centos/home' [8.33 GiB] inherit

Then format it and edit fstab so it mounts at boot: look up the UUID of the filesystem on the LV, add it to fstab, create the mount directory, run mount -a, and check the result with df.

[root@localhost ~]# mkfs.xfs /dev/raidvg/raidlv

[root@localhost ~]# blkid /dev/raidvg/raidlv
/dev/raidvg/raidlv: UUID="4008bfa1-6b21-458e-ad12-49b77b6739f7" TYPE="xfs"

[root@localhost ~]# vim /etc/fstab
#
# /etc/fstab
# Created by anaconda on Mon Aug 19 10:59:08 2019
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/centos-root                     /             xfs   defaults 0 0
UUID=ba80a371-e434-431e-9508-df4b2827efad   /boot         xfs   defaults 0 0
/dev/mapper/centos-home                     /home         xfs   defaults 0 0
/dev/mapper/centos-var                      /var          xfs   defaults 0 0
/dev/mapper/centos-swap                     swap          swap  defaults 0 0
# The UUID below is the one blkid reported above; take care to copy it exactly
UUID="4008bfa1-6b21-458e-ad12-49b77b6739f7" /srv/raidlvm  xfs   defaults 0 0

[root@localhost ~]# mkdir /srv/raidlvm
[root@localhost ~]# mount -a
[root@localhost ~]# df -Th /srv/raidlvm
Filesystem                Type  Size  Used Avail Use% Mounted on
/dev/mapper/raidvg-raidlv xfs   1.5G   33M  1.5G   3% /srv/raidlvm

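OK, done.

I still don't understand whether the missing conf file matters for RAID5. I'll leave this open and look into it later.

Here is an article by Mr. Xu Bingyi (徐秉义), recommended by VBird. Read it when you have time; it is long, and hosted on Baidu Wenku:

https://wenku.baidu.com/view/3ba28e21dd36a32d7375811b.html

End of this chapter!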
