前言:

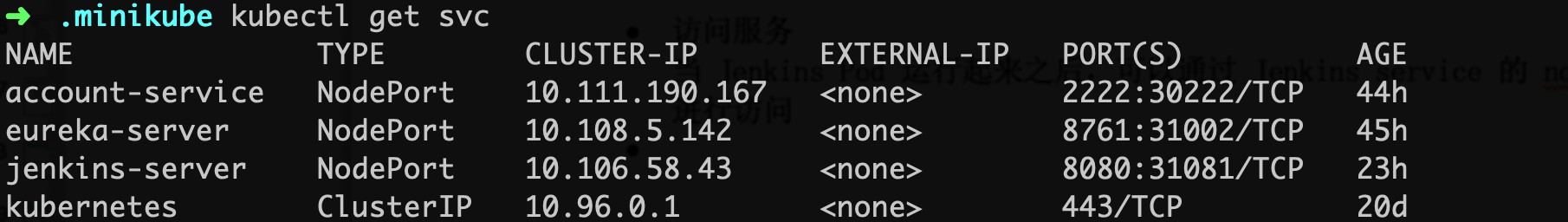

在系列的第一篇文章中,我已经介绍过如何在阿里云基于kubeasz搭建K8S集群,通过在K8S上部署gitlab并暴露至集群外来演示服务部署与发现的流程。文章写于4月,忙碌了小半年后,我才有时间把后续部分补齐。系列会分为三篇,本篇将继续部署基础设施,如jenkins、harbor、efk等,以便为第三篇项目实战做好准备。

需要说明的是,阿里云迭代的实在是太快了,2018年4月的时候,由于SLB不支持HTTP跳转HTTPS,迫不得已使用了Ingress-Nginx来做跳转控制。但在4月底的时候,SLB已经在部分地区如华北、国外节点支持HTTP跳转HTTPS。到了5月更是全节点支持。这样以来,又简化了Ingress-Nginx的配置。

一般情况下,我们搭建一个Jenkins用于持续集成,那么所有的Jobs都会在这一个Jenkins上进行build,如果Jobs数量较多,势必会引起Jenkins资源不足导致各种问题出现。于是,对于项目较多的部门、公司使用Jenkins,需要搭建Jenkins集群,也就是增加Jenkins Slave来协同工作。

但是增加Jenkins Slave又会引出新的问题,资源不能按需调度。Jobs少的时候资源闲置,而Jobs突然增多仍然会资源不足。我们希望能动态分配Jenkins Slave,即用即拿,用完即毁。这恰好符合K8S中Pod的特性。所以这里,我们在K8S中搭建一个Jenkins集群,并且是Jenkins Slave in Pod.

我们需要准备两个镜像,一个是Jenkins Master,一个是Jenkins Slave:

Jenkins Master

可根据实际需求定制Dockerfile

FROM jenkins/jenkins:latest USER root # Set jessie source RUN cecho "" > /etc/apt/sources.list.d/jessie-backports.list && echo "deb http://mirrors.aliyun.com/debian jessie main contrib non-free" > /etc/apt/sources.list && echo "deb http://mirrors.aliyun.com/debian jessie-updates main contrib non-free" >> /etc/apt/sources.list && echo "deb http://mirrors.aliyun.com/debian-security jessie/updates main contrib non-free" >> /etc/apt/sources.list # Update RUN apt-get update && apt-get install -y libltdl7 && apt-get clean # INSTALL KUBECTL RUN curl -LO https://storage.googleapis.com/kubernetes-release/release/`curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt`/bin/linux/amd64/kubectl && chmod +x ./kubectl && mv ./kubectl /usr/local/bin/kubectl # Set time zone RUN rm -rf /etc/localtime && cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime && echo "Asia/Shanghai" > /etc/timezone # Skip setup wizard、 TimeZone and CSP ENV JAVA_OPTS="-Djenkins.install.runSetupWizard=false -Duser.timezOne=Asia/Shanghai -Dhudson.model.DirectoryBrowserSupport.CSP="default-src "self"; script-src "self" "unsafe-inline" "unsafe-eval"; style-src "self" "unsafe-inline";""

Jenkins Salve

一般来说只需要安装kubelet就可以了

FROM jenkinsci/jnlp-slave USER root # INSTALL KUBECTL RUN curl -LO https://storage.googleapis.com/kubernetes-release/release/`curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt`/bin/linux/amd64/kubectl && chmod +x ./kubectl && mv ./kubectl /usr/local/bin/kubectl

生成镜像后可以push到自己的镜像仓库中备用

为了部署Jenkins、Jenkins Slave和后续的Elastic Search,建议ECS的最小内存为8G

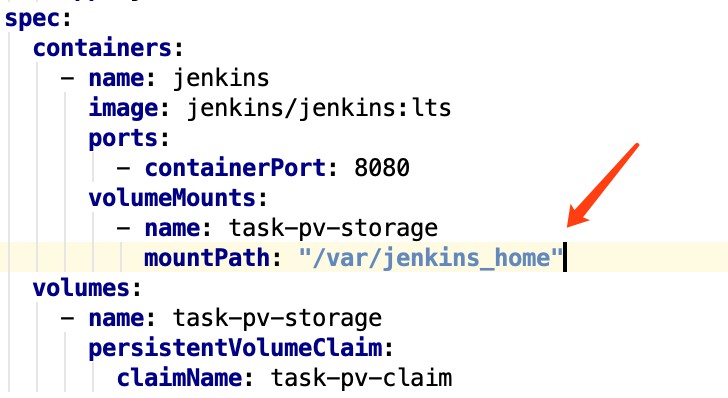

在K8S上部署Jenkins的yaml参考如下:

apiVersion: v1 kind: Namespace metadata: name: jenkins-ci --- apiVersion: v1 kind: ServiceAccount metadata: name: jenkins-ci namespace: jenkins-ci --- apiVersion: rbac.authorization.k8s.io/v1beta1 kind: ClusterRoleBinding metadata: name: jenkins-ci roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: cluster-admin subjects: - kind: ServiceAccount name: jenkins-ci namespace: jenkins-ci --- # 设置两个pv,一个用于作为workspace,一个用于存储ssh key apiVersion: v1 kind: PersistentVolume metadata: name: jenkins-home labels: release: jenkins-home namespace: jenkins-ci spec: # workspace 大小为10G capacity: storage: 10Gi accessModes: - ReadWriteMany persistentVolumeReclaimPolicy: Retain # 使用阿里云NAS,需要注意,必须先在NAS创建目录 /jenkins/jenkins-home nfs: path: /jenkins/jenkins-home server: xxxx.nas.aliyuncs.com --- apiVersion: v1 kind: PersistentVolume metadata: name: jenkins-ssh labels: release: jenkins-ssh namespace: jenkins-ci spec: # ssh key 只需要1M空间即可 capacity: storage: 1Mi accessModes: - ReadWriteMany persistentVolumeReclaimPolicy: Retain # 不要忘了在NAS创建目录 /jenkins/ssh nfs: path: /jenkins/ssh server: xxxx.nas.aliyuncs.com --- apiVersion: v1 kind: PersistentVolumeClaim metadata: name: jenkins-home-claim namespace: jenkins-ci spec: accessModes: - ReadWriteMany resources: requests: storage: 10Gi selector: matchLabels: release: jenkins-home --- apiVersion: v1 kind: PersistentVolumeClaim metadata: name: jenkins-ssh-claim namespace: jenkins-ci spec: accessModes: - ReadWriteMany resources: requests: storage: 1Mi selector: matchLabels: release: jenkins-ssh --- apiVersion: extensions/v1beta1 kind: Deployment metadata: name: jenkins namespace: jenkins-ci spec: replicas: 1 template: metadata: labels: name: jenkins spec: serviceAccount: jenkins-ci containers: - name: jenkins imagePullPolicy: Always # 使用1.1小结创建的 Jenkins Master 镜像 image: xx.xx.xx/jenkins:1.0.0 # 资源管理,详见第二章 resources: limits: cpu: 1 memory: 2Gi requests: cpu: 0.5 memory: 1Gi # 开放8080端口用于访问,开放50000端口用于Jenkins Slave和Master的通讯 ports: - containerPort: 8080 - containerPort: 50000 readinessProbe: tcpSocket: port: 8080 initialDelaySeconds: 40 periodSeconds: 20 securityContext: privileged: true volumeMounts: # 映射K8S Node的docker,也就是docker outside docker,这样就不需要在Jenkins里面安装docker - mountPath: /var/run/docker.sock name: docker-sock - mountPath: /usr/bin/docker name: docker-bin - mountPath: /var/jenkins_home name: jenkins-home - mountPath: /root/.ssh name: jenkins-ssh volumes: - name: docker-sock hostPath: path: /var/run/docker.sock - name: docker-bin hostPath: path: /opt/kube/bin/docker - name: jenkins-home persistentVolumeClaim: claimName: jenkins-home-claim - name: jenkins-ssh persistentVolumeClaim: claimName: jenkins-ssh-claim --- kind: Service apiVersion: v1 metadata: name: jenkins-service namespace: jenkins-ci spec: type: NodePort selector: name: jenkins # 将Jenkins Master的50000端口作为NodePort映射到K8S的30001端口 ports: - name: jenkins-agent port: 50000 targetPort: 50000 nodePort: 30001 - name: jenkins port: 8080 targetPort: 8080 --- apiVersion: extensions/v1beta1 kind: Ingress metadata: name: jenkins-ingress namespace: jenkins-ci annotations: nginx.ingress.kubernetes.io/proxy-body-size: "0" spec: rules: # 设置Ingress-Nginx域名和端口 - host: xxx.xxx.com http: paths: - path: / backend: serviceName: jenkins-service servicePort: 8080

最后附一下SLB的配置

这样就可以通过域名xxx.xxx.com访问Jenkins,并且可以通过xxx.xxx.com:50000来链接集群外的Slave。当然,集群内的Slave直接通过serviceName-namespace:50000访问就可以了

以管理员进入Jenkins,安装”Kubernetes”插件,然后进入系统设置界面,”Add a new cloud” – “Kubernetes”,配置如下:

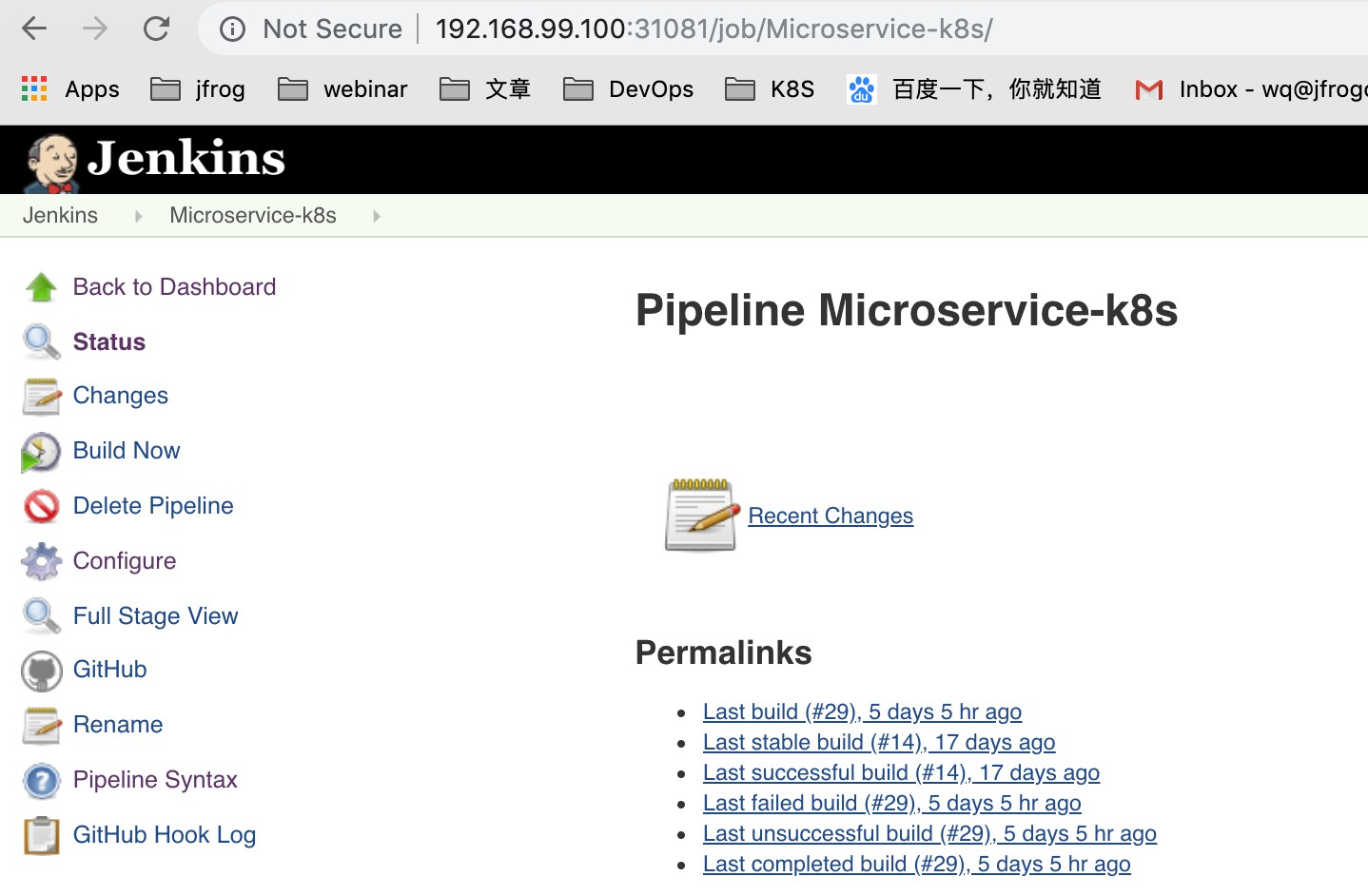

我们可以写一个FreeStyle Project的测试Job:

测试运行:

可以看到名为”jnlp-agent-xxxxx”的Jenkins Salve被创建,Job build完成后又消失,即为正确完成配置。

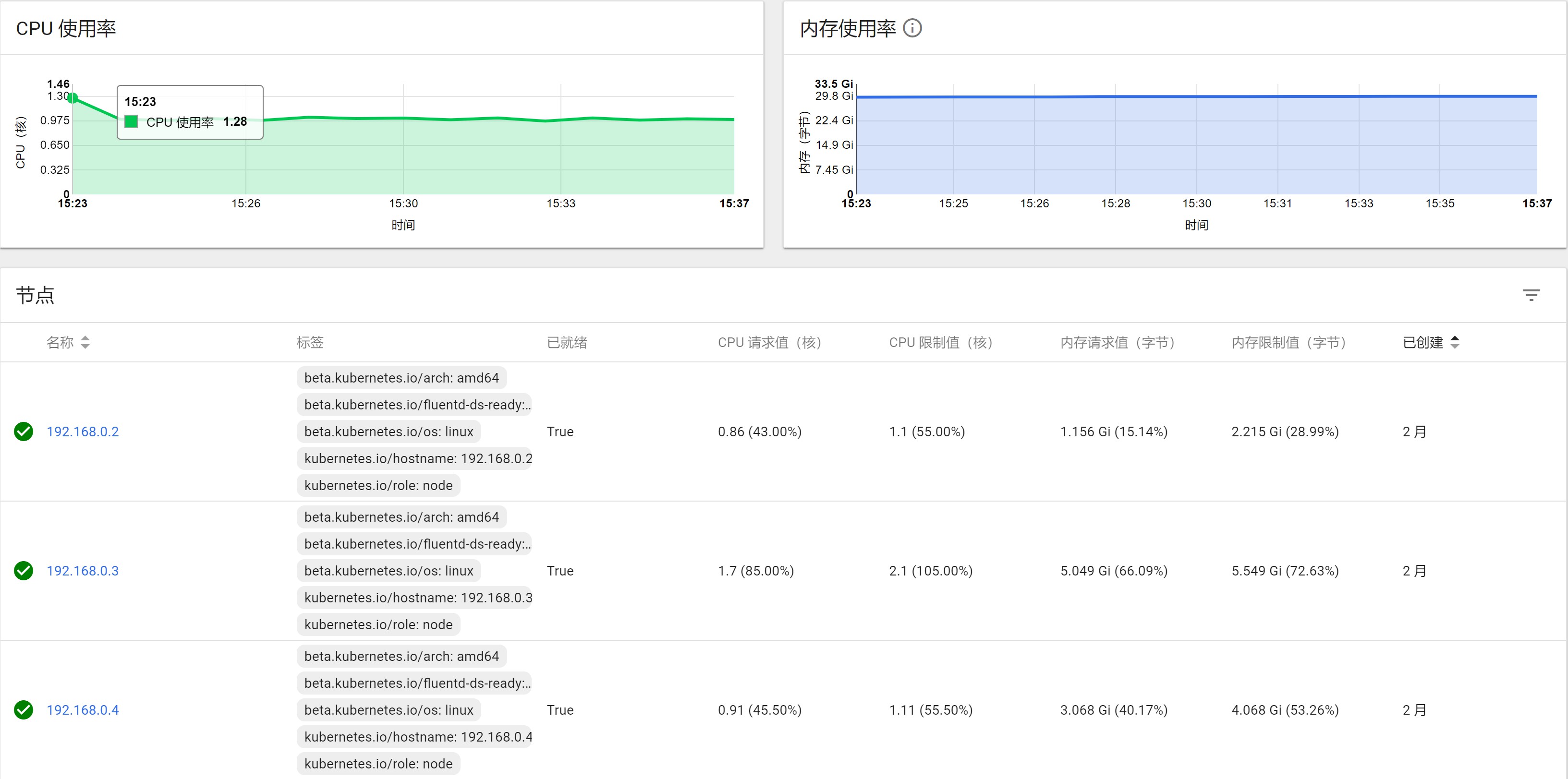

在第一章中,先后提到两次资源管理,一次是Jenkins Master的yaml,一次是Kubernetes Pod Template给Jenkins Slave 配置。Resource的控制是K8S的基础配置之一。但一般来说,用到最多的就是以下四个:

比如在我这个项目中,Gitlab至少需要配置Request Memory为3G,对于Elastic Search的Request Memory也至少为2.5 G.

其他服务需要根据K8S Dashboard中的监控插件结合长时间运行后给出一个合理的Resource控制范围。

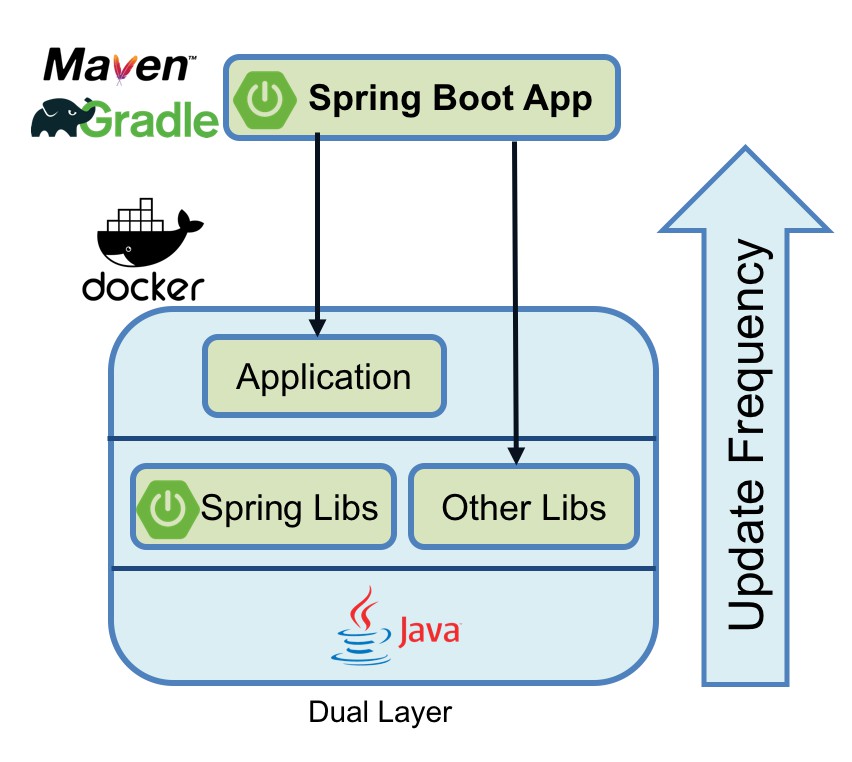

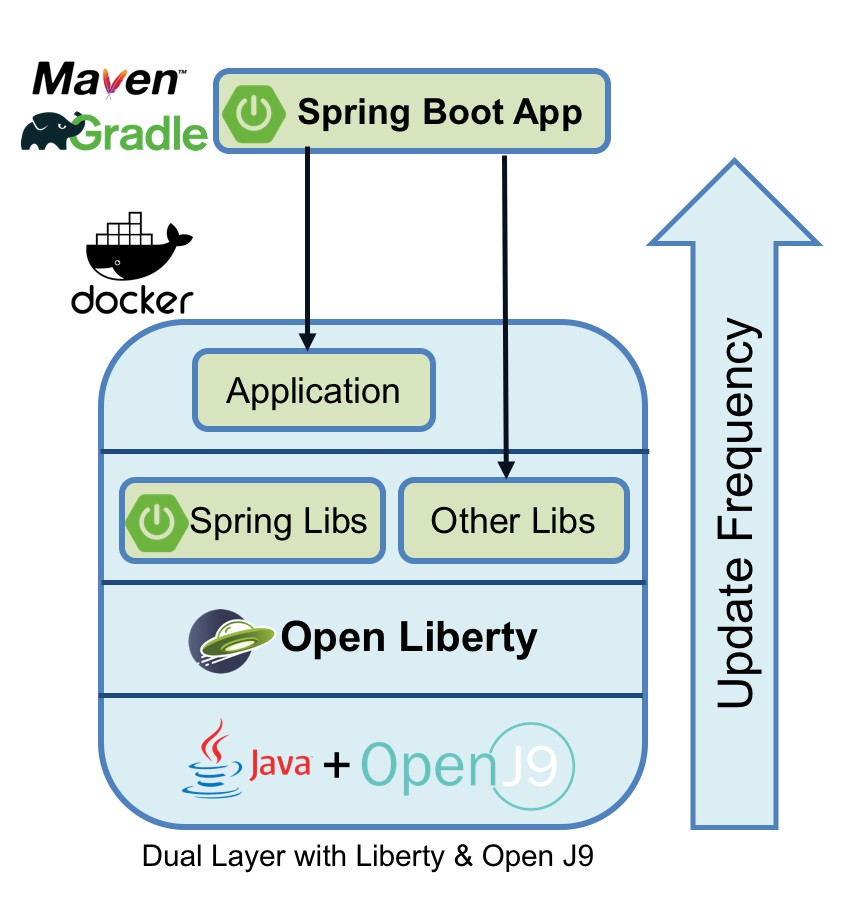

在K8S中跑CI,大致流程是Jenkins将Gitlab代码打包成Image,Push到Docker Registry中,随后Jenkins通过yaml文件部署应用,Pod的Image从Docker Registry中Pull.也就是说到目前为止,我们还缺一个Docker Registry才能准备好所有CI需要的基础软件。

利用阿里云的镜像仓库或者Docker HUB可以节省硬件成本,但考虑数据安全、传输效率和操作易用性,还是希望自建一个Docker Registry. 可选的方案并不多,官方提供的Docker Registry v2轻量简洁,vmware的Harbor功能更丰富。

Harbor提供了一个界面友好的UI,支持镜像同步,这对于DevOps尤为重要。Harbor官方提供了Helm方式在K8S中部署。但我考虑Harbor占用的资源较多,从节省硬件成本来说,把Harbor放到了K8S Master上(Master节点不会被调度用于部署Pod,所以大部分空间资源没有被利用)。当然这不是一个最好的方案,但它是最适合我们目前业务场景的方案。

在Master节点使用docker compose部署Harbor的步骤如下:

pip install docker-compose

mkdir /data mount -t nfs -o vers=4.0 xxx.xxx.com:/harbor /data

# 域名 hostname = xx.xx.com # 协议,这里可以使用http可以免去配置ssl_cert,通过SLB暴露至集群外再加上ssh即可 ui_url_protocol = http # 邮箱配置 email_identity = rfc2595 email_server = xx email_server_port = xx email_username = xx email_password = xx email_from = xx email_ssl = xx email_insecure = xx # admin账号默认密码 harbor_admin_password = xx

proxy: image: vmware/nginx-photon:v1.5.0 container_name: nginx restart: always volumes: - ./common/config/nginx:/etc/nginx:z networks: - harbor ports: - 23280:80 #- 443:443 #- 4443:4443 depends_on: - mysql - registry - ui - log logging: driver: "syslog" options: syslog-address: "tcp://127.0.0.1:1514" tag: "proxy"

{ "registry-mirrors": ["https://kuamavit.mirror.aliyuncs.com", "https://registry.docker-cn.com", "https://docker.mirrors.ustc.edu.cn"], "insecure-registries": ["192.168.0.1:23280"], "max-concurrent-downloads": 10, "log-driver": "json-file", "log-level": "warn", "log-opts": { "max-size": "10m", "max-file": "3" } }

重新安装kubeasz的docker

ansible-playbook 03.docker.yml

这样在集群内的任何一个节点就可以通过http协议192.168.0.1:23280 访问harbor

vi /etc/rc.local # 加入如下内容 # mount -t nfs -o vers=4.0 xxxx.com:/harbor /data # cd /etc/ansible/heygears/harbor # sudo docker-compose up -d chmod +x /etc/rc.local

kubectl create secret docker-registry regcred --docker-server=192.168.0.1:23280 --docker-username=xxx --docker-password=xxx --docker-email=xxx

docker login 192.168.0.1:23280验证Harbor是否创建成功最后我们来给集群加上日志系统。

项目中常用的日志系统多数是Elastic家族的ELK,外加Redis或者Kafka作为缓冲队列。由于Logstash需要运行在java环境下,且占用空间大,配置相对复杂,随着Elastic家族的产品逐渐丰富,Logstash开始慢慢偏向日志解析、过滤、格式化等方面,所以并不太适合在容器环境下的日志收集。K8S官方给出的方案是EFK,其中F指的是Fluentd,一个用Ruby写的轻量级日志收集工具。对比Logstash来说,支持的插件少一些。

容器日志的收集方式不外乎以下四种:

docker默认的driver是json-driver,容器输出到控制台的日志,都会以 *-json.log 的命名方式保存在 /var/lib/docker/containers/ 目录下。所以EFK的日志策略就是在每个Node部署一个Fluentd,读取/var/lib/docker/containers/ 目录下的所有日志,传输到ES中。这样做有两个弊端,一方面不是所有的服务都会把log输出到控制台;另一方面不是所有的容器都需要收集日志。我们更想定制化的去实现一个轻量级的日志收集。所以综合各个方案,还是采取了网上推荐的以FileBeat作为日志收集的“EFK”架构方案。

FileBeat用Golang编写,输出为二进制文件,不存在依赖。占用空间极小,吞吐率高。但它的功能相对单一,仅仅用来做日志收集。所以对于有需要的业务场景,可以用FileBeat收集日志,Logstash格式解析,ES存储,Kibana展示。

使用FileBeat收集容器日志的业务逻辑如下:

也就是说我们利用K8S的Pod的临时目录{}来实现Container的数据共享,举个例子:

apiVersion: extensions/v1beta1 kind: Deployment metadata: name: test labels: app: test spec: replicas: 2 strategy: type: Recreate template: metadata: labels: app: test spec: containers: - image: #appImage name: app volumeMounts: - name: log-volume mountPath: /var/log/app/ #app log path - image: #filebeatImage name: filebeat args: [ "-c", "/etc/filebeat.yml" ] securityContext: runAsUser: 0 volumeMounts: - name: config mountPath: /etc/filebeat.yml readOnly: true subPath: filebeat.yml - name: log-volume mountPath: /var/log/container/ volumes: - name: config configMap: defaultMode: 0600 name: filebeat-config - name: log-volume emptyDir: {} #利用{}实现数据交互 imagePullSecrets: - name: regcred --- apiVersion: v1 kind: ConfigMap metadata: name: filebeat-config namespace: test labels: app: filebeat data: filebeat.yml: |- filebeat.inputs: - type: log enabled: true paths: - /var/log/container/*.log #FileBeat读取log的源 output.elasticsearch: hosts: ["xx.xx.xx:9200"] tags: ["test"] #log tag

实现这种FileBeat作为日志收集的“EFK”系统,只需要在K8S集群中搭建好ES和Kibana即可,FileBeat是随着应用一起创建,无需提前部署。搭建ES和Kibana的方式可参考K8S官方文档,我也进行了一个简单整合:

ES:

# RBAC authn and authz apiVersion: v1 kind: ServiceAccount metadata: name: elasticsearch-logging namespace: kube-system labels: k8s-app: elasticsearch-logging kubernetes.io/cluster-service: "true" addonmanager.kubernetes.io/mode: Reconcile --- kind: ClusterRole apiVersion: rbac.authorization.k8s.io/v1 metadata: name: elasticsearch-logging labels: k8s-app: elasticsearch-logging kubernetes.io/cluster-service: "true" addonmanager.kubernetes.io/mode: Reconcile rules: - apiGroups: - "" resources: - "services" - "namespaces" - "endpoints" verbs: - "get" --- kind: ClusterRoleBinding apiVersion: rbac.authorization.k8s.io/v1 metadata: namespace: kube-system name: elasticsearch-logging labels: k8s-app: elasticsearch-logging kubernetes.io/cluster-service: "true" addonmanager.kubernetes.io/mode: Reconcile subjects: - kind: ServiceAccount name: elasticsearch-logging namespace: kube-system apiGroup: "" roleRef: kind: ClusterRole name: elasticsearch-logging apiGroup: "" --- apiVersion: v1 kind: PersistentVolume metadata: name: es-pv-0 labels: release: es-pv namespace: kube-system spec: capacity: storage: 20Gi accessModes: - ReadWriteMany volumeMode: Filesystem persistentVolumeReclaimPolicy: Recycle storageClassName: "es-storage-class" nfs var cpro_id = "u6885494";

京公网安备 11010802041100号 | 京ICP备19059560号-4 | PHP1.CN 第一PHP社区 版权所有

京公网安备 11010802041100号 | 京ICP备19059560号-4 | PHP1.CN 第一PHP社区 版权所有