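Please read the previous post first: [大数据]-Elasticsearch5.3.1 IK分词,同义词/联想搜索设置 (Elasticsearch 5.3.1: IK analyzer and synonym/associative search settings). That post covered installing and configuring ES and Kibana 5.3.1, installing the IK analyzer, and setting up synonyms. This post records how to import MySQL data into Elasticsearch 5.3.1 with Logstash while enabling the IK analyzer and synonyms. Once the JDBC and ES connections are configured, running Logstash creates the index and mapping and imports the data in a single step. To use the IK analyzer, however, the configuration that creates the index and mapping has to be changed, as described in detail below.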

Sending Logstash's logs to /home/rzxes/logstash-5.3.1/logs which is now configured via log4j2.properties

[2017-05-16T10:27:36,957][INFO ][logstash.setting.writabledirectory] Creating directory {:setting=>"path.queue", :path=>"/home/rzxes/logstash-5.3.1/data/queue"}

[2017-05-16T10:27:37,041][INFO ][logstash.agent ] No persistent UUID file found. Generating new UUID {:uuid=>"c987803c-9b18-4395-bbee-a83a90e6ea60", :path=>"/home/rzxes/logstash-5.3.1/data/uuid"}

[2017-05-16T10:27:37,581][INFO ][logstash.pipeline ] Starting pipeline {"id"=>"main", "pipeline.workers"=>1, "pipeline.batch.size"=>125, "pipeline.batch.delay"=>5, "pipeline.max_inflight"=>125}

[2017-05-16T10:27:37,682][INFO ][logstash.pipeline ] Pipeline main started

The stdin plugin is now waiting for input:

[2017-05-16T10:27:37,886][INFO ][logstash.agent ] Successfully started Logstash API endpoint {:port=>9600}

    input {
        stdin {}
        jdbc {
            # database address, port, and database name
            jdbc_connection_string => "jdbc:mysql://IP:3306/dbname"
            # database user name
            jdbc_user => "user"
            # database password
            jdbc_password => "pass"
            # path to the MySQL Java driver
            jdbc_driver_library => "/home/rzxes/logstash-5.3.1/mysql-connector-java-5.1.17.jar"
            jdbc_driver_class => "com.mysql.jdbc.Driver"
            jdbc_paging_enabled => "true"
            jdbc_page_size => "100000"
            # SQL statement file; the SQL can also be written inline,
            # e.g. statement => "select * from table1"
            statement_filepath => "/home/rzxes/logstash-5.3.1/test.sql"
            schedule => "* * * * *"
            type => "jdbc"
        }
    }

    output {
        stdout {
            codec => json_lines
        }
        elasticsearch {
            hosts => "192.168.230.150:9200"
            index => "test-1"        # index name
            document_type => "form"  # type name
            document_id => "%{id}"   # id must be the sequence (ID) column of the queried table
        }
    }

As shown below, 11597 records have been imported.

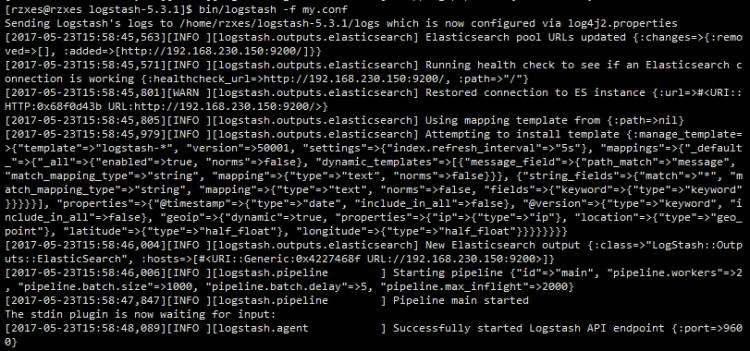

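Importing data into ES requires an index and a mapping to exist first, yet the Logstash configuration never creates them explicitly; it only passes parameters such as index and type. Logstash creates them through an ES mapping template. This template does not need to list any fields, because the mappings are generated automatically from the input. When Logstash starts, it prints the template in its log: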

[2017-05-23T15:58:45,801][WARN ][logstash.outputs.elasticsearch] Restored connection to ES instance {:url=>#<URI::HTTP:0x68f0d43b URL:http://192.168.230.150:9200/>}

[2017-05-23T15:58:45,805][INFO ][logstash.outputs.elasticsearch] Using mapping template from {:path=>nil}

[2017-05-23T15:58:45,979][INFO ][logstash.outputs.elasticsearch] Attempting to install template {:manage_template=>{"template"=>"logstash-*", "version"=>50001, "settings"=>{"index.refresh_interval"=>"5s"}, "mappings"=>{"_default_"=>{"_all"=>{"enabled"=>true, "norms"=>false}, "dynamic_templates"=>[{"message_field"=>{"path_match"=>"message", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false}}}, {"string_fields"=>{"match"=>"*", "match_mapping_type"=>"string", "mapping"=>{"type"=>"text", "norms"=>false, "fields"=>{"keyword"=>{"type"=>"keyword"}}}}}], "properties"=>{"@timestamp"=>{"type"=>"date", "include_in_all"=>false}, "@version"=>{"type"=>"keyword", "include_in_all"=>false}, "geoip"=>{"dynamic"=>true, "properties"=>{"ip"=>{"type"=>"ip"}, "location"=>{"type"=>"geo_point"}, "latitude"=>{"type"=>"half_float"}, "longitude"=>{"type"=>"half_float"}}}}}}}}

In the template below, only one line needs to be added to set the analyzer to ik_max_word: the "analyzer": "ik_max_word" entry in the string_fields mapping.

    {
      "template": "*",
      "version": 50001,
      "settings": {
        "index.refresh_interval": "5s"
      },
      "mappings": {
        "_default_": {
          "_all": {"enabled": true, "norms": false},
          "dynamic_templates": [
            {
              "message_field": {
                "path_match": "message",
                "match_mapping_type": "string",
                "mapping": {"type": "text", "norms": false}
              }
            },
            {
              "string_fields": {
                "match": "*",
                "match_mapping_type": "string",
                "mapping": {
                  "type": "text",
                  "norms": false,
                  "analyzer": "ik_max_word",
                  "fields": {"keyword": {"type": "keyword"}}
                }
              }
            }
          ],
          "properties": {
            "@timestamp": {"type": "date", "include_in_all": false},
            "@version": {"type": "keyword", "include_in_all": false}
          }
        }
      }
    }
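The one-line change can also be applied programmatically. The following is a minimal Python sketch, not part of the original workflow: it starts from a trimmed copy of the default template printed in the Logstash log and adds the analyzer to the string_fields dynamic template. The function name `with_analyzer` and its parameters are hypothetical, chosen only for illustration.

```python
import copy
import json

# Trimmed copy of the default template from the Logstash log (geoip omitted).
DEFAULT_TEMPLATE = {
    "template": "logstash-*",
    "version": 50001,
    "settings": {"index.refresh_interval": "5s"},
    "mappings": {
        "_default_": {
            "_all": {"enabled": True, "norms": False},
            "dynamic_templates": [
                {"message_field": {
                    "path_match": "message",
                    "match_mapping_type": "string",
                    "mapping": {"type": "text", "norms": False},
                }},
                {"string_fields": {
                    "match": "*",
                    "match_mapping_type": "string",
                    "mapping": {
                        "type": "text",
                        "norms": False,
                        "fields": {"keyword": {"type": "keyword"}},
                    },
                }},
            ],
        }
    },
}


def with_analyzer(template, analyzer="ik_max_word", pattern="*"):
    """Return a copy of the template whose string_fields mapping uses `analyzer`.

    `pattern` replaces the index pattern, as the article's template does
    ("logstash-*" becomes "*").
    """
    result = copy.deepcopy(template)  # leave the input untouched
    result["template"] = pattern
    for mapping_type in result["mappings"].values():
        for entry in mapping_type.get("dynamic_templates", []):
            if "string_fields" in entry:
                entry["string_fields"]["mapping"]["analyzer"] = analyzer
    return result


def to_json(template):
    """Serialize the template as the JSON text to save in the template file."""
    return json.dumps(template, indent=2)
```

Calling `to_json(with_analyzer(DEFAULT_TEMPLATE))` yields JSON that could be saved as the template file; comments are not valid in JSON, so any notes have to live outside the file.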

To configure synonyms, define a custom analyzer and configure a synonym filter.

In the template below, the index section under settings holds the analysis and synonym configuration (custom analyzers and filters); it is only needed when synonyms are configured. synonyms_path is the path to the synonym file. In string_fields, choose the analyzer: either one of the custom analyzers or ik_max_word.

    {
      "template": "*",
      "version": 50001,
      "settings": {
        "index.refresh_interval": "5s",
        "index": {
          "analysis": {
            "analyzer": {
              "by_smart": {
                "type": "custom",
                "tokenizer": "ik_smart",
                "filter": ["by_tfr", "by_sfr"],
                "char_filter": ["by_cfr"]
              },
              "by_max_word": {
                "type": "custom",
                "tokenizer": "ik_max_word",
                "filter": ["by_tfr", "by_sfr"],
                "char_filter": ["by_cfr"]
              }
            },
            "filter": {
              "by_tfr": {"type": "stop", "stopwords": [" "]},
              "by_sfr": {"type": "synonym", "synonyms_path": "analysis/synonyms.txt"}
            },
            "char_filter": {
              "by_cfr": {"type": "mapping", "mappings": ["| => |"]}
            }
          }
        }
      },
      "mappings": {
        "_default_": {
          "_all": {"enabled": true, "norms": false},
          "dynamic_templates": [
            {
              "message_field": {
                "path_match": "message",
                "match_mapping_type": "string",
                "mapping": {"type": "text", "norms": false}
              }
            },
            {
              "string_fields": {
                "match": "*",
                "match_mapping_type": "string",
                "mapping": {
                  "type": "text",
                  "norms": false,
                  "analyzer": "by_max_word",
                  "fields": {"keyword": {"type": "keyword"}}
                }
              }
            }
          ],
          "properties": {
            "@timestamp": {"type": "date", "include_in_all": false},
            "@version": {"type": "keyword", "include_in_all": false}
          }
        }
      }
    }
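A custom analyzer refers to its filters and char filters by name, so a misspelled name in the template is an easy mistake to make. As a rough sanity check before loading the template, the sketch below verifies that every name an analyzer references is defined in the same analysis block. It is an illustration only: the helper `undefined_references` is hypothetical, and names of built-in filters (for example lowercase) would have to be passed in through the `builtin` argument, since the check has no knowledge of what Elasticsearch ships with.

```python
# Analysis settings copied from the synonym template in this article.
ANALYSIS = {
    "analyzer": {
        "by_smart": {
            "type": "custom",
            "tokenizer": "ik_smart",
            "filter": ["by_tfr", "by_sfr"],
            "char_filter": ["by_cfr"],
        },
        "by_max_word": {
            "type": "custom",
            "tokenizer": "ik_max_word",
            "filter": ["by_tfr", "by_sfr"],
            "char_filter": ["by_cfr"],
        },
    },
    "filter": {
        "by_tfr": {"type": "stop", "stopwords": [" "]},
        "by_sfr": {"type": "synonym", "synonyms_path": "analysis/synonyms.txt"},
    },
    "char_filter": {
        "by_cfr": {"type": "mapping", "mappings": ["| => |"]},
    },
}


def undefined_references(analysis, builtin=frozenset()):
    """List (analyzer, section, name) triples for names that are not defined.

    Only the "filter" and "char_filter" references of each analyzer are
    checked; tokenizers such as ik_smart come from plugins and are skipped.
    """
    missing = []
    for analyzer_name, analyzer in analysis.get("analyzer", {}).items():
        for section in ("filter", "char_filter"):
            defined = analysis.get(section, {})
            for ref in analyzer.get(section, []):
                if ref not in defined and ref not in builtin:
                    missing.append((analyzer_name, section, ref))
    return missing
```

For the settings used here, `undefined_references(ANALYSIS)` returns an empty list; removing the by_sfr definition would make both analyzers show up in the result.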

    input {
        stdin {}
        jdbc {
            # database address, port, and database name
            jdbc_connection_string => "jdbc:mysql://IP:3306/dbname"
            # database user name
            jdbc_user => "user"
            # database password
            jdbc_password => "pass"
            # path to the MySQL Java driver
            jdbc_driver_library => "/home/rzxes/logstash-5.3.1/mysql-connector-java-5.1.17.jar"
            jdbc_driver_class => "com.mysql.jdbc.Driver"
            jdbc_paging_enabled => "true"
            jdbc_page_size => "100000"
            # SQL statement file
            statement_filepath => "/home/rzxes/logstash-5.3.1/mytest.sql"
            schedule => "* * * * *"
            type => "jdbc"
        }
    }
    output {
        stdout {
            codec => json_lines
        }
        elasticsearch {
            hosts => "192.168.230.150:9200"
            index => "test-1"
            document_type => "form"
            document_id => "%{id}"  # id must be the sequence (ID) column of the queried table
            # use the custom template file and overwrite any template already installed
            template_overwrite => true
            template => "/home/rzxes/logstash-5.3.1/template/logstash.json"
        }
    }

    curl -XPUT "http://192.168.230.150:9200/_template/rtf" -H 'Content-Type: application/json' -d'
    {
      "template": "*",
      "version": 50001,
      "settings": {
        "index.refresh_interval": "5s",
        "index": {
          "analysis": {
            "analyzer": {
              "by_smart": {
                "type": "custom",
                "tokenizer": "ik_smart",
                "filter": ["by_tfr", "by_sfr"],
                "char_filter": ["by_cfr"]
              },
              "by_max_word": {
                "type": "custom",
                "tokenizer": "ik_max_word",
                "filter": ["by_tfr", "by_sfr"],
                "char_filter": ["by_cfr"]
              }
            },
            "filter": {
              "by_tfr": {"type": "stop", "stopwords": [" "]},
              "by_sfr": {"type": "synonym", "synonyms_path": "analysis/synonyms.txt"}
            },
            "char_filter": {
              "by_cfr": {"type": "mapping", "mappings": ["| => |"]}
            }
          }
        }
      },
      "mappings": {
        "_default_": {
          "_all": {"enabled": true, "norms": false},
          "dynamic_templates": [
            {
              "message_field": {
                "path_match": "message",
                "match_mapping_type": "string",
                "mapping": {"type": "text", "norms": false}
              }
            },
            {
              "string_fields": {
                "match": "*",
                "match_mapping_type": "string",
                "mapping": {
                  "type": "text",
                  "norms": false,
                  "analyzer": "by_max_word",
                  "fields": {"keyword": {"type": "keyword"}}
                }
              }
            }
          ],
          "properties": {
            "@timestamp": {"type": "date", "include_in_all": false},
            "@version": {"type": "keyword", "include_in_all": false}
          }
        }
      }
    }'
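The same PUT request can be issued from a script, and the analyzer can then be checked with the `_analyze` API. The sketch below uses only the Python standard library and is an illustration under stated assumptions: the helper names are hypothetical, the host is the one used throughout this article, and the index name test-1 and analyzer name by_max_word come from the configuration above. The requests are built separately from the sending step so they can be inspected without a running cluster.

```python
import json
import urllib.request

ES_URL = "http://192.168.230.150:9200"  # ES host used in this article


def template_request(name, template, base_url=ES_URL):
    """Build a PUT request that registers `template` under _template/<name>."""
    return urllib.request.Request(
        f"{base_url}/_template/{name}",
        data=json.dumps(template).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


def analyze_request(index, analyzer, text, base_url=ES_URL):
    """Build a POST request asking the index to analyze `text` with `analyzer`."""
    body = {"analyzer": analyzer, "text": text}
    return urllib.request.Request(
        f"{base_url}/{index}/_analyze",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request):
    """Send a prepared request and decode the JSON response (needs a live ES)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

For example, `send(analyze_request("test-1", "by_max_word", "some text"))` would return the tokens produced by the custom analyzer. Index templates only apply to indices created after the template is installed, so an existing test-1 index would have to be deleted and re-imported before the new analyzer takes effect.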

Beijing Public Network Security Filing No. 11010802041100 | Beijing ICP Filing No. 19059560-4 | PHP1.CN, 第一PHP社区 (The First PHP Community). All rights reserved.
