DMA lets a device access memory directly, but it also introduces a number of problems.

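An IOMMU plays a role similar to the CPU's MMU: it gives a device its own virtual address space. The device issues access requests in this virtual bus address space, and the IOMMU translates them into physical addresses, so the device reaches physical memory only indirectly.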
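
The translation step described above can be illustrated with a toy, single-level table in user-space C. This is only a sketch under simplifying assumptions: real IOMMUs use multi-level page tables and per-device context entries, and every name here (`toy_iommu_table`, `toy_iommu_map`, `toy_iommu_translate`, `TOY_IOVA_FAULT`) is hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define TOY_PAGE_SHIFT 12
#define TOY_PAGE_SIZE  ((uint64_t)1 << TOY_PAGE_SHIFT)
#define TOY_NR_PAGES   16          /* size of the toy I/O virtual space, in pages */
#define TOY_IOVA_FAULT UINT64_MAX  /* hypothetical marker for "no mapping" */

/* One toy translation table per device. */
struct toy_iommu_table {
    uint64_t phys_page[TOY_NR_PAGES]; /* physical page base per I/O virtual page */
    uint8_t  present[TOY_NR_PAGES];   /* 1 if the page is mapped */
};

/* Map one page-aligned I/O virtual address to a page-aligned physical address. */
int toy_iommu_map(struct toy_iommu_table *t, uint64_t iova, uint64_t phys)
{
    uint64_t idx = iova >> TOY_PAGE_SHIFT;

    assert(t != NULL);
    if (idx >= TOY_NR_PAGES ||
        (iova & (TOY_PAGE_SIZE - 1)) || (phys & (TOY_PAGE_SIZE - 1)))
        return -1;

    t->phys_page[idx] = phys;
    t->present[idx] = 1;
    return 0;
}

/* Translate an address issued by the device; unmapped accesses are rejected. */
uint64_t toy_iommu_translate(const struct toy_iommu_table *t, uint64_t iova)
{
    uint64_t idx = iova >> TOY_PAGE_SHIFT;

    assert(t != NULL);
    if (idx >= TOY_NR_PAGES || !t->present[idx])
        return TOY_IOVA_FAULT;

    /* Keep the offset within the page, swap the page base. */
    return t->phys_page[idx] | (iova & (TOY_PAGE_SIZE - 1));
}
```

The point of the sketch is that the device only ever sees I/O virtual addresses; an access to a page with no mapping never reaches physical memory.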

Taking x86 as an example, arch/x86/include/asm/iommu_table.h defines a structure that holds the set of initialization functions for each type of IOMMU:

    struct iommu_table_entry {
        /* IOMMU detection routine: a positive return value means this IOMMU type is enabled, 0 means it is not. */
        initcall_t detect;
        /* detect routine of another IOMMU type; used to order the initialization of multiple IOMMUs. */
        initcall_t depend;
        void (*early_init)(void); /* No memory allocate available. */
        void (*late_init)(void); /* Yes, can allocate memory. */
    /* If IOMMU_FINISH_IF_DETECTED is set in flags, no further IOMMUs are scanned once detect succeeds. */
    #define IOMMU_FINISH_IF_DETECTED (1<<0)
    /* IOMMU_DETECTED is set in flags once this IOMMU type has been detected as enabled. */
    #define IOMMU_DETECTED (1<<1)
        int flags;
    };

In addition, the same file defines the macro that generates the global iommu_table_entry variables:

    /* Places the global variable in the ".iommu_table" section. */
    #define __IOMMU_INIT(_detect, _depend, _early_init, _late_init, _finish)\
        static const struct iommu_table_entry \
        __iommu_entry_##_detect __used \
        __attribute__ ((unused, __section__(".iommu_table"), \
        aligned((sizeof(void *))))) \
        = {_detect, _depend, _early_init, _late_init, \
        _finish ? IOMMU_FINISH_IF_DETECTED : 0}

The other macros, IOMMU_INIT_POST/IOMMU_INIT_POST_FINISH and IOMMU_INIT_FINISH/IOMMU_INIT, are all wrappers around __IOMMU_INIT.
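
The section-based registration used by __IOMMU_INIT can be tried out in user space. The sketch below assumes GCC or Clang targeting ELF (for example Linux), where the linker provides `__start_<section>` and `__stop_<section>` symbols for sections whose names are valid C identifiers; the kernel instead defines `__iommu_table` and `__iommu_table_end` in its linker script. All `sect_*` and `SECT_*` names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

typedef int (*sect_initcall_t)(void);

struct sect_entry {
    sect_initcall_t detect;
    int flags;
};

#define SECT_FINISH_IF_DETECTED (1 << 0)

/* Emit one table entry into the "sect_table" section, one variable per detect routine. */
#define SECT_INIT(_detect, _finish)                                         \
    static const struct sect_entry __sect_entry_##_detect                   \
    __attribute__((used, section("sect_table"), aligned(sizeof(void *))))   \
    = { _detect, (_finish) ? SECT_FINISH_IF_DETECTED : 0 }

int sect_detect_a(void) { return 0; } /* pretend this IOMMU type is absent */
int sect_detect_b(void) { return 1; } /* pretend this IOMMU type is present */

SECT_INIT(sect_detect_a, 0);
SECT_INIT(sect_detect_b, 1);

/* Provided by the linker on ELF targets (assumption of this sketch). */
extern const struct sect_entry __start_sect_table[];
extern const struct sect_entry __stop_sect_table[];

/* Number of entries the linker collected into the section. */
size_t sect_table_count(void)
{
    return (size_t)(__stop_sect_table - __start_sect_table);
}

/* Walk the table the same way a consumer would, counting successful detects. */
int sect_count_detected(void)
{
    const struct sect_entry *p;
    int hits = 0;

    assert(__start_sect_table <= __stop_sect_table);
    for (p = __start_sect_table; p < __stop_sect_table; p++)
        if (p->detect && p->detect() > 0)
            hits++;
    return hits;
}
```

The benefit of this design is that each IOMMU driver registers itself at its own definition site, and the code that walks the table needs no central list. The order of entries inside the section is not something the sketch relies on.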

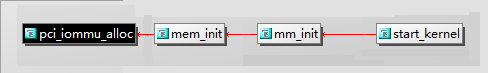

After the kernel is loaded, IOMMU initialization runs through the call chain start_kernel->mm_init->mem_init->pci_iommu_alloc.

The pci_iommu_alloc function is defined in arch/x86/kernel/pci-dma.c:

    void __init pci_iommu_alloc(void)
    {
        struct iommu_table_entry *p;

        /* First, sort the function sets of all IOMMU types. */
        sort_iommu_table(__iommu_table, __iommu_table_end);
        /*
         * If an entry's depend equals its own detect, depend is set to NULL;
         * if a sorted entry comes before the IOMMU it depends on, an error is reported.
         */
        check_iommu_entries(__iommu_table, __iommu_table_end);

        for (p = __iommu_table; p < __iommu_table_end; p++) {
            if (p && p->detect && p->detect() > 0) {
                p->flags |= IOMMU_DETECTED;
                if (p->early_init)
                    p->early_init();
                if (p->flags & IOMMU_FINISH_IF_DETECTED)
                    break;
            }
        }
    }

Finally, pci_iommu_init calls late_init to complete the remaining IOMMU initialization. This function is also defined in arch/x86/kernel/pci-dma.c:

    static int __init pci_iommu_init(void)
    {
        struct iommu_table_entry *p;

        x86_init.iommu.iommu_init();

        for (p = __iommu_table; p < __iommu_table_end; p++) {
            if (p && (p->flags & IOMMU_DETECTED) && p->late_init)
                p->late_init();
        }

        return 0;
    }
    /* Must execute after PCI subsystem */
    rootfs_initcall(pci_iommu_init);
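
The two phases shown above (detect plus early_init in pci_iommu_alloc, then late_init in pci_iommu_init) can be modelled in user space with an ordinary array in place of the linker-built table. This is a simplified sketch: sorting by depend is left out, and every `toy_*` and `TOY_*` name is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_FINISH_IF_DETECTED (1 << 0)
#define TOY_DETECTED           (1 << 1)

struct toy_iommu_entry {
    int  (*detect)(void);
    void (*early_init)(void);
    void (*late_init)(void);
    int  flags;
};

int toy_late_calls; /* counts late_init invocations, for illustration only */

int  toy_detect_yes(void) { return 1; }
int  toy_detect_no(void)  { return 0; }
void toy_late(void)       { toy_late_calls++; }

/* Phase 1: probe each entry in order and mark the ones that are present. */
void toy_iommu_alloc(struct toy_iommu_entry *tbl, size_t n)
{
    size_t i;

    assert(tbl != NULL || n == 0);
    for (i = 0; i < n; i++) {
        struct toy_iommu_entry *p = &tbl[i];

        if (p->detect && p->detect() > 0) {
            p->flags |= TOY_DETECTED;
            if (p->early_init)
                p->early_init();
            /* A "finish" entry stops the scan, so later entries are never probed. */
            if (p->flags & TOY_FINISH_IF_DETECTED)
                break;
        }
    }
}

/* Phase 2: run late_init only for entries marked in phase 1; returns how many ran. */
int toy_iommu_init(struct toy_iommu_entry *tbl, size_t n)
{
    size_t i;
    int called = 0;

    assert(tbl != NULL || n == 0);
    for (i = 0; i < n; i++) {
        if ((tbl[i].flags & TOY_DETECTED) && tbl[i].late_init) {
            tbl[i].late_init();
            called++;
        }
    }
    return called;
}
```

With a table of three entries where the first is absent, the second is present and carries the finish flag, and the third is present, only the second entry ends up marked as detected, so only its late_init runs.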

The related concepts are as follows:

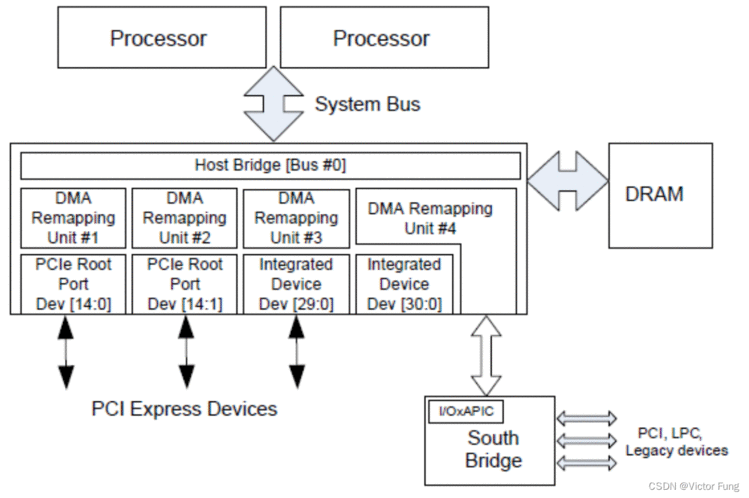

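The figure below shows an x86 physical server:

As the figure above shows, the host bridge contains several DMA Remapping Units. Each unit handles the DMA requests of the devices it manages and translates their device virtual addresses into device physical addresses. In the figure, DMA Remapping Unit #1 manages PCIe Root Port Dev[14:0] and the devices below it; likewise, DMA Remapping Unit #4 manages PCIe Root Port Dev[30:0], the devices below it, and the south bridge devices.

Note: the BIOS describes this management information through the DMA Remapping Reporting Structure in the ACPI tables.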

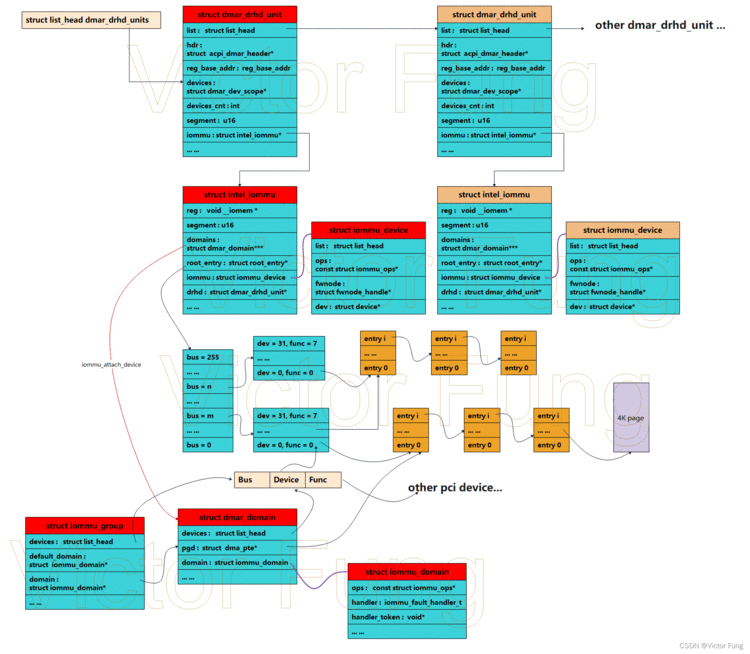

The Linux data structures related to the Intel IOMMU are as follows:

IOMMU_INIT_POST(detect_intel_iommu) registers the detect routine for intel-iommu and ensures that it runs after pci_swiotlb_detect_4gb. detect_intel_iommu sets the global callback x86_init.iommu.iommu_init to intel_iommu_init, and pci_iommu_init later invokes that callback (that is, intel_iommu_init).
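
The hand-off described above, where a detect routine installs a callback that a later init stage invokes, can be reduced to a function pointer in a global ops structure. The sketch below is a user-space model only; the default no-op, the `toy_hw_present` switch, and the return value 42 are assumptions made for illustration, and all `toy_*` names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Stand-in for the iommu part of x86_init. */
struct toy_x86_init_iommu {
    int (*iommu_init)(void);
};

int toy_iommu_init_noop(void) { return 0; }

/* The hook starts out pointing at a no-op. */
struct toy_x86_init_iommu toy_x86_iommu = { toy_iommu_init_noop };

int toy_hw_present = 1; /* pretend the platform reports remapping hardware */

/* Stand-in for the vendor-specific init routine. */
int toy_vendor_iommu_init(void) { return 42; }

/* Detect routine: on success it installs the vendor init as the global hook. */
int toy_detect_vendor_iommu(void)
{
    if (!toy_hw_present)
        return 0;
    toy_x86_iommu.iommu_init = toy_vendor_iommu_init;
    return 1;
}

/* Later init stage: calls whatever hook is installed at that point. */
int toy_pci_iommu_init(void)
{
    assert(toy_x86_iommu.iommu_init != NULL);
    return toy_x86_iommu.iommu_init();
}
```

Because the late stage only calls through the pointer, generic code never needs to know which IOMMU driver, if any, was detected.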

    int __init intel_iommu_init(void)
    {
        /* ... omitted ... */
        if (iommu_init_mempool()) {
            if (force_on)
                panic("tboot: Failed to initialize iommu memory\n");
            return -ENOMEM;
        }

        down_write(&dmar_global_lock);
        if (dmar_table_init()) {
            if (force_on)
                panic("tboot: Failed to initialize DMAR table\n");
            goto out_free_dmar;
        }

        if (dmar_dev_scope_init() < 0) {
            if (force_on)
                panic("tboot: Failed to initialize DMAR device scope\n");
            goto out_free_dmar;
        }

        up_write(&dmar_global_lock);

        dmar_register_bus_notifier();

        down_write(&dmar_global_lock);
        /* ... omitted ... */
        if (dmar_init_reserved_ranges()) {
            if (force_on)
                panic("tboot: Failed to reserve iommu ranges\n");
            goto out_free_reserved_range;
        }

        if (dmar_map_gfx)
            intel_iommu_gfx_mapped = 1;

        init_no_remapping_devices();

        ret = init_dmars();
        if (ret) {
            if (force_on)
                panic("tboot: Failed to initialize DMARs\n");
            pr_err("Initialization failed\n");
            goto out_free_reserved_range;
        }
        up_write(&dmar_global_lock);

    #if defined(CONFIG_X86) && defined(CONFIG_SWIOTLB)
        if (!has_untrusted_dev() || intel_no_bounce)
            swiotlb = 0;
    #endif
        dma_ops = &intel_dma_ops;

        init_iommu_pm_ops();

        down_read(&dmar_global_lock);
        for_each_active_iommu(iommu, drhd) {
            iommu_device_sysfs_add(&iommu->iommu, NULL,
                                   intel_iommu_groups,
                                   "%s", iommu->name);
            iommu_device_set_ops(&iommu->iommu, &intel_iommu_ops);
            iommu_device_register(&iommu->iommu);
        }
        up_read(&dmar_global_lock);

        bus_set_iommu(&pci_bus_type, &intel_iommu_ops);
        if (si_domain && !hw_pass_through)
            register_memory_notifier(&intel_iommu_memory_nb);
        cpuhp_setup_state(CPUHP_IOMMU_INTEL_DEAD, "iommu/intel:dead", NULL,
                          intel_iommu_cpu_dead);

        down_read(&dmar_global_lock);
        if (probe_acpi_namespace_devices())
            pr_warn("ACPI name space devices didn't probe correctly\n");

        /* Finally, we enable the DMA remapping hardware. */
        for_each_iommu(iommu, drhd) {
            if (!drhd->ignored && !translation_pre_enabled(iommu))
                iommu_enable_translation(iommu);

            iommu_disable_protect_mem_regions(iommu);
        }
        up_read(&dmar_global_lock);

        pr_info("Intel(R) Virtualization Technology for Directed I/O\n");

        intel_iommu_enabled = 1;

        return 0;

    out_free_reserved_range:
        /* ... omitted ... */
    out_free_dmar:
        /* ... omitted ... */
    }

The core flow is as follows:

iommu_group_get_for_dev looks up the iommu_group of a device. If none exists, it creates a new iommu_group and adds the device to it:

    struct iommu_group *iommu_group_get_for_dev(struct device *dev)
    {
        const struct iommu_ops *ops = dev->bus->iommu_ops;
        /* ... omitted ... */
        group = iommu_group_get(dev);
        if (group)
            return group;
        /* ... omitted ... */
        group = ops->device_group(dev);
        /* ... omitted ... */
        if (!group->default_domain) {
            struct iommu_domain *dom;

            dom = __iommu_domain_alloc(dev->bus, iommu_def_domain_type);
            if (!dom && iommu_def_domain_type != IOMMU_DOMAIN_DMA) {
                dom = __iommu_domain_alloc(dev->bus, IOMMU_DOMAIN_DMA);
                /* ... omitted ... */
            }

            group->default_domain = dom;
            if (!group->domain)
                group->domain = dom;

            if (dom && !iommu_dma_strict) {
                int attr = 1;
                iommu_domain_set_attr(dom,
                                      DOMAIN_ATTR_DMA_USE_FLUSH_QUEUE,
                                      &attr);
            }
        }

        ret = iommu_group_add_device(group, dev);
        if (ret) {
            iommu_group_put(group);
            return ERR_PTR(ret);
        }

        return group;
    }

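The function creates the iommu_group through ops->device_group, then creates a default struct iommu_domain and assigns it to the group's default_domain and domain fields. When an iommu_group is passed through to a virtual machine, its domain points to the virtual machine's domain instead.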
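
The get-or-create behaviour and the relationship between default_domain and domain can be modelled in a few lines of user-space C. This is a deliberately reduced sketch: it always allocates a fresh group where the kernel would call ops->device_group, it keeps no reference counts, and all `toy_*` and `TOY_*` names are hypothetical.

```c
#include <assert.h>
#include <stdlib.h>

#define TOY_DOMAIN_DMA 1

struct toy_domain {
    int type;
};

struct toy_group {
    struct toy_domain *default_domain; /* created together with the group */
    struct toy_domain *domain;         /* currently attached domain */
    int nr_devices;
};

struct toy_device {
    struct toy_group *group;
};

/* Return the device's group, creating the group and its default domain on first use. */
struct toy_group *toy_group_get_for_dev(struct toy_device *dev)
{
    struct toy_group *group;

    assert(dev != NULL);

    /* Fast path: the device already belongs to a group. */
    if (dev->group)
        return dev->group;

    /* Stand-in for ops->device_group(): always a new, empty group here. */
    group = calloc(1, sizeof(*group));
    if (!group)
        return NULL;

    if (!group->default_domain) {
        struct toy_domain *dom = calloc(1, sizeof(*dom));

        if (dom)
            dom->type = TOY_DOMAIN_DMA;
        group->default_domain = dom;
        /* The active domain starts out as the default one. */
        if (!group->domain)
            group->domain = dom;
    }

    /* Stand-in for iommu_group_add_device(). */
    dev->group = group;
    group->nr_devices++;
    return group;
}
```

Re-pointing `domain` at another domain object while leaving `default_domain` untouched mirrors the passthrough case mentioned above, where the group's domain becomes the virtual machine's domain.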

In the intel-iommu implementation, the ops->device_group callback is implemented by intel_iommu_device_group. For a PCI device this function calls pci_device_group; otherwise it calls generic_device_group.

The pci_device_group function is shown below:

    struct iommu_group *pci_device_group(struct device *dev)
    {
        /* ... omitted ... */
        u64 devfns[4] = { 0 };
        /* ... omitted ... */
        if (pci_for_each_dma_alias(pdev, get_pci_alias_or_group, &data))
            return data.group;

        pdev = data.pdev;

        for (bus = pdev->bus; !pci_is_root_bus(bus); bus = bus->parent) {
            if (!bus->self)
                continue;

            if (pci_acs_path_enabled(bus->self, NULL, REQ_ACS_FLAGS))
                break;

            pdev = bus->self;

            group = iommu_group_get(&pdev->dev);
            if (group)
                return group;
        }

        group = get_pci_alias_group(pdev, (unsigned long *)devfns);
        if (group)
            return group;

        group = get_pci_function_alias_group(pdev, (unsigned long *)devfns);
        if (group)
            return group;

        /* No shared group found, allocate new */
        return iommu_group_alloc();
    }

The execution flow is as follows:

pci_for_each_dma_alias iterates according to the following rules:

    int pci_for_each_dma_alias(struct pci_dev *pdev,
                               int (*fn)(struct pci_dev *pdev,
                                         u16 alias, void *data), void *data)
    {
        /* ... omitted ... */
        ret = fn(pdev, pci_dev_id(pdev), data);
        if (ret)
            return ret;

        if (unlikely(pdev->dma_alias_mask)) {
            unsigned int devfn;

            for_each_set_bit(devfn, pdev->dma_alias_mask, MAX_NR_DEVFNS) {
                ret = fn(pdev, PCI_DEVID(pdev->bus->number, devfn),
                         data);
                if (ret)
                    return ret;
            }
        }

        for (bus = pdev->bus; !pci_is_root_bus(bus); bus = bus->parent) {
            struct pci_dev *tmp;

            /* Skip virtual buses */
            if (!bus->self)
                continue;

            tmp = bus->self;

            /* stop at bridge where translation unit is associated */
            if (tmp->dev_flags & PCI_DEV_FLAGS_BRIDGE_XLATE_ROOT)
                return ret;

            if (pci_is_pcie(tmp)) {
                switch (pci_pcie_type(tmp)) {
                case PCI_EXP_TYPE_ROOT_PORT:
                case PCI_EXP_TYPE_UPSTREAM:
                case PCI_EXP_TYPE_DOWNSTREAM:
                    continue;
                case PCI_EXP_TYPE_PCI_BRIDGE:
                    ret = fn(tmp,
                             PCI_DEVID(tmp->subordinate->number,
                                       PCI_DEVFN(0, 0)), data);
                    if (ret)
                        return ret;
                    continue;
                case PCI_EXP_TYPE_PCIE_BRIDGE:
                    ret = fn(tmp, pci_dev_id(tmp), data);
                    if (ret)
                        return ret;
                    continue;
                }
            } else {
                if (tmp->dev_flags & PCI_DEV_FLAG_PCIE_BRIDGE_ALIAS)
                    ret = fn(tmp,
                             PCI_DEVID(tmp->subordinate->number,
                                       PCI_DEVFN(0, 0)), data);
                else
                    ret = fn(tmp, pci_dev_id(tmp), data);
                if (ret)
                    return ret;
            }
        }

        return ret;
    }

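The execution flow is as follows:

Note: on a PCIe bus, PCIe-to-PCI and PCI-to-PCIe bridges have to be checked because, once a PCI bus is attached to PCIe, all devices below the bridge share a single source identifier (built from the bridge's bus, device, and function numbers).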
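
To make the shared source identifier concrete, the sketch below rebuilds the 16-bit ID from bus, device, and function numbers and shows how two devices behind a PCIe-to-PCI bridge end up with the same alias. The `TOY_PCI_DEVFN` and `TOY_PCI_DEVID` macros are user-space copies written to mirror the bit layout of the kernel's PCI_DEVFN and PCI_DEVID; the helper name and the bus numbers are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* devfn: 5 bits of device (slot) number, 3 bits of function number. */
#define TOY_PCI_DEVFN(slot, func) ((((slot) & 0x1f) << 3) | ((func) & 0x07))
/* Source identifier: bus number in the high byte, devfn in the low byte. */
#define TOY_PCI_DEVID(bus, devfn) ((uint16_t)((((uint16_t)(bus)) << 8) | (devfn)))

/*
 * Alias reported for anything below a PCIe-to-PCI bridge, following the
 * PCI_EXP_TYPE_PCI_BRIDGE case in the listing above: the bus number of the
 * bridge's subordinate bus combined with device 0, function 0.
 */
uint16_t toy_alias_behind_pcie_to_pci_bridge(uint8_t subordinate_bus)
{
    uint16_t alias = TOY_PCI_DEVID(subordinate_bus, TOY_PCI_DEVFN(0, 0));

    /* devfn 0.0 leaves the low byte clear. */
    assert((alias & 0xff) == 0);
    return alias;
}
```

For example, devices 05:01.0 and 05:02.0 have distinct IDs of their own (0x0508 and 0x0510), but if bus 5 sits below a PCIe-to-PCI bridge, both are reported with the alias 0x0500. The IOMMU cannot tell them apart, which is why they must share one iommu_group.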

