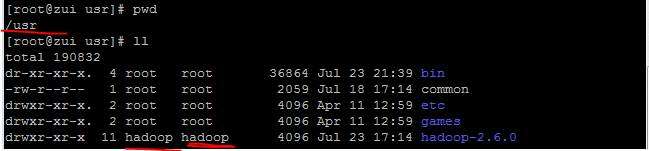

1. I couldn't get 3.0.3 working, so I uploaded the 2.6.0 tar.gz to /usr, extracted it, ran `chown -R hadoop:hadoop hadoop-2.6.0`, and removed 3.0.3 with `rm`.

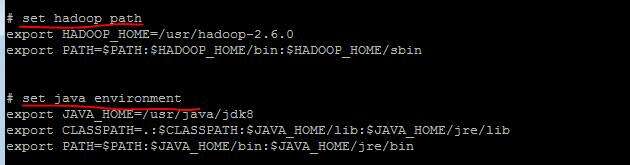
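If you'd rather script this step, here is a minimal Python sketch of what it amounts to: unpack the tarball under a target directory and, optionally, hand the result to `hadoop:hadoop` the way `chown -R` does. The helper name `install_tarball` is made up for illustration, and changing ownership requires root.

```python
import shutil
import tarfile
from pathlib import Path


def install_tarball(tar_path, dest_dir, owner=None, group=None):
    """Unpack a release tarball into dest_dir; optionally chown -R the result.

    Returns the top-level directory created by the archive (e.g. hadoop-2.6.0).
    Changing ownership requires root, exactly like `chown -R`.
    """
    dest = Path(dest_dir)
    with tarfile.open(tar_path, "r:gz") as tar:
        # Every member should live under a single release directory.
        tops = {Path(m.name).parts[0] for m in tar.getmembers() if Path(m.name).parts}
        if len(tops) != 1:
            raise ValueError(f"expected one top-level directory, got {sorted(tops)}")
        tar.extractall(dest)
    top = dest / tops.pop()
    if owner is not None:
        # Change owner/group of the directory and everything below it.
        for path in [top, *top.rglob("*")]:
            shutil.chown(path, user=owner, group=group)
    return top


# Hypothetical usage, as root:
# install_tarball("/usr/hadoop-2.6.0.tar.gz", "/usr", "hadoop", "hadoop")
```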

2. Configure the Java and Hadoop environment variables in /etc/profile.
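For reference, the /etc/profile lines usually look like the ones this small sketch builds. `profile_exports` is a hypothetical helper; the JDK path is an assumption (only the Java version, 1.8.0_152, appears in the log), while `/usr/hadoop-2.6.0` matches the storage directory in the format output. After appending the lines, run `source /etc/profile` in the current shell.

```python
def profile_exports(java_home, hadoop_home):
    """Build the export lines to append to /etc/profile for Java and Hadoop."""
    return "\n".join([
        f"export JAVA_HOME={java_home}",
        f"export HADOOP_HOME={hadoop_home}",
        # bin holds hadoop/hdfs/yarn; sbin holds start-dfs.sh and start-yarn.sh.
        "export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin",
    ]) + "\n"


if __name__ == "__main__":
    # /usr/java/jdk1.8.0_152 is a hypothetical JDK location.
    print(profile_exports("/usr/java/jdk1.8.0_152", "/usr/hadoop-2.6.0"), end="")
```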

Configure passwordless SSH login (see my earlier notes).

3. Configuration files

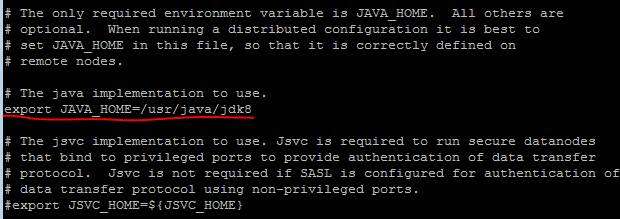

Set the Java environment (JAVA_HOME) in hadoop-env.sh.

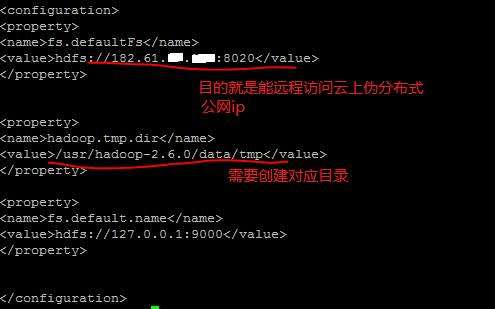
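In practice this usually means replacing the stock `export JAVA_HOME=${JAVA_HOME}` line with an absolute JDK path; a commonly cited reason is that daemons launched over ssh by start-dfs.sh may not inherit JAVA_HOME from your login shell. The sketch below performs that edit on the file's text. `set_java_home` is a hypothetical helper and the JDK path is a placeholder.

```python
import re

# Matches an active (uncommented) JAVA_HOME export line.
_JAVA_HOME_LINE = re.compile(r"^export JAVA_HOME=.*$", re.MULTILINE)


def set_java_home(env_sh_text, java_home):
    """Return hadoop-env.sh contents with JAVA_HOME pinned to an absolute path."""
    line = f"export JAVA_HOME={java_home}"
    if _JAVA_HOME_LINE.search(env_sh_text):
        # A function replacement keeps re from interpreting backslashes in the path.
        return _JAVA_HOME_LINE.sub(lambda _m: line, env_sh_text, count=1)
    # No export line found: append one, keeping the file newline-terminated.
    if env_sh_text and not env_sh_text.endswith("\n"):
        env_sh_text += "\n"
    return env_sh_text + line + "\n"


# Hypothetical usage:
# p = Path("/usr/hadoop-2.6.0/etc/hadoop/hadoop-env.sh")
# p.write_text(set_java_home(p.read_text(), "/usr/java/jdk1.8.0_152"))
```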

core-site.xml

The official docs don't mention configuring port 9000, but if you leave it out, start-dfs.sh fails with the following error:

Incorrect configuration: namenode address dfs.namenode.servicerpc-address or dfs.namenode.rpc-address is not configured.

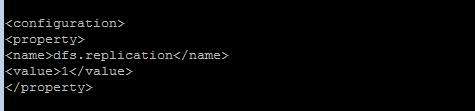
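A sketch of a core-site.xml that includes the port 9000 address, generated with Python's standard `xml.etree.ElementTree`. Assumptions: the property is `fs.defaultFS` (the Hadoop 2.x name for the default file system URI), the host is `localhost` (substitute your own; the machine in the log is `zui`), and `hadoop.tmp.dir` is inferred from the format log, where the name directory `/usr/hadoop-2.6.0/data/tmp/dfs/name` matches the `${hadoop.tmp.dir}/dfs/name` default. The `site_xml` helper is illustrative.

```python
import xml.etree.ElementTree as ET


def site_xml(properties):
    """Render a Hadoop *-site.xml document from a {name: value} dict."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    ET.indent(root)  # pretty-print; needs Python 3.9+
    return '<?xml version="1.0"?>\n' + ET.tostring(root, encoding="unicode") + "\n"


core_site = site_xml({
    # NameNode RPC address (assumed host); without an hdfs:// URI here,
    # start-dfs.sh reports "namenode address ... is not configured".
    "fs.defaultFS": "hdfs://localhost:9000",
    # Inferred from the format log's storage directory.
    "hadoop.tmp.dir": "/usr/hadoop-2.6.0/data/tmp",
})
```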

hdfs-site.xml

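| Parameter | Description | Default | Config file | Example value |
| --- | --- | --- | --- | --- |
| dfs.name.dir (2.x name: dfs.namenode.name.dir) | Comma-separated directories where the NameNode stores its metadata; HDFS writes a redundant copy to each of them. They are usually on different block devices; directories that don't exist are ignored. | ${hadoop.tmp.dir}/dfs/name | hdfs-site.xml | /hadoop/hdfs/name |
| dfs.name.edits.dir (2.x name: dfs.namenode.edits.dir) | Comma-separated directories where the NameNode stores its transaction (edits) files; HDFS writes a redundant copy to each of them. They are usually on different block devices; directories that don't exist are ignored. | ${dfs.name.dir}/current (?) | hdfs-site.xml | ${ |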
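The `${...}` in the Default column is variable substitution: a value can refer to another property. The sketch below is a simplified, hypothetical re-implementation (Hadoop's own `Configuration` class does more, for example it also consults Java system properties); it shows how `${hadoop.tmp.dir}/dfs/name` resolves and how a comma-separated directory list splits. The `hadoop.tmp.dir` value is the one implied by the format log in step 4.

```python
import re

_VAR = re.compile(r"\$\{([^}]+)\}")


def expand(value, props, depth=0):
    """Replace ${name} with props[name], recursively; unknown names stay as-is."""
    if depth > 20:
        raise ValueError("variable expansion too deep (cycle?)")

    def repl(match):
        name = match.group(1)
        if name not in props:
            return match.group(0)
        return expand(props[name], props, depth + 1)

    return _VAR.sub(repl, value)


def dir_list(value, props):
    """Split a comma-separated directory setting such as dfs.name.dir and expand it."""
    return [expand(d.strip(), props) for d in value.split(",") if d.strip()]


props = {
    "hadoop.tmp.dir": "/usr/hadoop-2.6.0/data/tmp",
    "dfs.name.dir": "${hadoop.tmp.dir}/dfs/name",
}
```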

4. Format the file system

`# hadoop namenode -format` (as the output below notes, this form is deprecated; `hdfs namenode -format` is the replacement)

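[root@zui hadoop]# hadoop namenode -format (I ran this as root, so the directories it creates belong to root. As a result, start-dfs.sh could not start the NameNode / DataNode / SecondaryNameNode unless it was also run as root; YARN was not affected.)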

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

18/07/23 17:03:28 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = zui/182.61.17.191

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.6.0

STARTUP_MSG: classpath = [paths of the various jar files omitted]

STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r e3496499ecb8d220fba99dc5ed4c99c8f9e33bb1; compiled by 'jenkins' on 2014-11-13T21:10Z

STARTUP_MSG: java = 1.8.0_152

************************************************************/

18/07/23 17:03:29 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

18/07/23 17:03:29 INFO namenode.NameNode: createNameNode [-format]

Formatting using clusterid: CID-cb98355b-6a1d-47a2-964c-48dc32752b55

18/07/23 17:03:30 INFO namenode.FSNamesystem: No KeyProvider found.

18/07/23 17:03:30 INFO namenode.FSNamesystem: fsLock is fair:true

18/07/23 17:03:30 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000

18/07/23 17:03:30 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

18/07/23 17:03:30 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000

18/07/23 17:03:30 INFO blockmanagement.BlockManager: The block deletion will start around 2018 Jul 23 17:03:30

18/07/23 17:03:30 INFO util.GSet: Computing capacity for map BlocksMap

18/07/23 17:03:30 INFO util.GSet: VM type = 64-bit

18/07/23 17:03:30 INFO util.GSet: 2.0% max memory 966.7 MB = 19.3 MB

18/07/23 17:03:30 INFO util.GSet: capacity = 2^21 = 2097152 entries

18/07/23 17:03:30 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

18/07/23 17:03:30 INFO blockmanagement.BlockManager: defaultReplication= 1

18/07/23 17:03:30 INFO blockmanagement.BlockManager: maxReplication= 512

18/07/23 17:03:30 INFO blockmanagement.BlockManager: minReplication= 1

18/07/23 17:03:30 INFO blockmanagement.BlockManager: maxReplicationStreams= 2

18/07/23 17:03:30 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false

18/07/23 17:03:30 INFO blockmanagement.BlockManager: replicationRecheckInterval= 3000

18/07/23 17:03:30 INFO blockmanagement.BlockManager: encryptDataTransfer= false

18/07/23 17:03:30 INFO blockmanagement.BlockManager: maxNumBlocksToLog= 1000

18/07/23 17:03:30 INFO namenode.FSNamesystem: fsOwner = root (auth:SIMPLE)

18/07/23 17:03:30 INFO namenode.FSNamesystem: supergroup = supergroup

18/07/23 17:03:30 INFO namenode.FSNamesystem: isPermissionEnabled = true

18/07/23 17:03:30 INFO namenode.FSNamesystem: HA Enabled: false

18/07/23 17:03:30 INFO namenode.FSNamesystem: Append Enabled: true

18/07/23 17:03:31 INFO util.GSet: Computing capacity for map INodeMap

18/07/23 17:03:31 INFO util.GSet: VM type = 64-bit

18/07/23 17:03:31 INFO util.GSet: 1.0% max memory 966.7 MB = 9.7 MB

18/07/23 17:03:31 INFO util.GSet: capacity = 2^20 = 1048576 entries

18/07/23 17:03:31 INFO namenode.NameNode: Caching file names occuring more than 10 times

18/07/23 17:03:31 INFO util.GSet: Computing capacity for map cachedBlocks

18/07/23 17:03:31 INFO util.GSet: VM type = 64-bit

18/07/23 17:03:31 INFO util.GSet: 0.25% max memory 966.7 MB = 2.4 MB

18/07/23 17:03:31 INFO util.GSet: capacity = 2^18 = 262144 entries

18/07/23 17:03:31 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

18/07/23 17:03:31 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0

18/07/23 17:03:31 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension= 30000

18/07/23 17:03:31 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

18/07/23 17:03:31 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

18/07/23 17:03:31 INFO util.GSet: Computing capacity for map NameNodeRetryCache

18/07/23 17:03:31 INFO util.GSet: VM type = 64-bit

18/07/23 17:03:31 INFO util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB

18/07/23 17:03:31 INFO util.GSet: capacity = 2^15 = 32768 entries

18/07/23 17:03:31 INFO namenode.NNConf: ACLs enabled? false

18/07/23 17:03:31 INFO namenode.NNConf: XAttrs enabled? true

18/07/23 17:03:31 INFO namenode.NNConf: Maximum size of an xattr: 16384

18/07/23 17:03:31 INFO namenode.FSImage: Allocated new BlockPoolId: BP-702429615-182.61.17.191-1532336611838

18/07/23 17:03:31 INFO common.Storage: Storage directory /usr/hadoop-2.6.0/data/tmp/dfs/name has been successfully formatted.

18/07/23 17:03:32 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

18/07/23 17:03:32 INFO util.ExitUtil: Exiting with status 0

18/07/23 17:03:32 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at zui/182.61.17.191

************************************************************/


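Formatting succeeded (note "has been successfully formatted" and "Exiting with status 0" near the end). I've pasted the whole output here; studying it in depth would mean analyzing it line by line.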
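To check the result without reading the whole log, it is enough to look for the two success lines in the output above. A hypothetical helper that returns whether both are present plus the formatted directories:

```python
import re

# The success marker printed once per formatted name directory.
_FORMATTED = re.compile(r"Storage directory (\S+) has been successfully formatted")


def check_format_log(output):
    """Return (ok, dirs): ok only if a directory was formatted and the exit status was 0."""
    dirs = _FORMATTED.findall(output)
    ok = bool(dirs) and "Exiting with status 0" in output
    return ok, dirs
```

The log lines are normally written to stderr, so capture the command with `2>&1` before passing its text in.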

5. Start HDFS

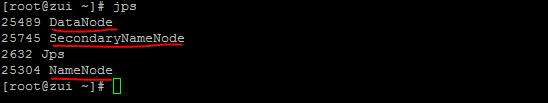

Run start-dfs.sh.

Check the result with jps.
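`jps` prints one `<pid> <main class>` line per JVM. A small hypothetical checker for the three HDFS daemons (the YARN tuple is for use after start-yarn.sh):

```python
HDFS_DAEMONS = ("NameNode", "DataNode", "SecondaryNameNode")
YARN_DAEMONS = ("ResourceManager", "NodeManager")


def parse_jps(output):
    """Map main-class name -> pid from jps lines such as '12345 NameNode'."""
    procs = {}
    for line in output.splitlines():
        parts = line.split()
        # Skip blanks and lines like '123 -- process information unavailable'.
        if len(parts) >= 2 and parts[0].isdigit() and parts[1] != "--":
            procs[parts[1]] = int(parts[0])
    return procs


def missing_daemons(output, expected=HDFS_DAEMONS):
    """Return the expected daemon names that do not appear in the jps output."""
    procs = parse_jps(output)
    return [name for name in expected if name not in procs]
```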

6. Open `http://<public-ip>:50070/` in a browser.

And here's a big screenshot, just because it feels good.
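If the page does not load, a quick first check is whether anything accepts connections on the port at all. Note that 50070 is the Hadoop 2.x NameNode web UI port; Hadoop 3.x moved it to 9870, which matters if you are coming from 3.0.3. On a cloud server the firewall or security group may also need to allow the port. A minimal standard-library sketch (`port_open` is a made-up name):

```python
import socket


def port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, unreachable, or DNS failure.
        return False


# Hypothetical usage: port_open("<public-ip>", 50070)
```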

Reference for the whole post: https://blog.csdn.net/liuge36/article/details/78353930

Any resemblance is entirely due to copying.

2018 07 23

Resource scheduling in Hadoop: YARN

mapred-site.xml

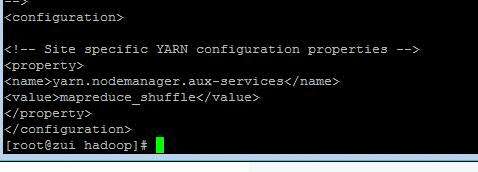

yarn-site.xml

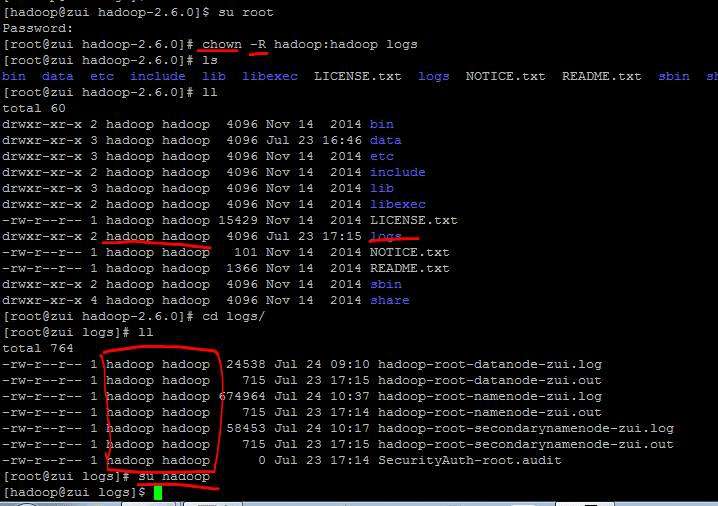

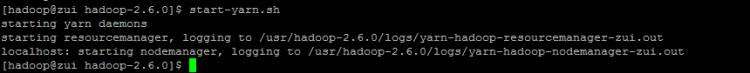

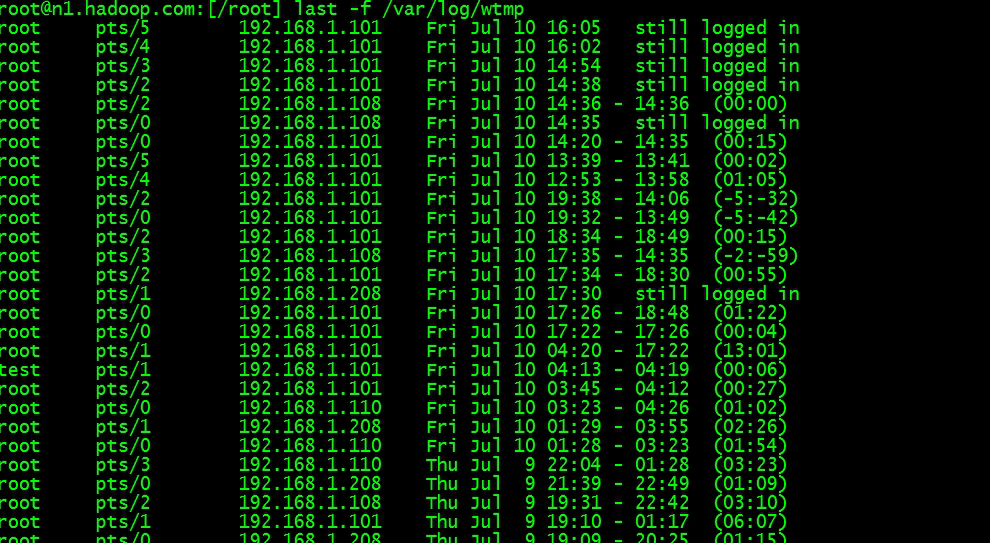
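The screenshots hold the actual values. As an assumption, the Apache single-node setup guide sets `mapreduce.framework.name=yarn` in mapred-site.xml (in 2.6.0 it ships as `mapred-site.xml.template`, which you copy) and `yarn.nodemanager.aux-services=mapreduce_shuffle` in yarn-site.xml. A hypothetical sketch that reads a *-site.xml and reports missing or different values:

```python
import xml.etree.ElementTree as ET

# Assumed values, taken from the Apache single-node (pseudo-distributed) guide.
REQUIRED = {
    "mapred-site.xml": {"mapreduce.framework.name": "yarn"},
    "yarn-site.xml": {"yarn.nodemanager.aux-services": "mapreduce_shuffle"},
}


def read_site_xml(text):
    """Parse a Hadoop *-site.xml into a {name: value} dict."""
    if isinstance(text, str):
        # ElementTree rejects str input that carries an encoding declaration.
        text = text.encode("utf-8")
    root = ET.fromstring(text)
    return {(p.findtext("name") or "").strip(): (p.findtext("value") or "").strip()
            for p in root.iter("property")}


def check_site(filename, text):
    """List (name, expected, actual) for every required property that is off."""
    props = read_site_xml(text)
    return [(name, want, props.get(name))
            for name, want in REQUIRED.get(filename, {}).items()
            if props.get(name) != want]
```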

Switch to the hadoop user and run start-yarn.sh. Passwordless SSH was configured for the hadoop user; as root you would have to type the password again and again.

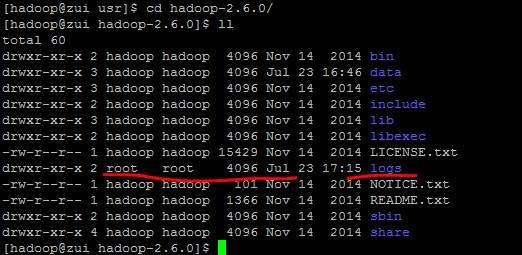

Because start-dfs.sh had earlier been run as root, the log files belong to root and the hadoop user gets "Permission denied".

Checking the ownership:

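Reassign the user and group of the logs directory to hadoop.

Tip: whichever user you configured passwordless login for, do every later Hadoop operation as that user:
1. As any other user you'll be asked for a password who knows how many times; a hundred operations with a hundred password prompts will drive you crazy.
2. If you used root at first and only later realize you should switch to the hadoop user, some generated files will already belong to root's user and group, and the hadoop user has no permission on them. If a check turns up 100 such files, with luck a single `chown -R` fixes it; without luck, try running `chown` 100 times yourself.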
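To see how many files the hadoop user would trip over, a hypothetical scan for paths not owned by a given user (Unix only, since it uses the `pwd` module). An empty result means one `chown -R` was enough.

```python
import pwd
from pathlib import Path


def foreign_owned(root_dir, user):
    """List paths under root_dir (inclusive) whose owner is not `user`."""
    uid = pwd.getpwnam(user).pw_uid
    root = Path(root_dir)
    # lstat so that symlinks are judged by their own owner, not their target's.
    return sorted(str(p) for p in [root, *root.rglob("*")]
                  if p.lstat().st_uid != uid)


# Hypothetical usage: foreign_owned("/usr/hadoop-2.6.0/logs", "hadoop")
```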

Run start-yarn.sh again.

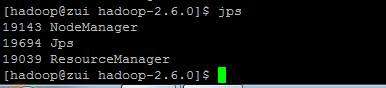

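Checking with jps: why don't the NameNode and DataNode processes show up, when http://182.61.**.***:50070 is still reachable? (Most likely because those daemons were started by root, and jps only lists the JVMs of the user who runs it.)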

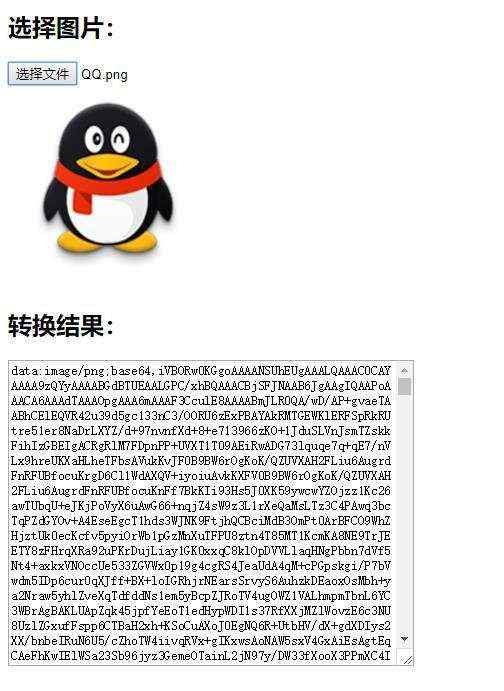

Enter the address in the browser. When you see the page below, the pseudo-distributed setup is complete.

京公网安备 11010802041100号 (Beijing public security Internet filing No. 11010802041100)
